Large language models are getting incredibly powerful, but let’s be honest—their inference speed is still a massive headache for anyone […]

Category: Large Language Model

Zyphra Introduces Tensor and Sequence Parallelism (TSP): A Hardware-Aware Training and Inference Strategy That Delivers 2.6x Throughput Over Matched TP+SP Baselines

Training and serving large transformer models at scale is fundamentally a memory management problem. Every GPU in a cluster has […]

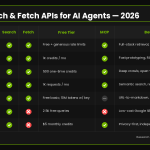

Top Search and Fetch APIs for Building AI Agents in 2026: Tools, Tradeoffs, and Free Tiers

Web search and content retrieval have quietly become the most critical infrastructure decisions in AI agent development. An agent without […]

A Developer’s Guide to Systematic Prompting: Mastering Negative Constraints, Structured JSON Outputs, and Multi-Hypothesis Verbalized Sampling

Most developers treat prompting as an afterthought—write something reasonable, observe the output, and iterate if needed. That approach works until […]

A Coding Implementation to Explore and Analyze the TaskTrove Dataset with Streaming Parsing Visualization and Verifier Detection

In this tutorial, we take a deep dive into the TaskTrove dataset on Hugging Face and build a complete, practical […]

Mistral AI Launches Remote Agents in Vibe and Mistral Medium 3.5 with 77.6% SWE-Bench Verified Score

Mistral AI has been quietly building one of the more practical coding agent ecosystems in the open-source/weights AI space, and […]

New NVIDIA Research Shows Speculative Decoding in NeMo RL Achieves 1.8× Rollout Generation Speedup at 8B and Projects 2.5× End-to-End Speedup at 235B

If you have been running reinforcement learning (RL) post-training on a language model for math reasoning, code generation, or any […]

Moonshot AI Open-Sources FlashKDA: CUTLASS Kernels for Kimi Delta Attention with Variable-Length Batching and H20 Benchmarks

The team behind Kimi.ai (Moonshot AI) just made a significant contribution to the open-source AI infrastructure space. The research team […]

Microsoft Research’s World-R1 Uses Flow-GRPO and 3D-Aware Rewards to Inject Geometric Consistency Into Wan 2.1 Without Architectural Changes

Video foundation models can paint a beautiful frame. They are still notoriously bad at remembering it. Push the camera through […]

Top 10 KV Cache Compression Techniques for LLM Inference: Reducing Memory Overhead Across Eviction, Quantization, and Low-Rank Methods

As large language models scale to longer context windows and serve more concurrent users, the key-value (KV) cache has emerged […]