Inference speed is becoming a competitive metric for large language models. Xiaomi’s MiMo team just released MiMo-V2.5-Pro-UltraSpeed, built in collaboration […]

Category: Machine Learning

The weather and climate science AI revolution isn’t revolutionary

Machine learning has its limits: how is it being used? Credit: Aurich Lawson | Getty Images […]

Building Reflective Prompt Optimization with GEPA: Multi-Component Prompts, Structured Feedback, and Held-Out Validation

In this tutorial, we use GEPA as a reflective prompt-evolution framework to improve the way a language model solves arithmetic […]

Google’s New Colab CLI Lets Developers and AI Agents Run Python on Remote Colab GPUs and TPUs From the Terminal

This week, the Google AI team released the Colab CLI. The tool connects your local terminal to remote Colab runtimes. It […]

NVIDIA AI Releases Dynamo Snapshot: A CRIU-Based Fast Startup System for AI Inference on Kubernetes

In production inference deployments, demand fluctuates over time, requiring inference replicas to scale elastically. Cold-starting inference workloads on Kubernetes can […]

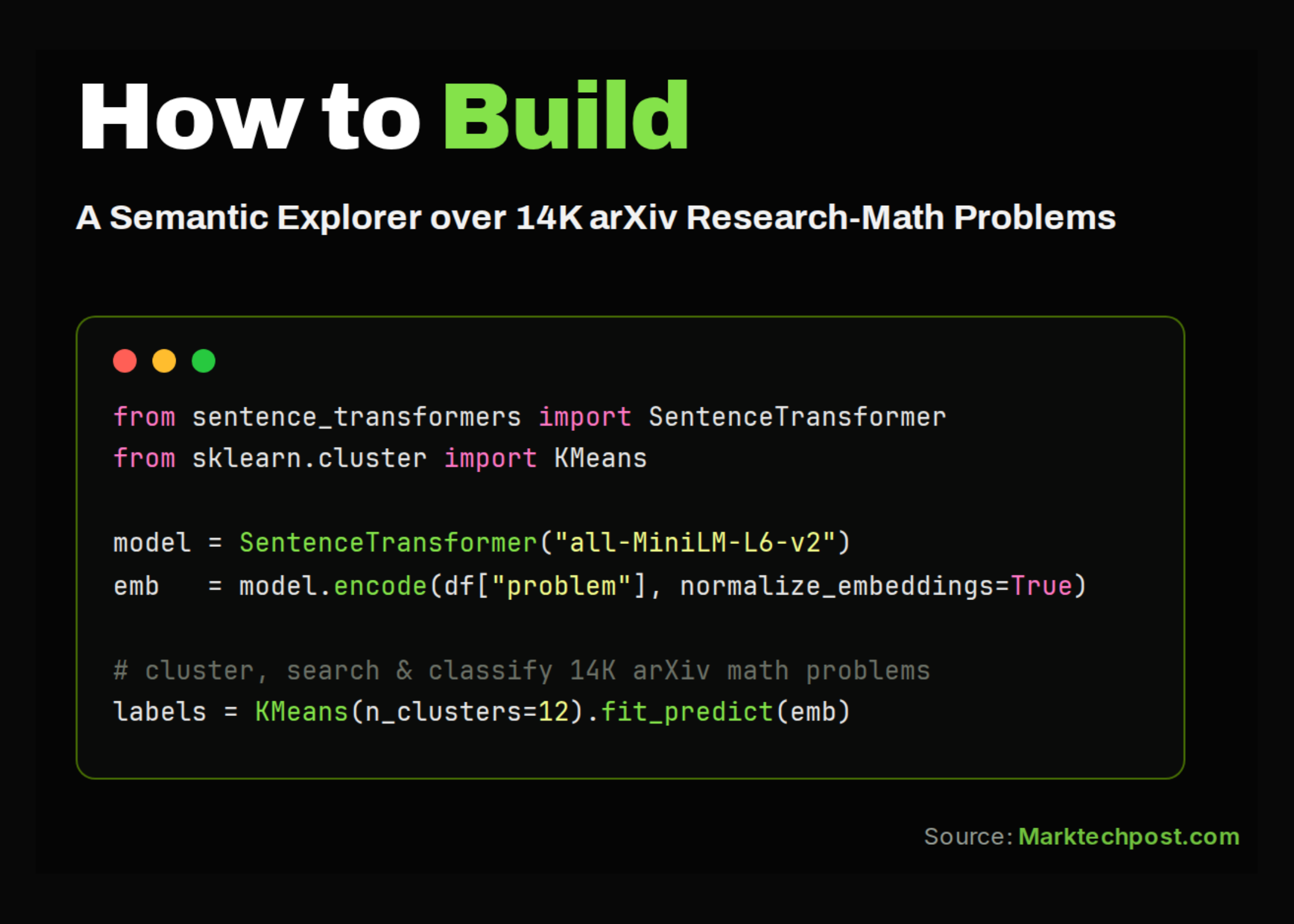

Building a Semantic Search Engine and Open-Status Classifier over the ResearchMath-14k Dataset

In this tutorial, we work with the amphora/ResearchMath-14k dataset, a collection of research-level mathematics problems mined from arXiv. We load […]

NVIDIA AI Releases Nemotron 3 Ultra: An Open 550B Mixture-of-Experts Hybrid Mamba-Transformer for Long-Running Agents

NVIDIA has released Nemotron 3 Ultra, the largest model in its Nemotron 3 family. It targets a specific problem: long-running […]

Meet OpenJarvis: A Local-First Framework for On-Device Personal AI Agents with Tools, Memory, and Learning

Researchers at Stanford University and Lambda Labs have published the research paper for OpenJarvis, an open-source framework that runs inference, […]

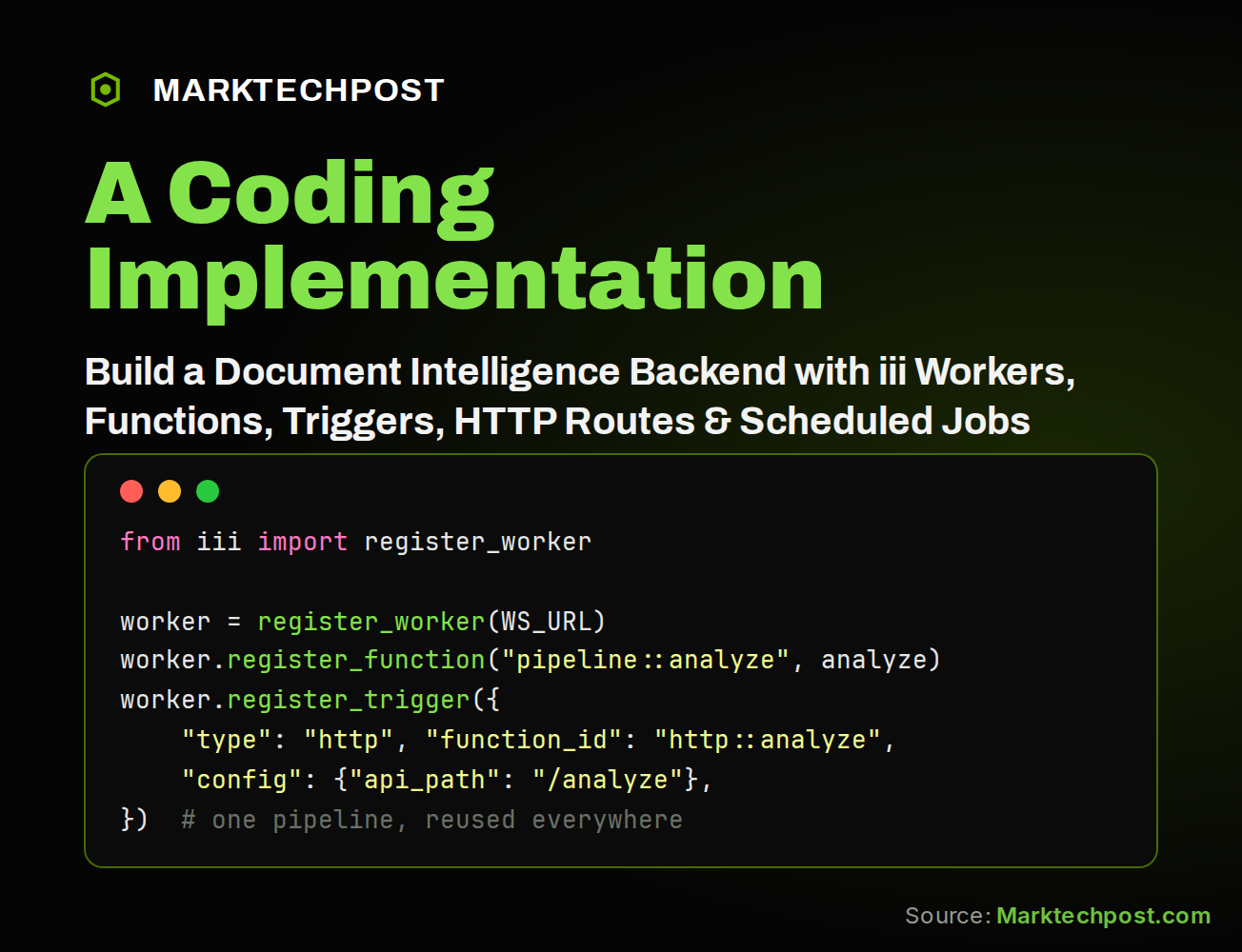

How to Build a Document Intelligence Backend with iii Using Workers, Functions, and Cron Triggers

In this tutorial, we build a document-intelligence workflow with iii. We begin by installing the iii engine and Python SDK, […]

Google DeepMind Releases Gemma 4 12B: An Encoder-Free Multimodal Model with Native Audio That Runs on a 16 GB Laptop

Google DeepMind just released Gemma 4 12B, a dense multimodal model that strips out traditional encoders entirely. Vision and audio […]