Serverless computing has significantly streamlined how developers build and deploy applications on cloud platforms like AWS. However, debugging and managing […]

Category: AI infrastructure

Allen Institute for AI (Ai2) Launches OLMoTrace: Real-Time Tracing of LLM Outputs Back to Training Data

Understanding the Limits of Language Model Transparency: As large language models (LLMs) become central to a growing number of applications, ranging […]

LLMs No Longer Require Powerful Servers: Researchers from MIT, KAUST, ISTA, and Yandex Introduce a New AI Approach to Rapidly Compress Large Language Models without a Significant Loss of Quality

HIGGS, an innovative method for compressing large language models, was developed in collaboration with teams at Yandex Research, MIT, […]

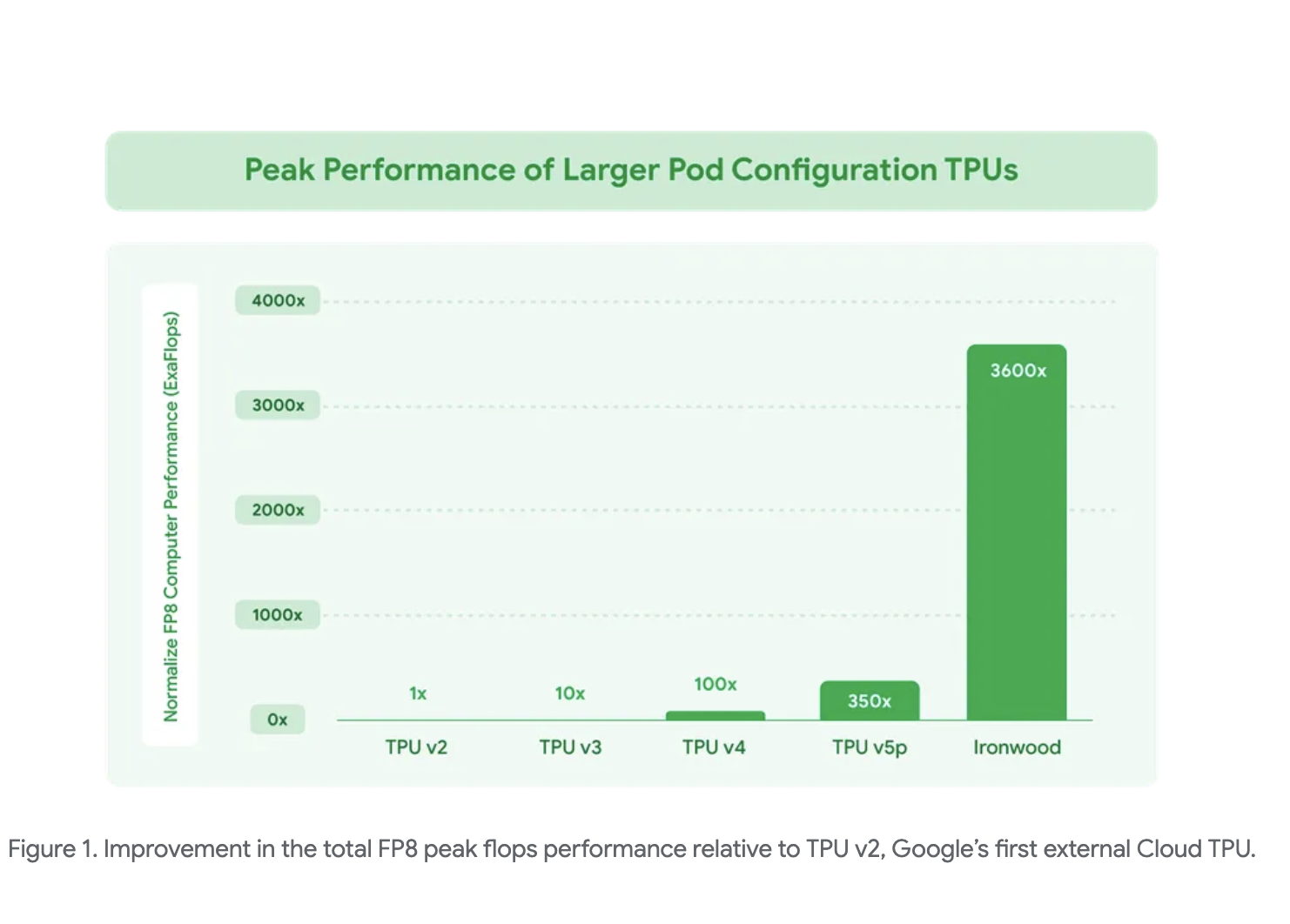

Google AI Introduces Ironwood: A Google TPU Purpose-Built for the Age of Inference

At the 2025 Google Cloud Next event, Google introduced Ironwood, its latest generation of Tensor Processing Units (TPUs), designed specifically […]

This AI Paper Introduces a Machine Learning Framework to Estimate the Inference Budget for Self-Consistency and GenRMs (Generative Reward Models)

Large Language Models (LLMs) have demonstrated significant advancements in reasoning capabilities across diverse domains, including mathematics and science. However, improving […]

This AI Paper from ByteDance Introduces MegaScale-Infer: A Disaggregated Expert Parallelism System for Efficient and Scalable MoE-Based LLM Serving

Large language models are built on transformer architectures and power applications like chat, code generation, and search, but their growing […]

Scalable and Principled Reward Modeling for LLMs: Enhancing Generalist Reward Models (RMs) with SPCT and Inference-Time Optimization

Reinforcement Learning (RL) has become a widely used post-training method for LLMs, enhancing capabilities like human alignment, long-term reasoning, and […]

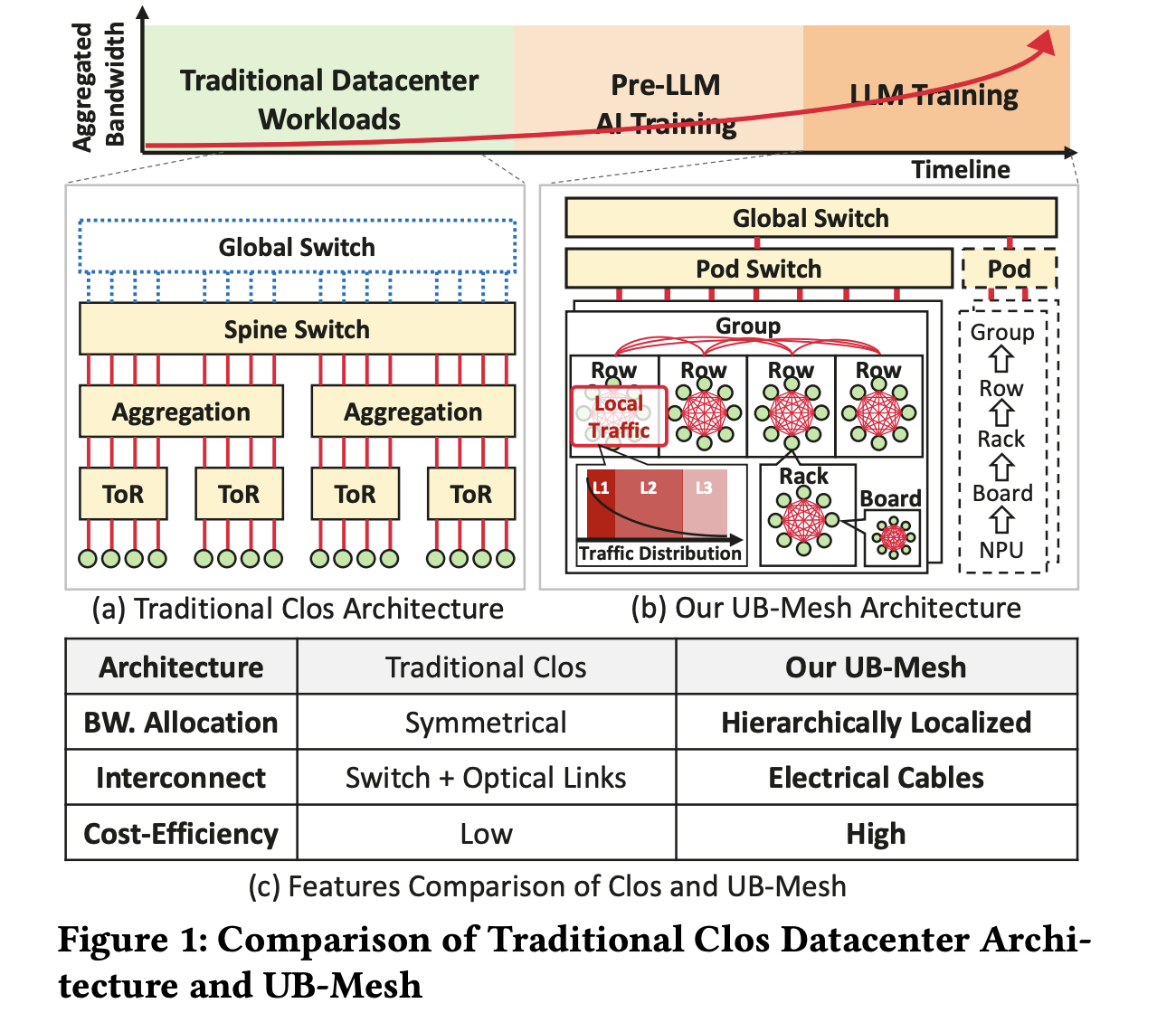

UB-Mesh: A Cost-Efficient, Scalable Network Architecture for Large-Scale LLM Training

As LLMs scale, their computational and bandwidth demands increase significantly, posing challenges for AI training infrastructure. Following scaling laws, LLMs […]

This AI Paper Unveils a Reverse-Engineered Simulator Model for Modern NVIDIA GPUs: Enhancing Microarchitecture Accuracy and Performance Prediction

GPUs are widely recognized for their efficiency in handling high-performance computing workloads, such as those found in artificial intelligence and […]

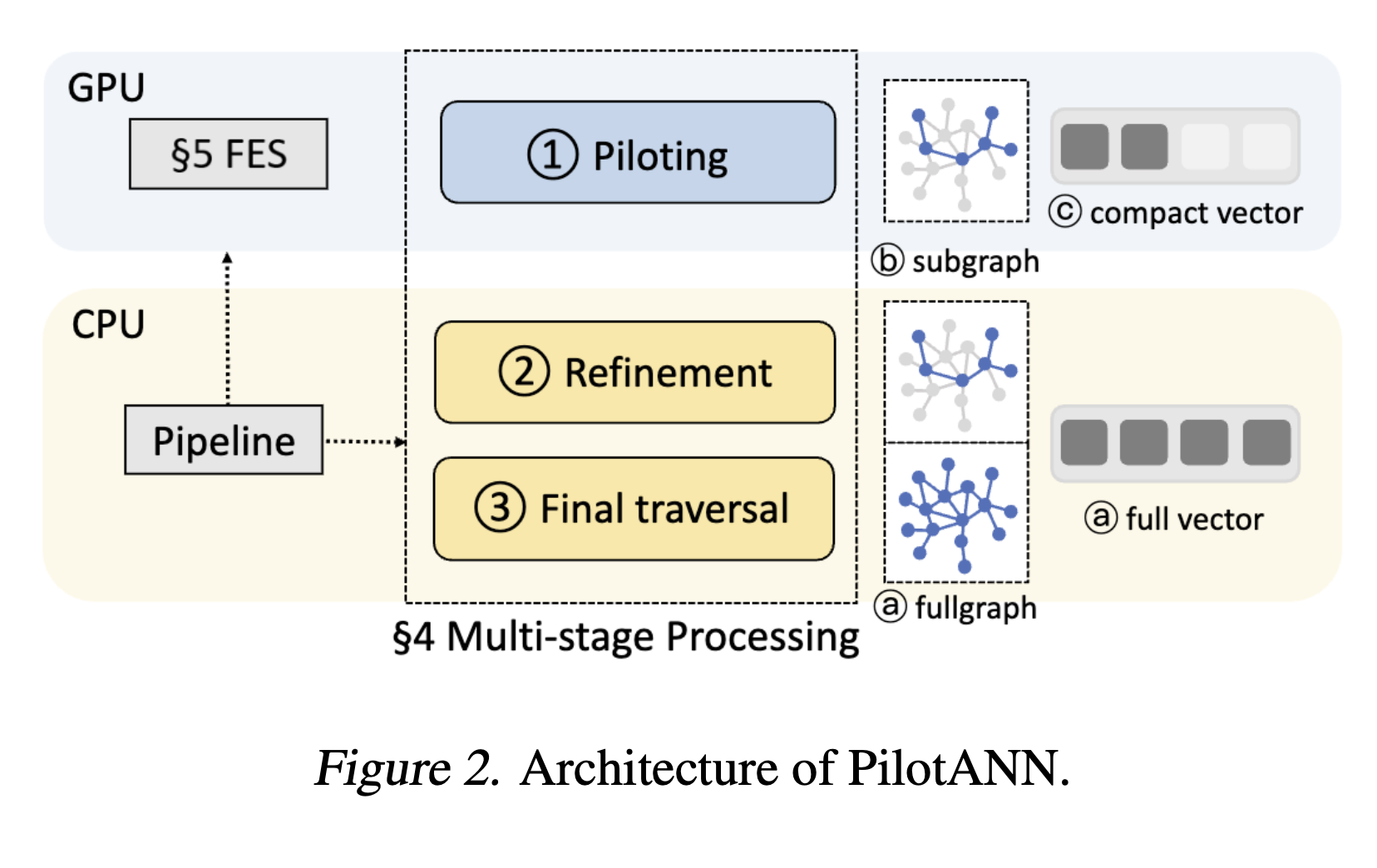

PilotANN: A Hybrid CPU-GPU System For Graph-based ANNS

Approximate Nearest Neighbor Search (ANNS) is a fundamental vector search technique that efficiently identifies similar items in high-dimensional vector spaces. […]