Large Language Models (LLMs) have revolutionized generative AI, showing remarkable capabilities in producing human-like responses. However, these models face a […]

Acer Aspire Vero 16 hands-on: I tried the world’s first oyster shell laptop

Early verdict: The Acer Aspire Vero 16 is a powerful eco-conscious laptop that comes in at an affordable price point. […]

What Are Large Language Models (LLMs)?

Understanding and processing human language has always been a difficult challenge in artificial intelligence. Early AI systems often struggled to […]

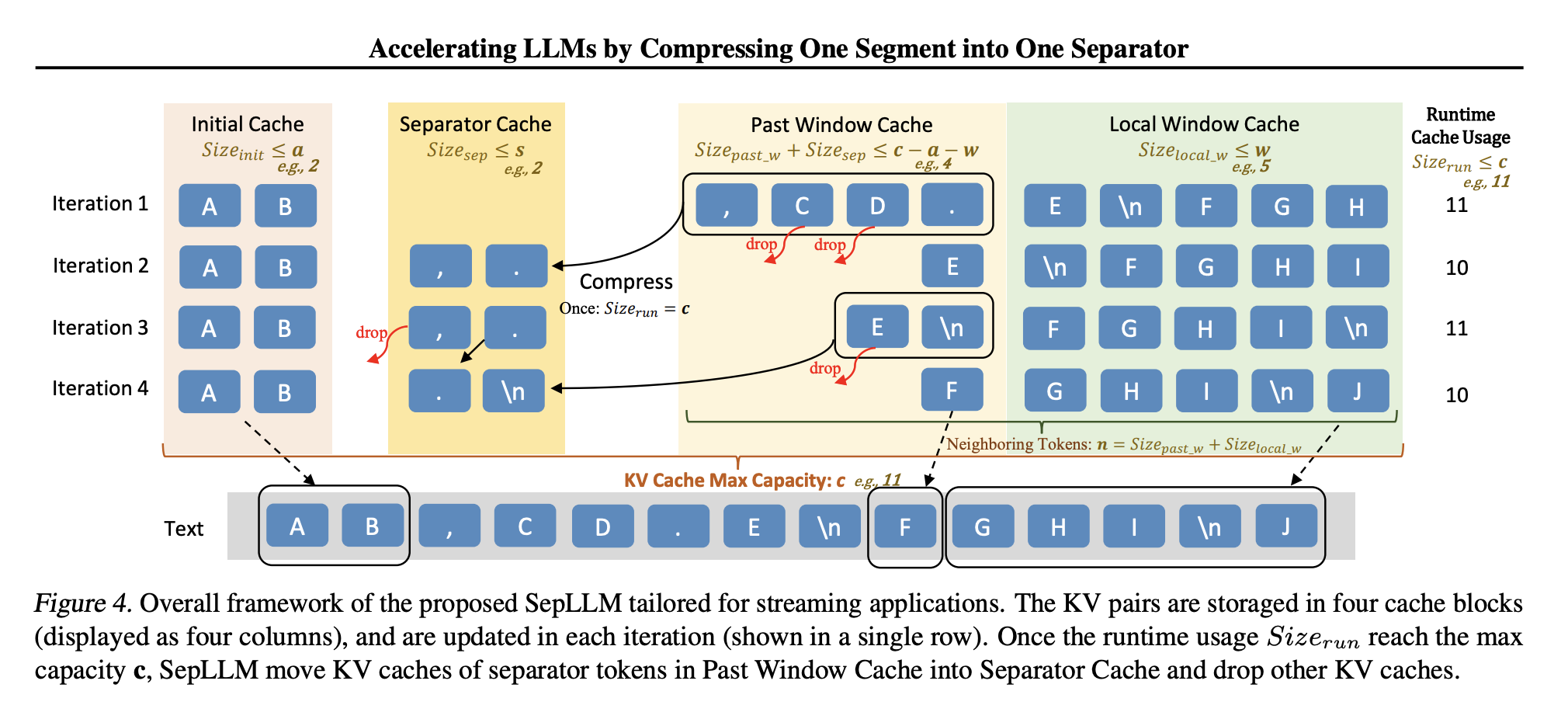

SepLLM: A Practical AI Approach to Efficient Sparse Attention in Large Language Models

Large Language Models (LLMs) have shown remarkable capabilities across diverse natural language processing tasks, from generating text to contextual reasoning. […]

Quordle today – my hints and answers for Sunday, January 12 (game #1084)

Quordle was one of the original Wordle alternatives and is still going strong now more than […]

NYT Strands today – my hints, answers and spangram for Sunday, January 12 (game #315)

Strands is the NYT’s latest word game after the likes of Wordle, Spelling Bee and Connections – and it’s great […]

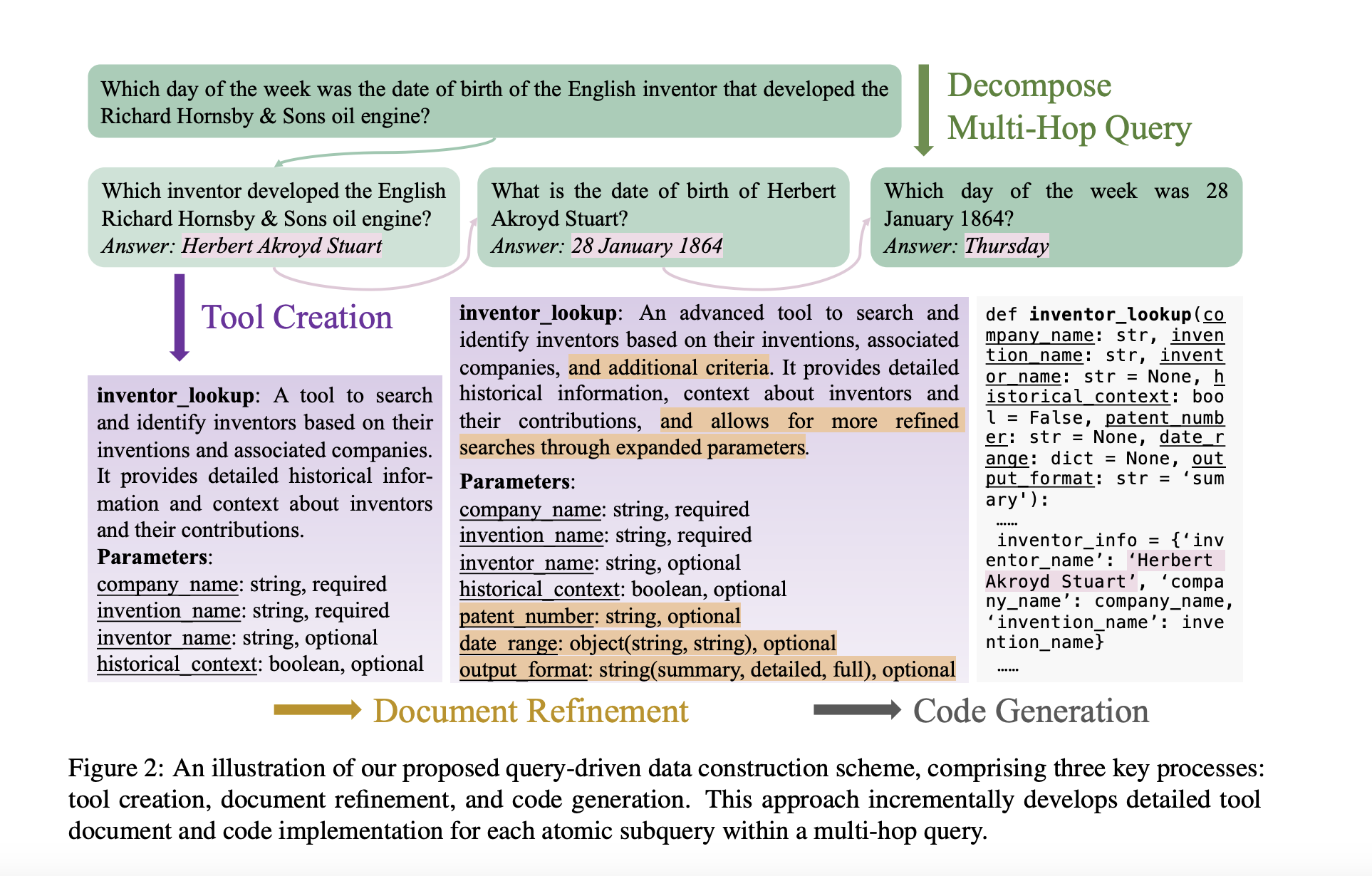

ToolHop: A Novel Dataset Designed to Evaluate LLMs in Multi-Hop Tool Use Scenarios

Multi-hop queries have long been difficult for LLM agents to solve, because answering them requires multiple reasoning steps and information from […]

ProVision: A Scalable Programmatic Approach to Vision-Centric Instruction Data for Multimodal Language Models

The rise of multimodal applications has highlighted the importance of instruction data in training multimodal language models (MLMs) to handle complex image-based queries […]

This AI Paper Explores Embodiment, Grounding, Causality, and Memory: Foundational Principles for Advancing AGI Systems

Artificial General Intelligence (AGI) seeks to create systems that can perform a wide range of tasks, reason, and learn with human-like adaptability. Unlike […]

Cache-Augmented Generation: Leveraging Extended Context Windows in Large Language Models for Retrieval-Free Response Generation

Large language models (LLMs) have recently been enhanced through retrieval-augmented generation (RAG), which dynamically integrates external knowledge sources to improve […]