Large Language Models (LLMs) have shown significant potential in reasoning tasks, using methods like Chain-of-Thought (CoT) to break down complex […]

Category: Technology

Researchers from Tsinghua University Propose ReMoE: A Fully Differentiable MoE Architecture with ReLU Routing

The development of Transformer models has significantly advanced artificial intelligence, delivering remarkable performance across diverse tasks. However, these advancements often […]

NeuralOperator: A New Python Library for Learning Neural Operators in PyTorch

Operator learning is a transformative approach in scientific computing. It focuses on developing models that map functions to other functions, […]

aiXplain Introduces a Multi-AI Agent Autonomous Framework for Optimizing Agentic AI Systems Across Diverse Industries and Applications

Agentic AI systems have revolutionized industries by enabling complex workflows through specialized agents working in collaboration. These systems streamline operations, […]

Hypernetwork Fields: Efficient Gradient-Driven Training for Scalable Neural Network Optimization

Hypernetworks have gained attention for their ability to efficiently adapt large models or train generative models of neural representations. Despite […]

This AI Paper Explores How Formal Systems Could Revolutionize Math LLMs

Formal mathematical reasoning represents a significant frontier in artificial intelligence, addressing fundamental logic, computation, and problem-solving challenges. This field focuses […]

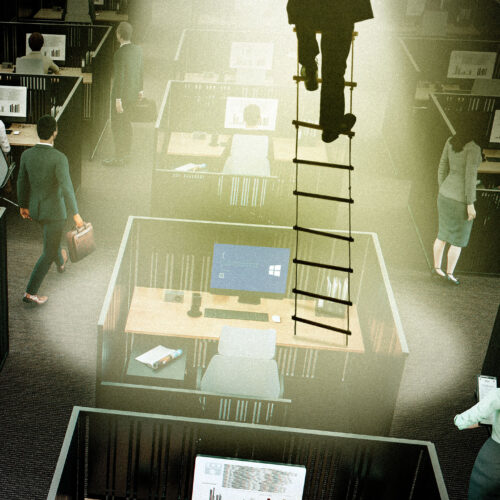

Tech worker movements grow as threats of RTO, AI loom

Advocates say tech worker movements got too big to ignore in 2024. Credit: Aurich Lawson | Getty Images

It feels […]

Collective Monte Carlo Tree Search (CoMCTS): A New Learning-to-Reason Method for Multimodal Large Language Models

In today’s world, multimodal large language models (MLLMs) are advanced systems that process and understand multiple input forms, such as […]

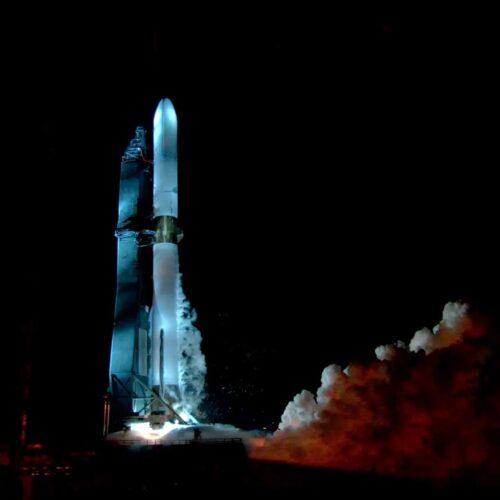

After a 24-second test of its engines, the New Glenn rocket is ready to fly

After a long day of stops and starts that stretched well into the evening, and on what appeared to be […]

YuLan-Mini: A 2.42B Parameter Open Data-efficient Language Model with Long-Context Capabilities and Advanced Training Techniques

Large language models (LLMs) built using transformer architectures heavily depend on pre-training with large-scale data to predict sequential tokens. This […]