Managing datasets effectively has become a pressing challenge as machine learning (ML) continues to grow in scale and complexity. As […]

Category: New Releases

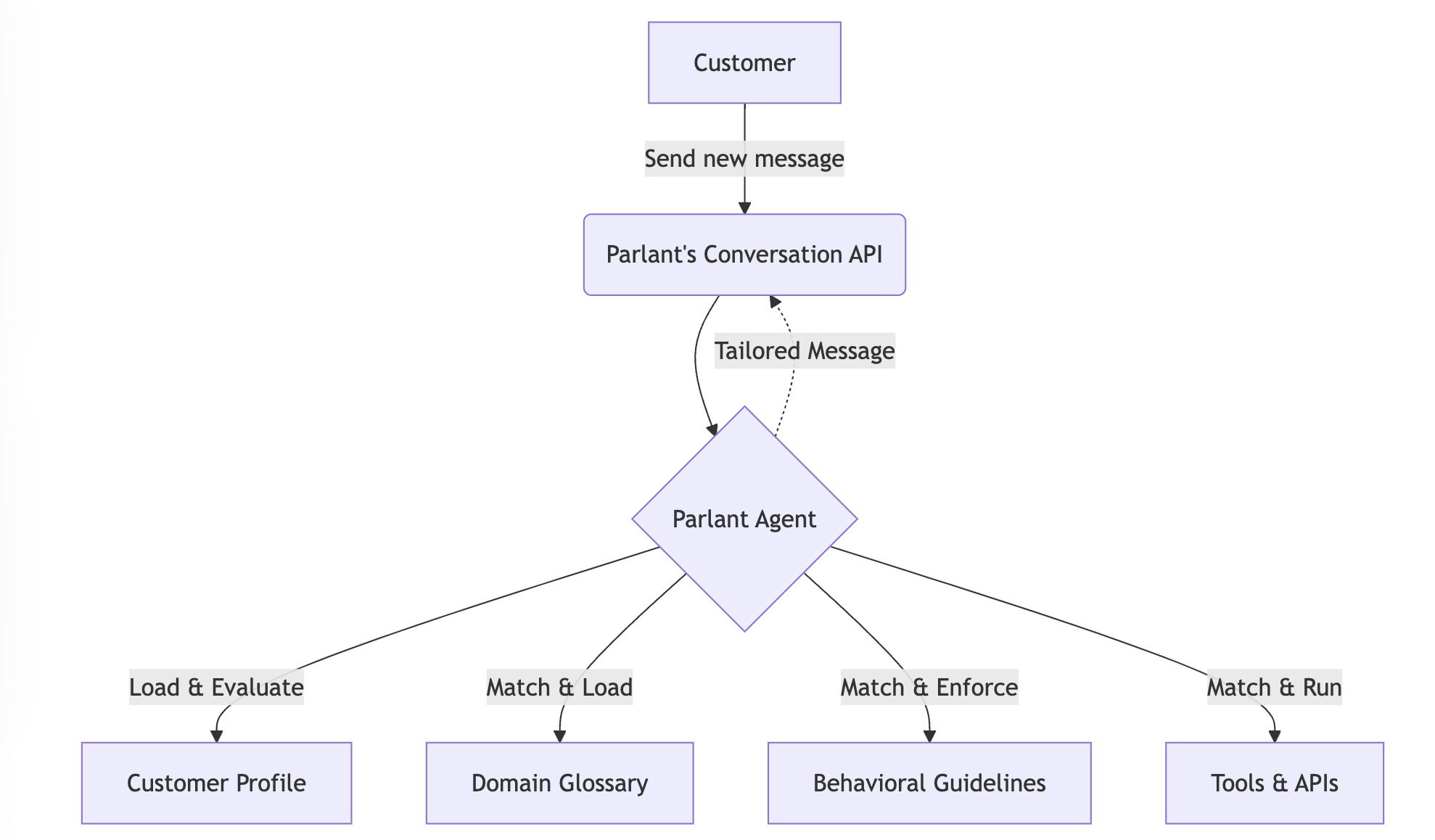

Introducing Parlant: The Open-Source Framework for Reliable AI Agents

The Problem: Why Current AI Agent Approaches Fail. If you have ever designed and implemented an LLM-based chatbot in […]

Meet KaLM-Embedding: A Series of Multilingual Embedding Models Built on Qwen2-0.5B and Released Under MIT

Multilingual applications and cross-lingual tasks are central to natural language processing (NLP) today, making robust embedding models essential. These models […]

AMD Researchers Introduce Agent Laboratory: An Autonomous LLM-based Framework Capable of Completing the Entire Research Process

Scientific research is often constrained by resource limitations and time-intensive processes. Tasks such as hypothesis testing, data analysis, and report […]

Microsoft AI Just Released Phi-4: A Small Language Model Available on Hugging Face Under the MIT License

Microsoft has released Phi-4, a compact and efficient small language model, on Hugging Face under the MIT license. This decision […]

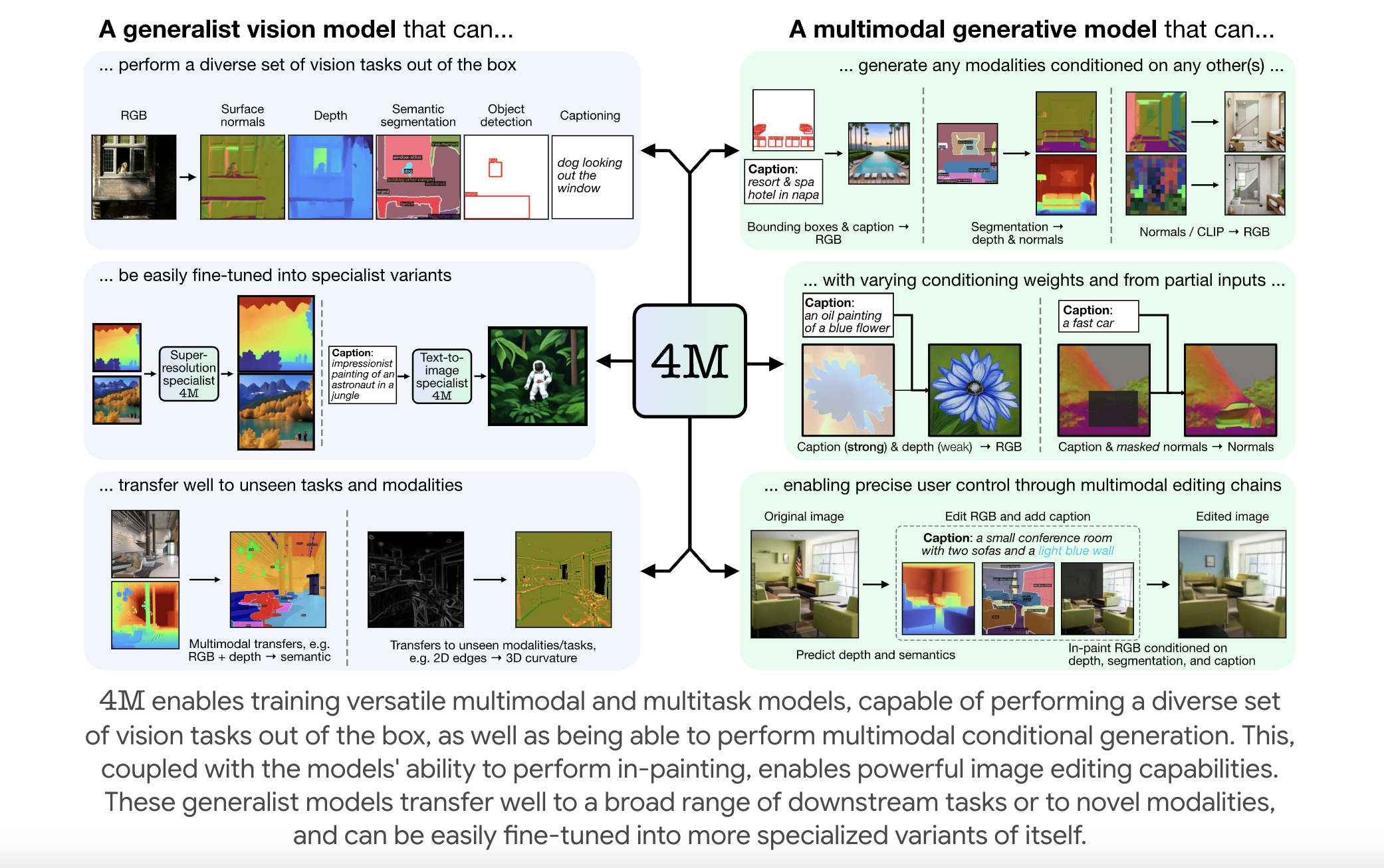

EPFL Researchers Release 4M: An Open-Source Training Framework to Advance Multimodal AI

Multimodal foundation models are becoming increasingly relevant in artificial intelligence, enabling systems to process and integrate multiple forms of data—such […]

Researchers from USC and Prime Intellect Released METAGENE-1: A 7B Parameter Autoregressive Transformer Model Trained on Over 1.5T DNA and RNA Base Pairs

In a time when global health faces persistent threats from emerging pandemics, the need for advanced biosurveillance and pathogen detection […]

Dolphin 3.0 Released (Llama 3.1 + 3.2 + Qwen 2.5): A Local-First, Steerable AI Model that Puts You in Control of Your AI Stack and Alignment

Artificial intelligence has come a long way, transforming the way we work, live, and interact. Yet, challenges remain. Many AI […]

Researchers from NVIDIA, CMU and the University of Washington Released ‘FlashInfer’: A Kernel Library that Provides State-of-the-Art Kernel Implementations for LLM Inference and Serving

Large Language Models (LLMs) have become an integral part of modern AI applications, powering tools like chatbots and code generators. […]

PRIME: An Open-Source Solution for Online Reinforcement Learning with Process Rewards to Advance Reasoning Abilities of Language Models Beyond Imitation or Distillation

Large Language Models (LLMs) face significant scalability limitations in improving their reasoning capabilities through data-driven imitation, as better performance demands […]