The development of multimodal large language models (MLLMs) has brought new opportunities in artificial intelligence. However, significant challenges persist in […]

Category: Editors Pick

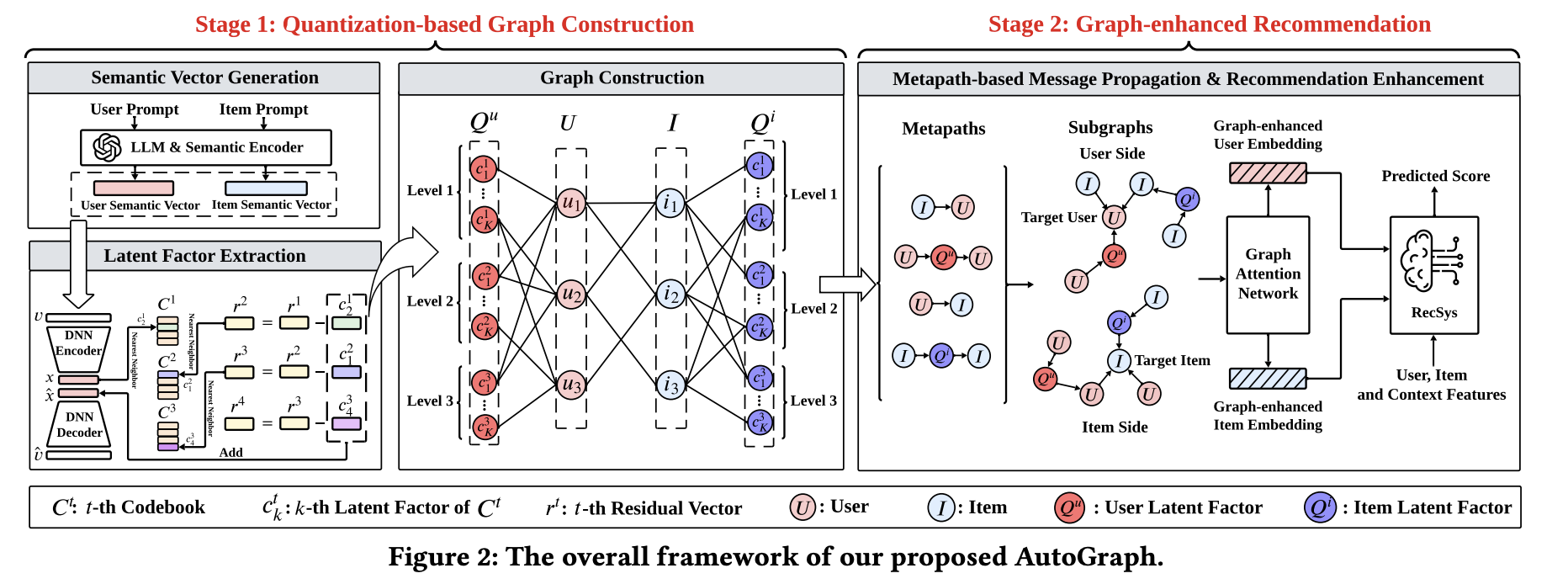

AutoGraph: An Automatic Graph Construction Framework based on LLMs for Recommendation

Using recommendation systems to enhance user experience and boost retention is an effective, ever-evolving strategy in many industries, such […]

Researchers from Salesforce, The University of Tokyo, UCLA, and Northeastern University Propose the Inner Thoughts Framework: A Novel Approach to Proactive AI in Multi-Party Conversations

Conversational AI has come a long way, but one challenge persists: getting systems to engage proactively in a way that […]

Dolphin 3.0 Released (Llama 3.1 + 3.2 + Qwen 2.5): A Local-First, Steerable AI Model that Puts You in Control of Your AI Stack and Alignment

Artificial intelligence has come a long way, transforming the way we work, live, and interact. Yet, challenges remain. Many AI […]

Graph Generative Pre-trained Transformer (G2PT): An Auto-Regressive Model Designed to Learn Graph Structures through Next-Token Prediction

Graph generation is an important task across various fields, including molecular design and social network analysis, due to its ability […]

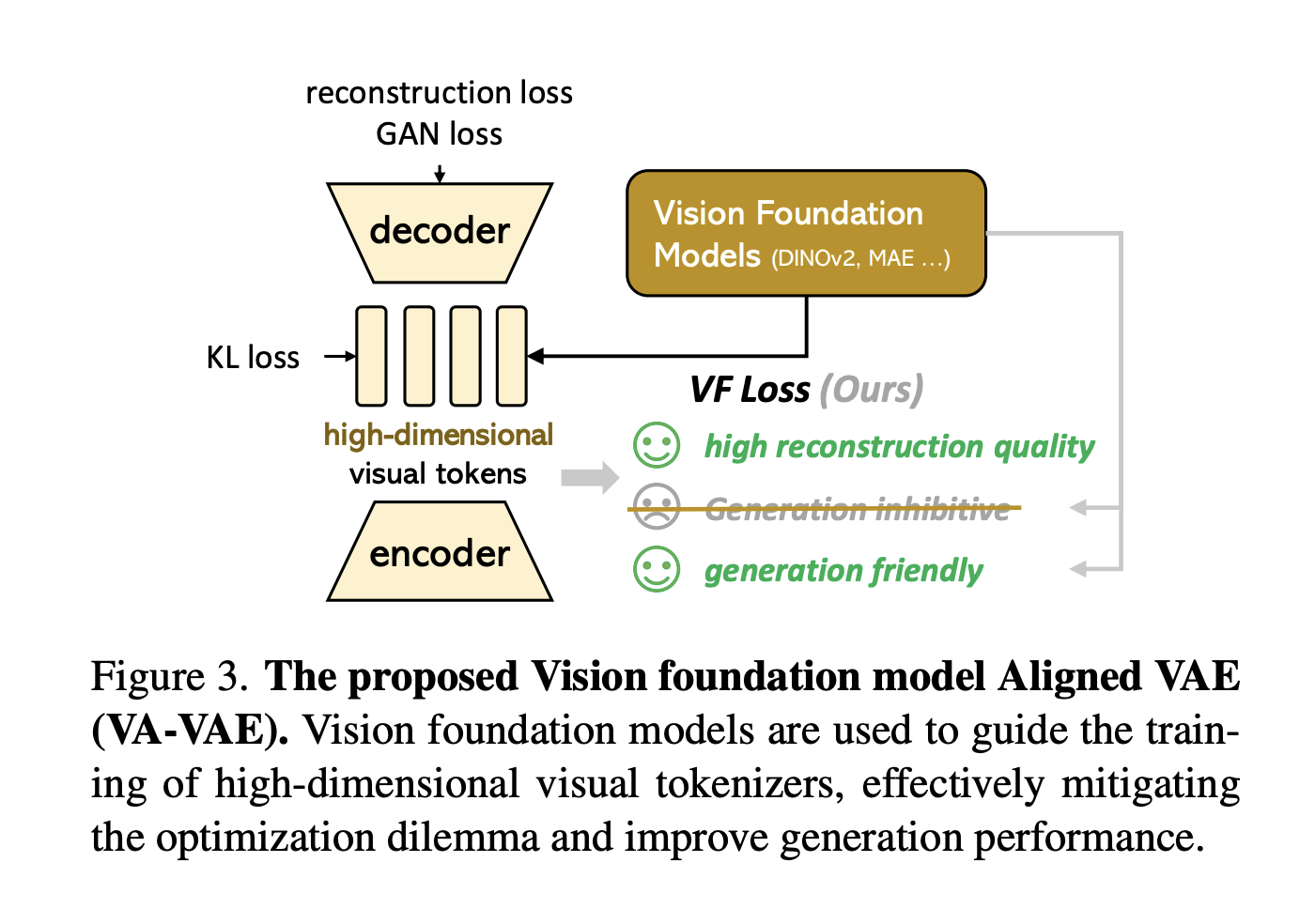

From Latent Spaces to State-of-the-Art: The Journey of LightningDiT

Latent diffusion models generate high-resolution images by compressing visual data into a latent space using visual […]

ScreenSpot-Pro: The First Benchmark Driving Multi-Modal LLMs into High-Resolution Professional GUI-Agent and Computer-Use Environments

GUI agents face three critical challenges in professional environments: (1) the greater complexity of professional applications compared to general-use software, […]

Enhancing Protein Docking with AlphaRED: A Balanced Approach to Protein Complex Prediction

Protein docking, the process of predicting the structure of protein-protein complexes, remains a difficult problem in computational biology. While advances […]

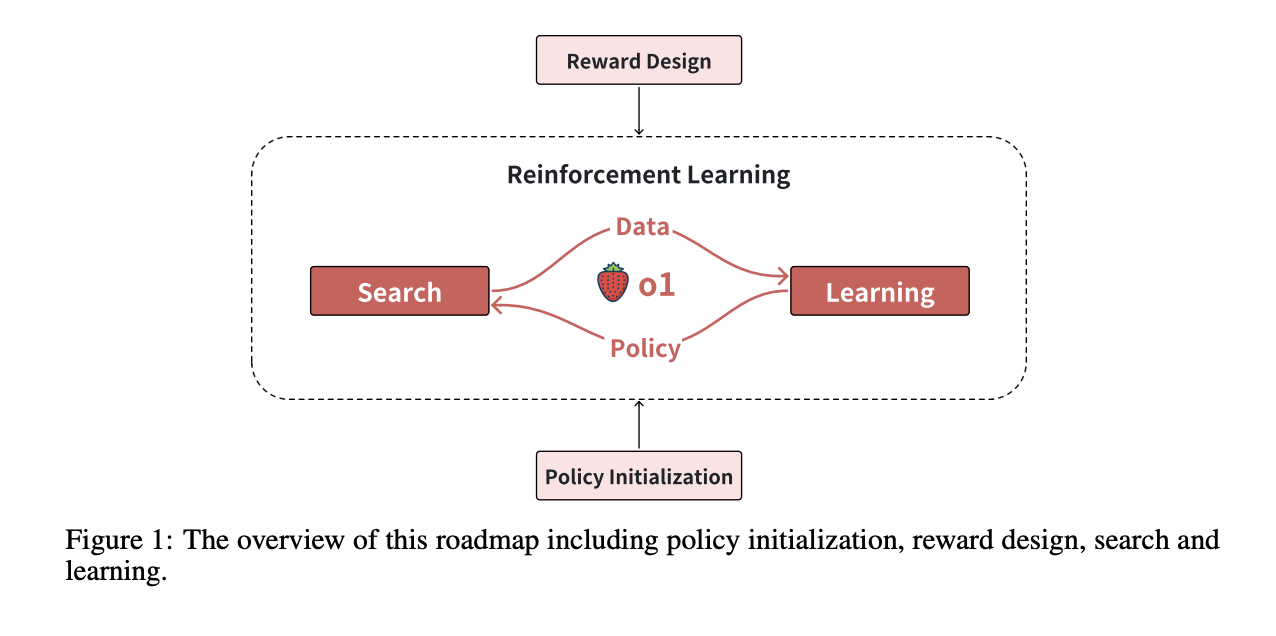

Scaling of Search and Learning: A Roadmap to Reproduce o1 from Reinforcement Learning Perspective

Achieving expert-level performance in complex reasoning tasks is a significant challenge in artificial intelligence (AI). Models like OpenAI’s o1 demonstrate […]

Researchers from NVIDIA, CMU and the University of Washington Released ‘FlashInfer’: A Kernel Library that Provides State-of-the-Art Kernel Implementations for LLM Inference and Serving

Large Language Models (LLMs) have become an integral part of modern AI applications, powering tools like chatbots and code generators. […]