Artificial intelligence research has steadily advanced toward creating systems capable of complex reasoning. Multimodal large language models (MLLMs) represent a […]

Category: AI

AI start-up Anthropic Eyes $2 Billion, $60 Billion Valuation in Latest Funding Round: Report

AI start-up Anthropic is on the verge of securing an additional $2 billion in funding, propelling its valuation to $60 […]

TabTreeFormer: Enhancing Synthetic Tabular Data Generation Through Tree-Based Inductive Biases and Dual-Quantization Tokenization

The generation of synthetic tabular data has become increasingly crucial in fields like healthcare and financial services, where privacy concerns […]

It’s remarkably easy to inject new medical misinformation into LLMs

Changing just 0.001% of inputs to misinformation makes the AI less accurate. It’s pretty easy to see the problem here: […]

How I program with LLMs

The second issue is we can do better. I am happy we now live in a time when programmers write […]

Microsoft AI Just Released Phi-4: A Small Language Model Available on Hugging Face Under the MIT License

Microsoft has released Phi-4, a compact and efficient small language model, on Hugging Face under the MIT license. This decision […]

This AI Paper Introduces Semantic Backpropagation and Gradient Descent: Advanced Methods for Optimizing Language-Based Agentic Systems

Language-based agentic systems represent a breakthrough in artificial intelligence, allowing for the automation of tasks such as question-answering, programming, and […]

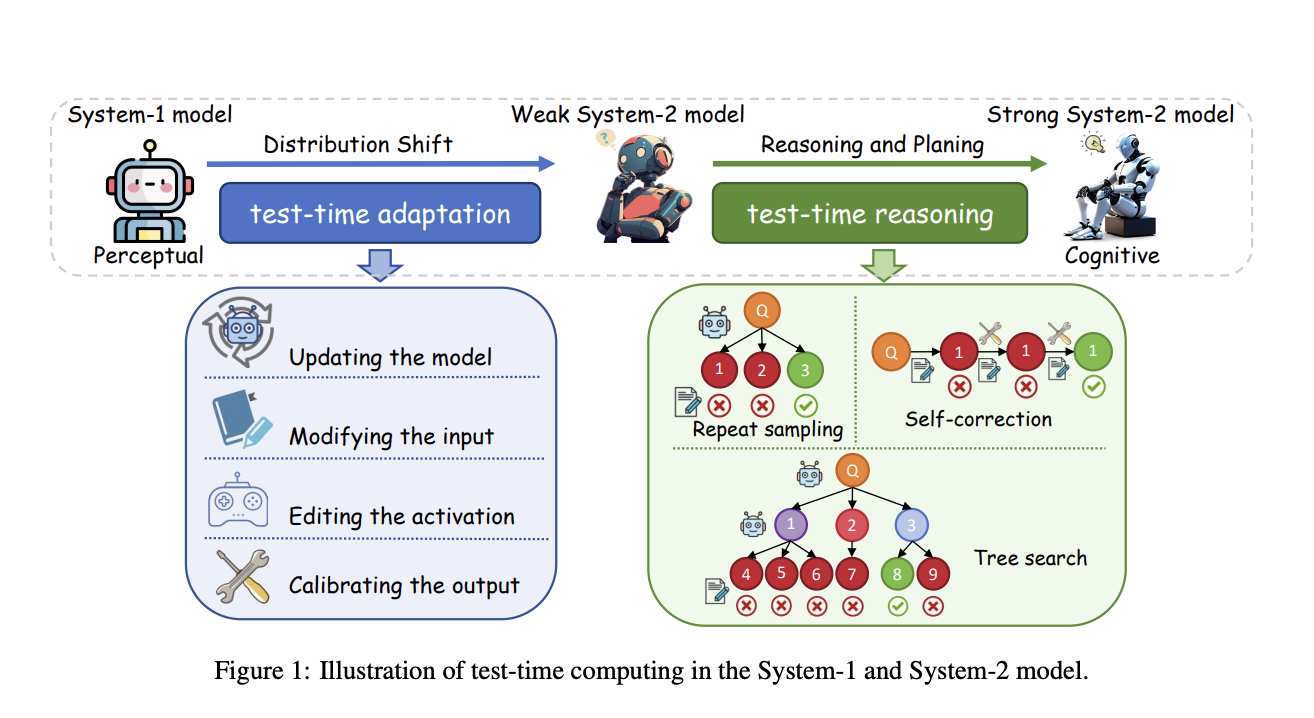

Advancing Test-Time Computing: Scaling System-2 Thinking for Robust and Cognitive AI

The o1 model’s impressive performance in complex reasoning highlights the potential of test-time computing scaling, which enhances System-2 thinking by […]

Researchers from Princeton University Introduce Metadata Conditioning then Cooldown (MeCo) to Simplify and Optimize Language Model Pre-training

The pre-training of language models (LMs) plays a crucial role in enabling their ability to understand and generate text. However, […]

PyG-SSL: An Open-Source Library for Graph Self-Supervised Learning and Compatible with Various Deep Learning and Scientific Computing Backends

Complex domains like social media, molecular biology, and recommendation systems have graph-structured data that consists of nodes, edges, and their […]