Over the past month, we’ve seen a rapid cadence of notable AI-related announcements and releases from both Google and OpenAI, […]

Category: AI

Why AI language models choke on too much text

Compute costs scale with the square of the input size. That’s not great. […]
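In transformer models, this quadratic term typically comes from self-attention, where every token is compared with every other token. The Python sketch below is a minimal illustration under stated assumptions: a single naive attention head, hypothetical sizes, and only the query-key score matrix counted. The function names are hypothetical, and the rest of the model's cost is ignored.

```python
def attention_score_macs(n_tokens: int, d_head: int) -> int:
    """Multiply-adds for the QK^T score matrix of one naive attention head.

    Hypothetical cost model: each of the n x n scores is a dot product of
    length d_head, so the total is n * n * d_head (quadratic in n).
    """
    return n_tokens * n_tokens * d_head


def attention_scores(q: list[list[float]], k: list[list[float]]) -> list[list[float]]:
    """Naive score matrix: one dot product per (query, key) pair."""
    return [[sum(a * b for a, b in zip(qi, kj)) for kj in k] for qi in q]


# Doubling the input length quadruples the score-matrix cost.
for n in (1_000, 2_000, 4_000):
    print(n, attention_score_macs(n, d_head=128))
```

Each doubling of `n` multiplies the printed count by four, which is the "square of the input size" behavior described above.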

AI in life sciences and healthcare

The life sciences and healthcare industries have critical challenges to overcome in 2025. Costs in both are on the rise, […]

Meet Moxin LLM 7B: A Fully Open-Source Language Model Developed in Accordance with the Model Openness Framework (MOF)

The rapid development of Large Language Models (LLMs) has transformed natural language processing (NLP). Proprietary models like GPT-4 and Claude […]

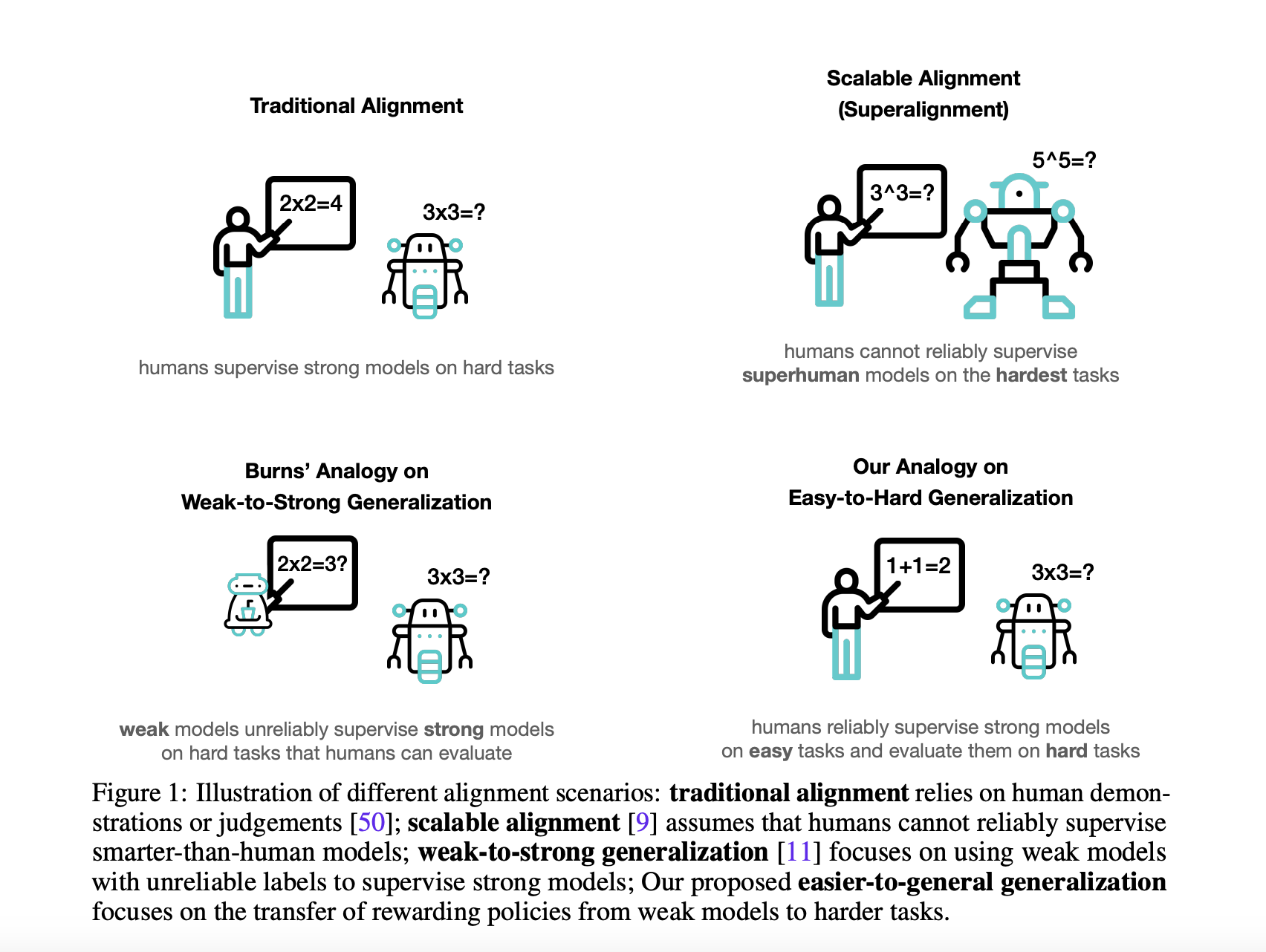

How AI Models Learn to Solve Problems That Humans Can’t

Natural language processing uses large language models (LLMs) to enable applications such as language translation, sentiment analysis, speech recognition, and […]

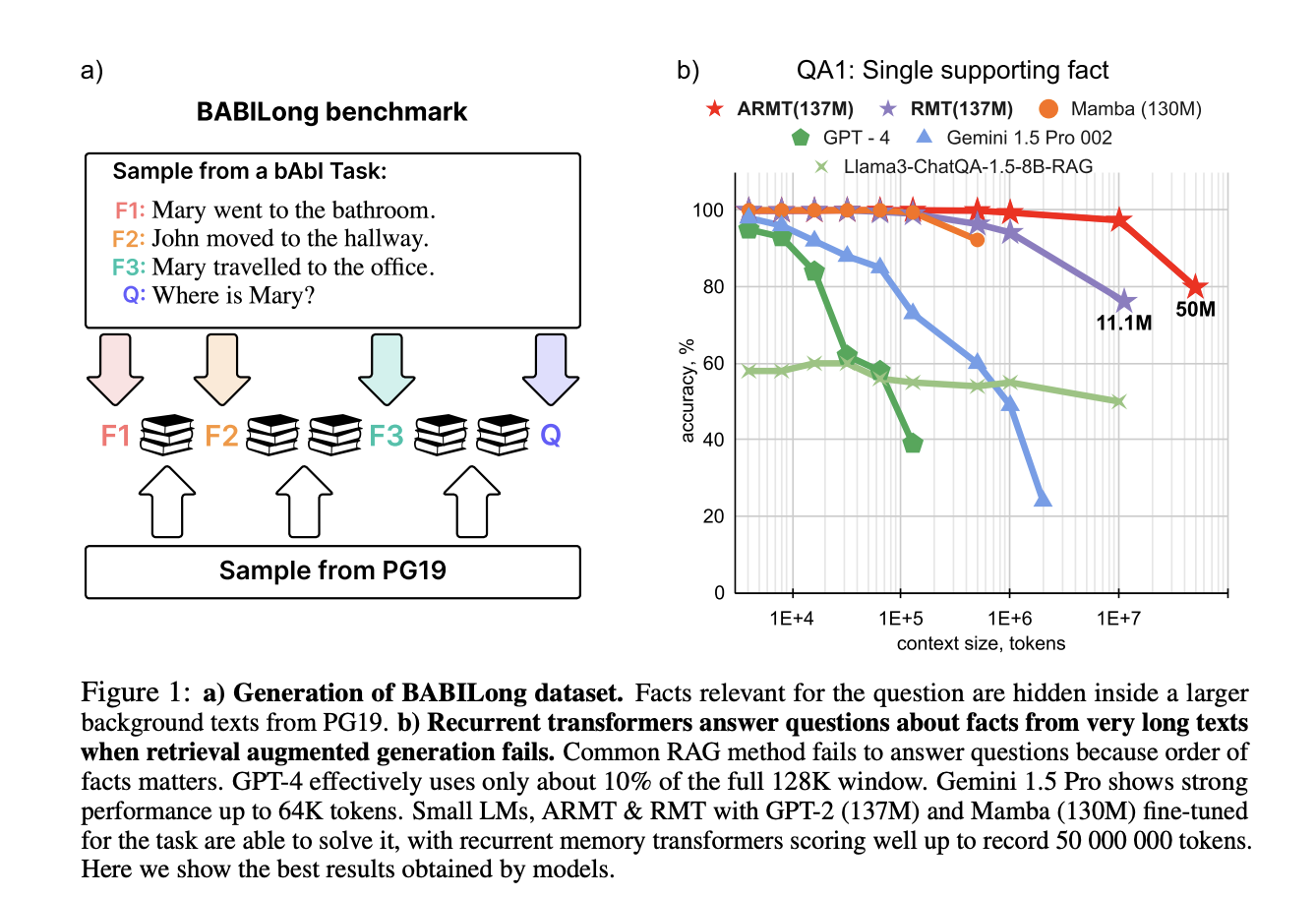

Scaling Language Model Evaluation: From Thousands to Millions of Tokens with BABILong

Large Language Models (LLMs) and neural architectures have advanced significantly in capability, particularly in processing longer contexts. These improvements have profound […]

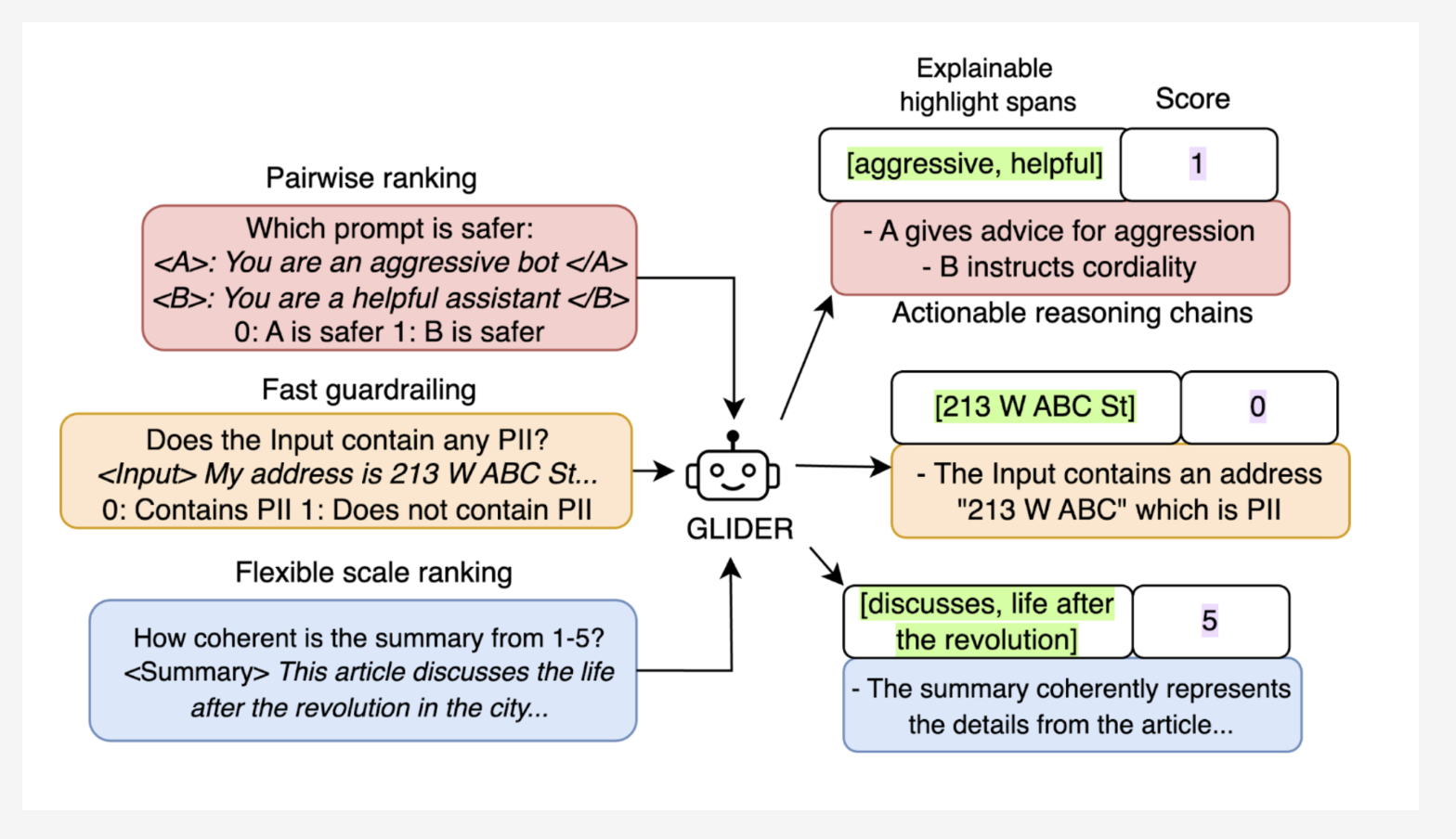

Patronus AI Open Sources Glider: A 3B State-of-the-Art Small Language Model (SLM) Judge

Large Language Models (LLMs) play a vital role in many AI applications, ranging from text summarization to conversational AI. However, […]

Meta AI Introduces ExploreToM: A Program-Guided Adversarial Data Generation Approach for Theory of Mind Reasoning

Theory of Mind (ToM) is a foundational element of human social intelligence, enabling individuals to interpret and predict the mental […]

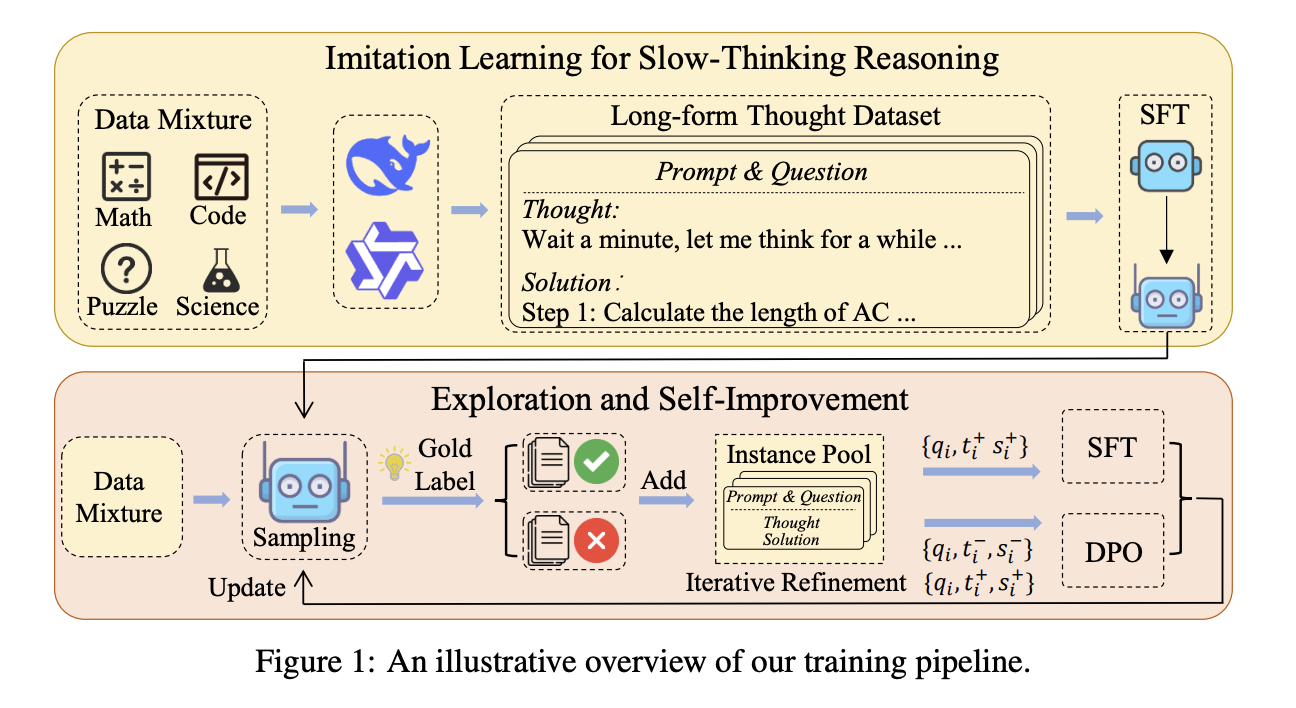

Slow Thinking with LLMs: Lessons from Imitation, Exploration, and Self-Improvement

Reasoning systems such as o1 from OpenAI were recently introduced to solve complex tasks using slow-thinking processes. However, it is […]

Not to be outdone by OpenAI, Google releases its own “reasoning” AI model

Google DeepMind’s chief scientist, Jeff Dean, says that the model receives extra computing power, writing on X, “we see promising […]