Large Language Models (LLMs) face significant challenges in optimizing their post-training methods, particularly in balancing Supervised Fine-Tuning (SFT) and Reinforcement […]

Category: Technology

This AI Paper from Weco AI Introduces AIDE: A Tree-Search-Based AI Agent for Automating Machine Learning Engineering

The development of high-performing machine learning models remains a time-consuming and resource-intensive process. Engineers and researchers spend significant time fine-tuning […]

The Stepford Wives turns 50

Sinister suburbia: Joanna (Katharine Ross) is a housewife and aspiring photographer who moves from Manhattan to the Stepford suburb. Columbia […]

In war against DEI in science, researchers see collateral damage

Senate Republicans flagged thousands of grants as “woke DEI” research. What does that really mean? Senate Commerce Committee Chairman Ted […]

Flashy exotic birds can actually glow in the dark

Found in the forests of Papua New Guinea, Indonesia, and Eastern Australia, birds of paradise are famous for flashy feathers […]

What are AI Agents? Demystifying Autonomous Software with a Human Touch

In today’s digital landscape, technology continues to advance at a steady pace, and one development that has gained growing attention is […]

Moonshot AI and UCLA Researchers Release Moonlight: A 3B/16B-Parameter Mixture-of-Experts (MoE) Model Trained with 5.7T Tokens Using the Muon Optimizer

Training large language models (LLMs) has become central to advancing artificial intelligence, yet it is not without its challenges. As […]

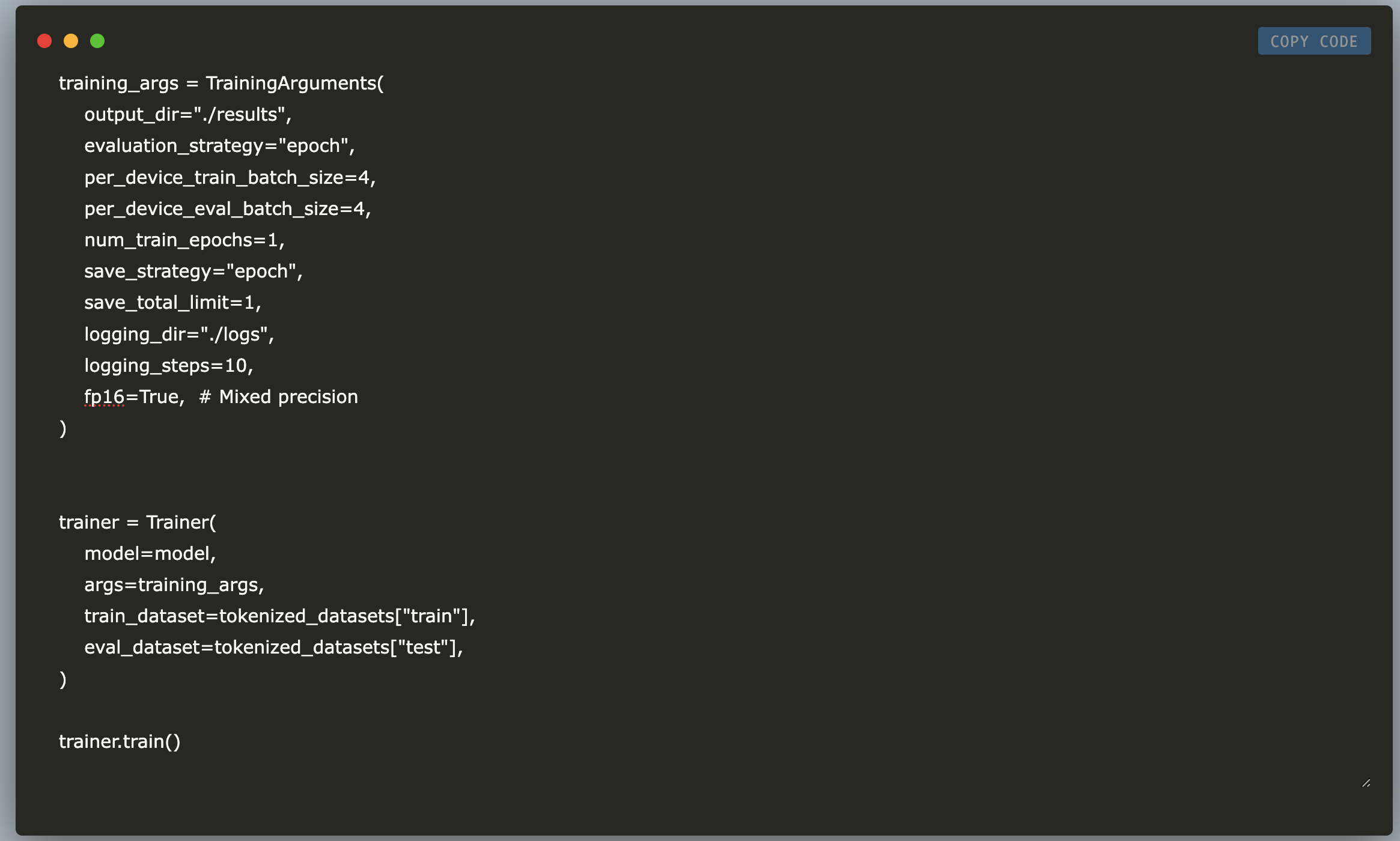

Fine-Tuning NVIDIA NV-Embed-v1 on Amazon Polarity Dataset Using LoRA and PEFT: A Memory-Efficient Approach with Transformers and Hugging Face

In this tutorial, we explore how to fine-tune NVIDIA’s NV-Embed-v1 model on the Amazon Polarity dataset using LoRA (Low-Rank Adaptation) […]

Sony Researchers Propose TalkHier: A Novel AI Framework for LLM-MA Systems that Addresses Key Challenges in Communication and Refinement

LLM-based multi-agent (LLM-MA) systems enable multiple language model agents to collaborate on complex tasks by dividing responsibilities. These systems are […]

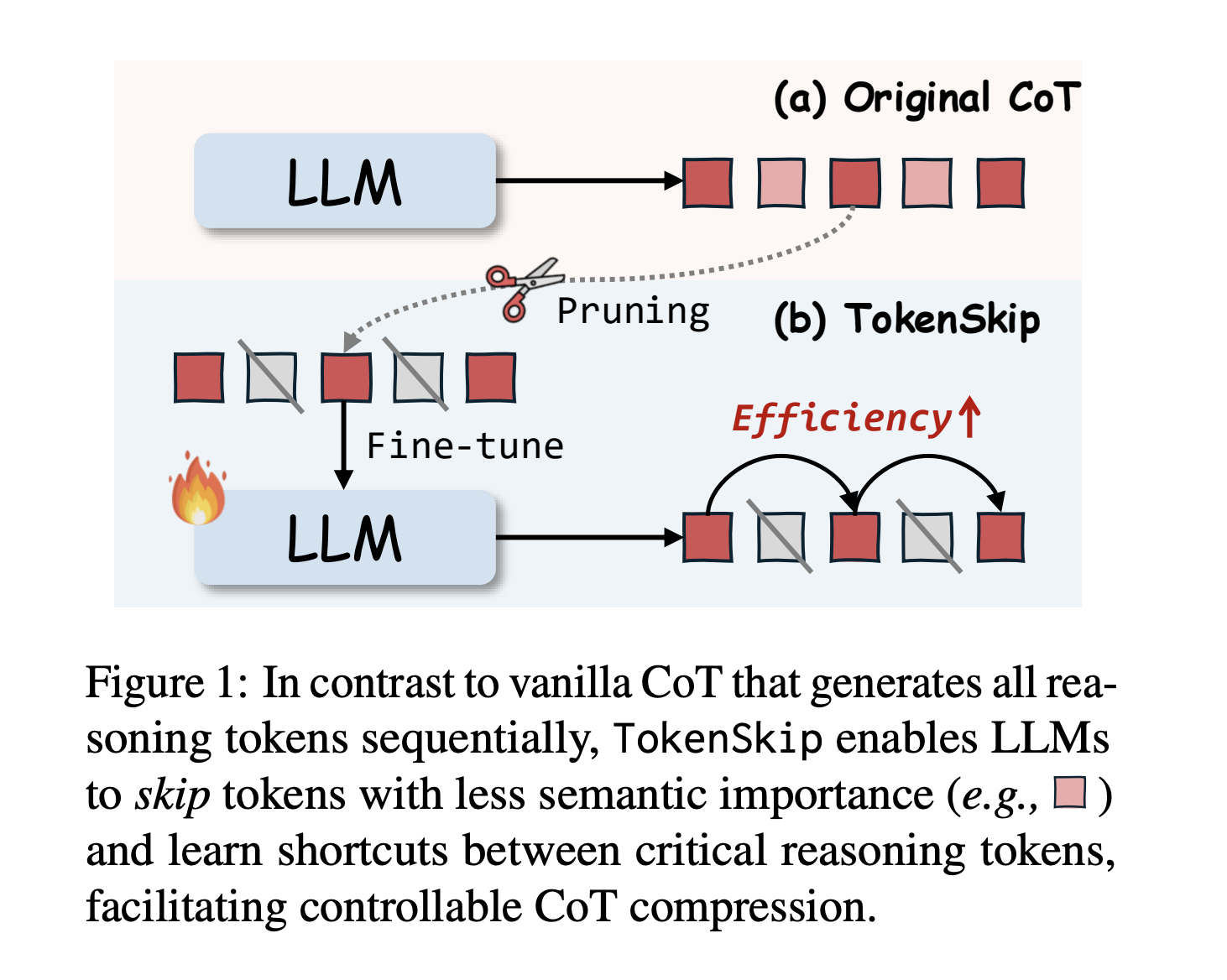

TokenSkip: Optimizing Chain-of-Thought Reasoning in LLMs Through Controllable Token Compression

Large Language Models (LLMs) face significant challenges in complex reasoning tasks, despite the breakthrough advances achieved through Chain-of-Thought (CoT) prompting. […]