Microsoft has released Phi-4, a compact and efficient small language model, on Hugging Face under the MIT license. This decision […]

Category: Editors Pick

This AI Paper Introduces Semantic Backpropagation and Gradient Descent: Advanced Methods for Optimizing Language-Based Agentic Systems

Language-based agentic systems represent a breakthrough in artificial intelligence, allowing for the automation of tasks such as question-answering, programming, and […]

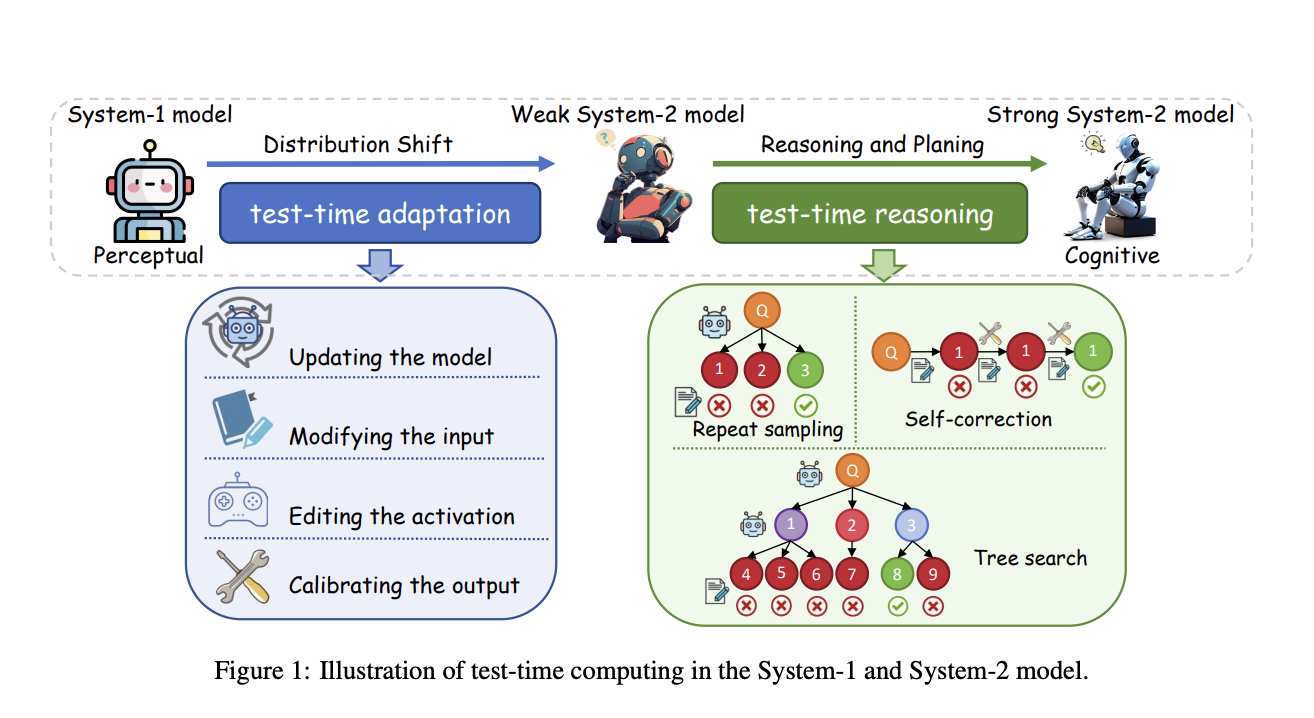

Advancing Test-Time Computing: Scaling System-2 Thinking for Robust and Cognitive AI

The o1 model’s impressive performance in complex reasoning highlights the potential of scaling test-time computing, which enhances System-2 thinking by […]

Researchers from Princeton University Introduce Metadata Conditioning then Cooldown (MeCo) to Simplify and Optimize Language Model Pre-training

The pre-training of language models (LMs) plays a crucial role in enabling their ability to understand and generate text. However, […]

PyG-SSL: An Open-Source Library for Graph Self-Supervised Learning, Compatible with Various Deep Learning and Scientific Computing Backends

Complex domains like social media, molecular biology, and recommendation systems have graph-structured data that consists of nodes, edges, and their […]

DeepMind Research Introduces The FACTS Grounding Leaderboard: Benchmarking LLMs’ Ability to Ground Responses to Long-Form Input

Large language models (LLMs) have revolutionized natural language processing, enabling applications that range from automated writing to complex decision-making aids. […]

Researchers from Caltech, Meta FAIR, and NVIDIA AI Introduce Tensor-GaLore: A Novel Method for Efficient Training of Neural Networks with Higher-Order Tensor Weights

Advancements in neural networks have brought significant changes across domains like natural language processing, computer vision, and scientific computing. Despite […]

HBI V2: A Flexible AI Framework that Elevates Video-Language Learning with a Multivariate Co-Operative Game

Video-Language Representation Learning is a crucial subfield of multi-modal representation learning that focuses on the relationship between videos and their […]

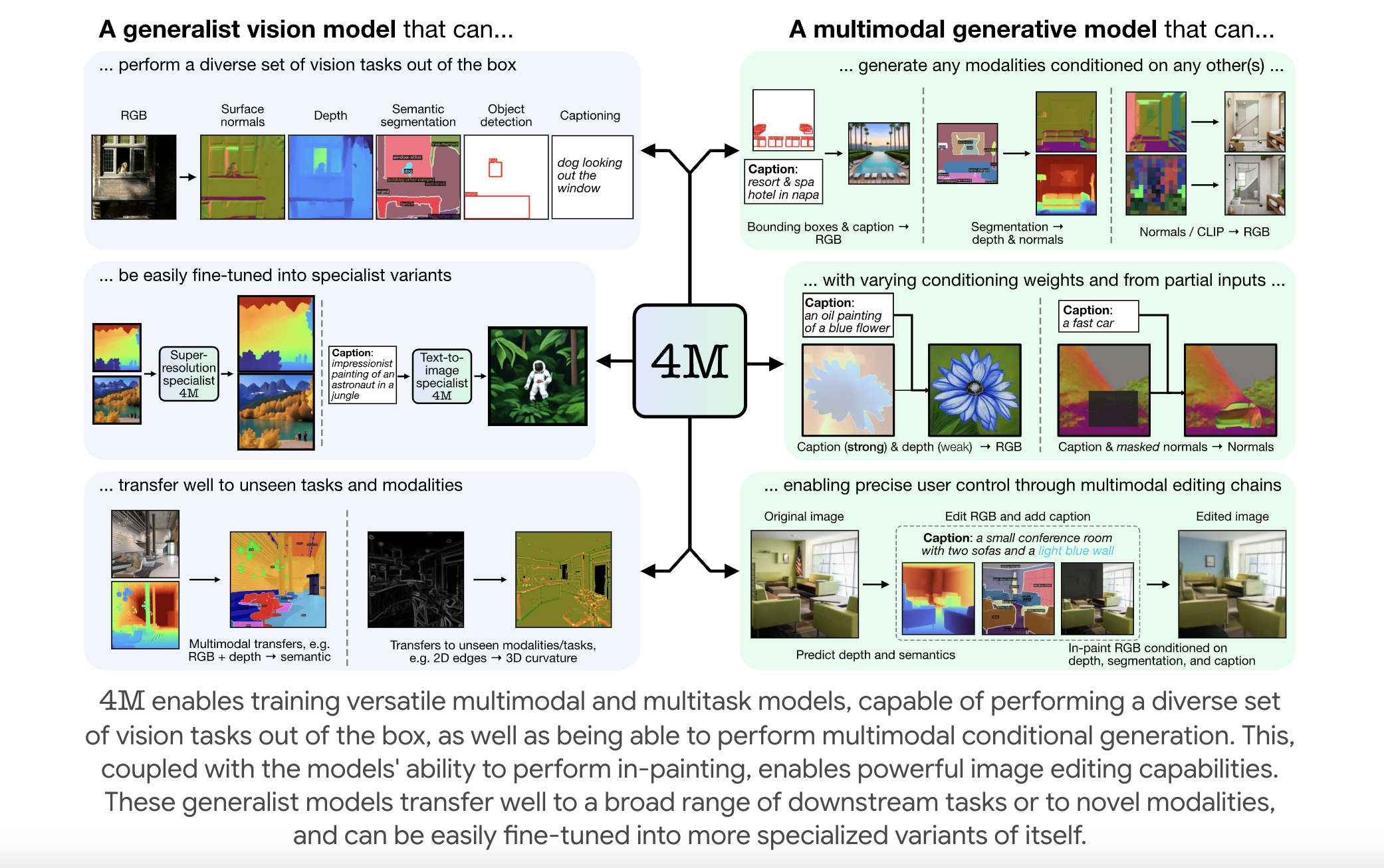

EPFL Researchers Release 4M: An Open-Source Training Framework to Advance Multimodal AI

Multimodal foundation models are becoming increasingly relevant in artificial intelligence, enabling systems to process and integrate multiple forms of data—such […]

Transformer-Based AI Models for Ovarian Lesion Diagnosis: Enhancing Accuracy and Reducing Expert Referral Dependence Across International Centers

Ovarian lesions are frequently detected, often by chance, and managing them is crucial to avoid delayed diagnoses or unnecessary interventions. […]