Optimizing LLMs for Human Alignment Using Reinforcement Learning

Large language models often require a further alignment phase to optimize them […]

Category: Artificial Intelligence

What Is Context Engineering in AI? Techniques, Use Cases, and Why It Matters

Introduction: What is Context Engineering? Context engineering refers to the discipline of designing, organizing, and manipulating the context that is […]

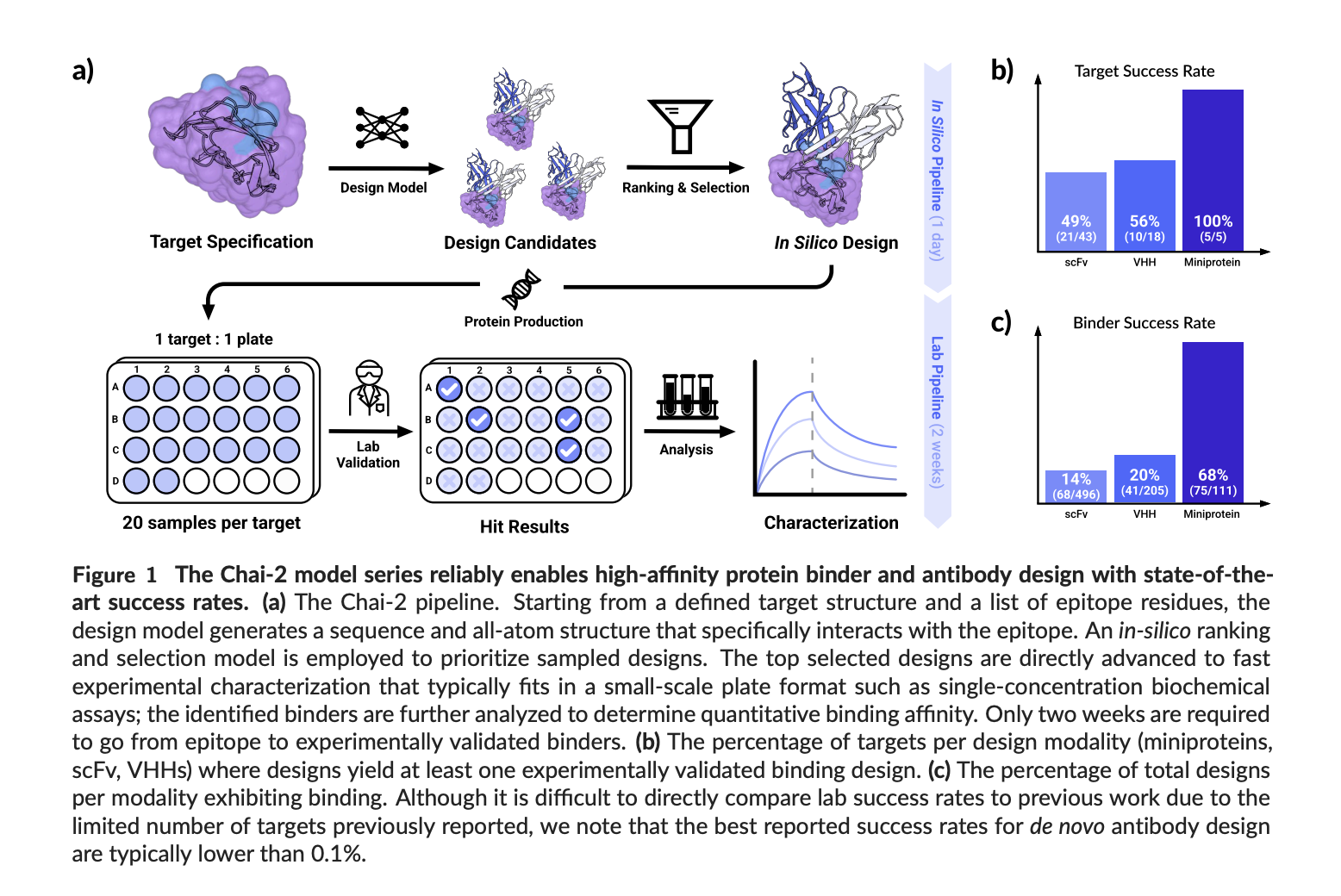

Chai Discovery Team Releases Chai-2: AI Model Achieves 16% Hit Rate in De Novo Antibody Design

TLDR: Chai Discovery Team introduces Chai-2, a multimodal AI model that enables zero-shot de novo antibody design. Achieving a 16% […]

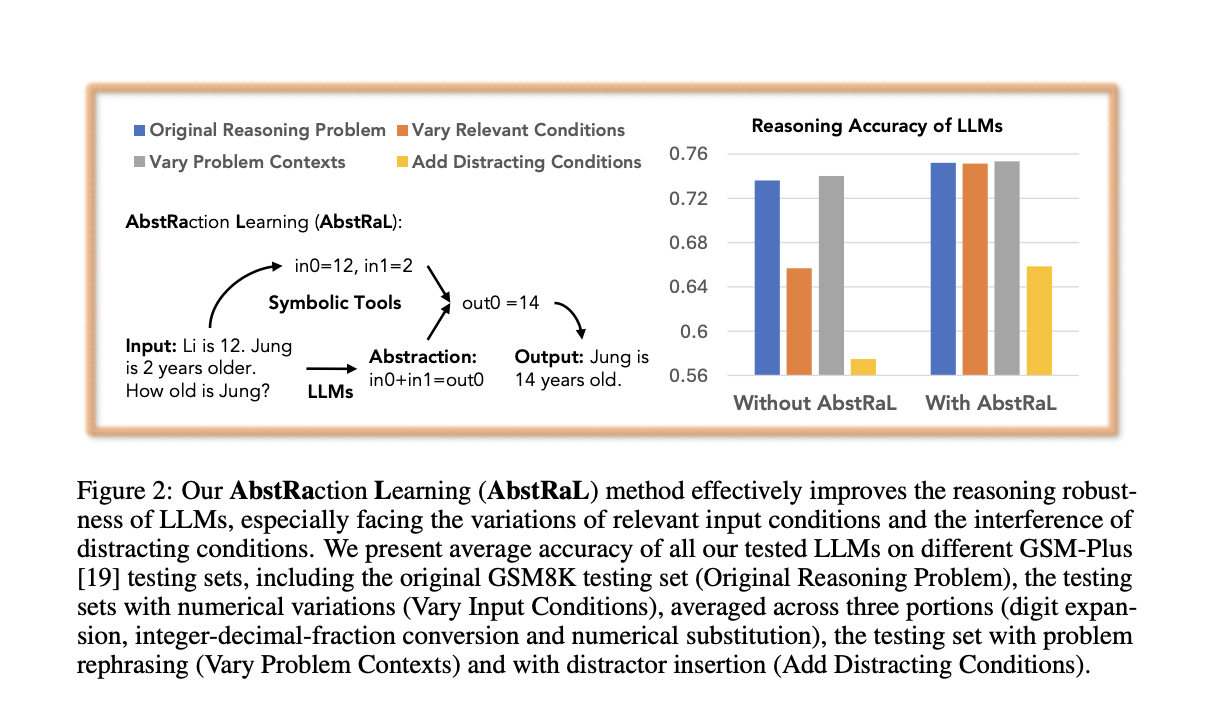

AbstRaL: Teaching LLMs Abstract Reasoning via Reinforcement to Boost Robustness on GSM Benchmarks

Recent research indicates that LLMs, particularly smaller ones, frequently struggle with robust reasoning. They tend to perform well on familiar […]

Kyutai Releases 2B Parameter Streaming Text-to-Speech TTS with 220ms Latency and 2.5M Hours of Training

Kyutai, an open AI research lab, has released a groundbreaking streaming Text-to-Speech (TTS) model with ~2 billion parameters. Designed for […]

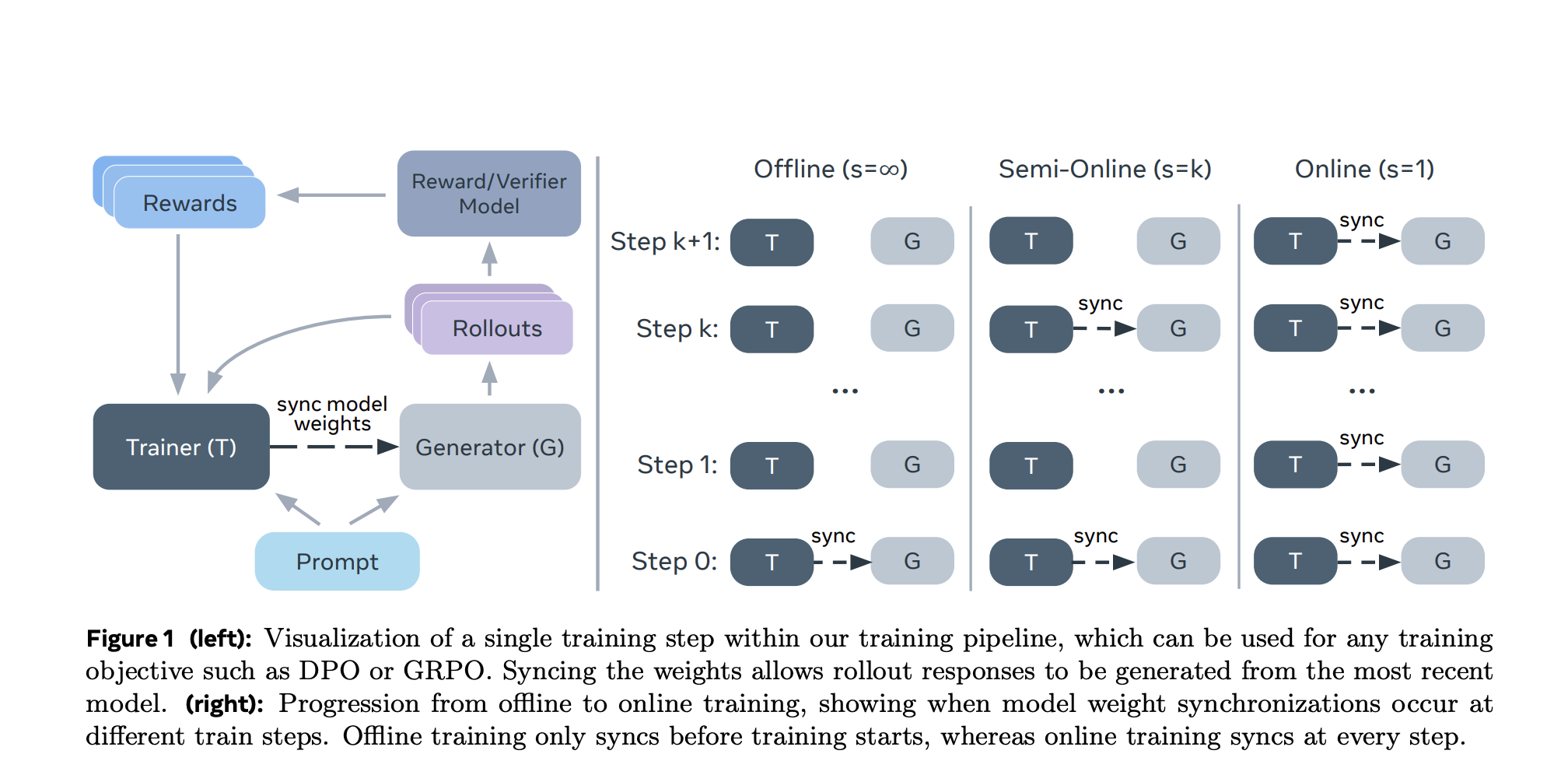

Can We Improve Llama 3’s Reasoning Through Post-Training Alone? ASTRO Shows +16% to +20% Benchmark Gains

Improving the reasoning capabilities of large language models (LLMs) without architectural changes is a core challenge in advancing AI alignment […]

5 simple ChatGPT cheat codes to help you get better answers from AI

The best bit about ChatGPT and other AI chatbots is the tech’s ability to meet your needs […]

rabbit returns: AI gadget maker takes on Sam Altman and Jony Ive in a race for AI device dominance

When it first launched in April last year, reviews of the rabbit r1, the $200 walkie-talkie-like AI […]

Crome: Google DeepMind’s Causal Framework for Robust Reward Modeling in LLM Alignment

Reward models are fundamental components for aligning LLMs with human feedback, yet they remain vulnerable to reward hacking. […]

Thought Anchors: A Machine Learning Framework for Identifying and Measuring Key Reasoning Steps in Large Language Models with Precision

Understanding the Limits of Current Interpretability Tools in LLMs: AI models, such as DeepSeek and GPT variants, rely on billions […]