Post-training Large Language Models (LLMs) for long-horizon agentic tasks—such as software engineering, web browsing, and complex tool use—presents a persistent […]

Category: Applications

Google Introduces TurboQuant: A New Compression Algorithm that Reduces LLM Key-Value Cache Memory by 6x and Delivers Up to 8x Speedup, All with Zero Accuracy Loss

The scaling of Large Language Models (LLMs) is increasingly constrained by memory communication overhead between High-Bandwidth Memory (HBM) and SRAM. […]

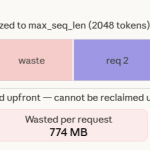

Paged Attention in Large Language Models LLMs

When running LLMs at scale, the real limitation is GPU memory rather than compute, mainly because each request requires a […]

This AI Paper Introduces TinyLoRA, A 13-Parameter Fine-Tuning Method That Reaches 91.8 Percent GSM8K on Qwen2.5-7B

Researchers from FAIR at Meta, Cornell University, and Carnegie Mellon University have demonstrated that large language models (LLMs) can learn […]

Yann LeCun’s New LeWorldModel (LeWM) Research Targets JEPA Collapse in Pixel-Based Predictive World Modeling

World Models (WMs) are a central framework for developing agents that reason and plan in a compact latent space. However, […]

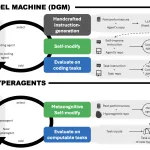

Meta AI’s New Hyperagents Don’t Just Solve Tasks—They Rewrite the Rules of How They Learn

The dream of recursive self-improvement in AI—where a system doesn’t just get better at a task, but gets better at […]

Meet GitAgent: The Docker for AI Agents that is Finally Solving the Fragmentation between LangChain, AutoGen, and Claude Code

The current state of AI agent development is characterized by significant architectural fragmentation. Software devs building autonomous systems must generally […]

Safely Deploying ML Models to Production: Four Controlled Strategies (A/B, Canary, Interleaved, Shadow Testing)

Deploying a new machine learning model to production is one of the most critical stages of the ML lifecycle. Even […]

NVIDIA Releases Nemotron-Cascade 2: An Open 30B MoE with 3B Active Parameters, Delivering Better Reasoning and Strong Agentic Capabilities

NVIDIA has announced the release of Nemotron-Cascade 2, an open-weight 30B Mixture-of-Experts (MoE) model with 3B activated parameters. The model […]

Google Colab Now Has an Open-Source MCP (Model Context Protocol) Server: Use Colab Runtimes with GPUs from Any Local AI Agent

Google has officially released the Colab MCP Server, an implementation of the Model Context Protocol (MCP) that enables AI agents […]