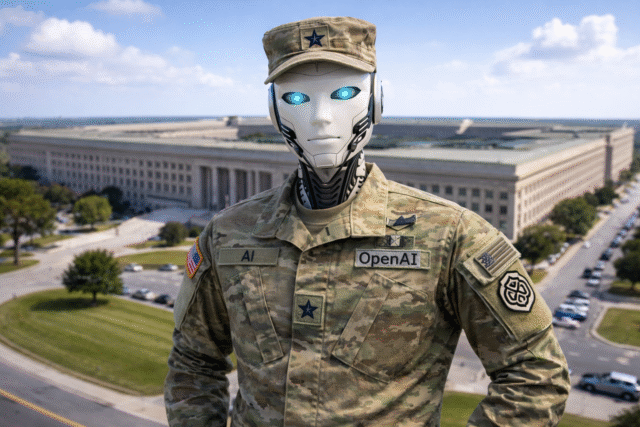

OpenAI has updated its agreement with the Department of War to further restrict how its AI systems can be used in classified environments, particularly with regard to domestic surveillance. The changes come after a public backlash, and now make it clearer that its AI tools cannot be used to monitor U.S. citizens, including via commercially acquired data.

A few days earlier, and with spectacularly bad timing considering what was about to happen with Iran, OpenAI announced it had reached a deal to deploy advanced AI systems in classified settings, with what it described as “firm guardrails.” It outlined red lines, including no use for mass domestic surveillance, no directing autonomous weapons, and no high-stakes automated decisions.

The original agreement relied on cloud-only deployment, OpenAI-controlled safety systems, and contractual language referencing existing U.S. law. It also included provisions stating that AI systems would not independently direct autonomous weapons where human control is required by law or policy.

OpenAI’s strategic retreat

After criticism and questions about how those safeguards would work in practice, CEO Sam Altman said on X that the company has since worked with the Department to amend the contract. The updated language explicitly states that, consistent with applicable laws including the Fourth Amendment, the National Security Act of 1947, and the Foreign Intelligence Surveillance Act of 1978, the AI system shall not be intentionally used for domestic surveillance of U.S. persons and nationals.

It adds, “For the avoidance of doubt, the Department understands this limitation to prohibit deliberate tracking, surveillance, or monitoring of U.S. persons or nationals, including through the procurement or use of commercially acquired personal or identifiable information.”

Altman wrote that protecting civil liberties is critical and said the additional wording was meant to make that point especially clear. He admitted:

The issues are super complex, and demand clear communication. We were genuinely trying to de-escalate things and avoid a much worse outcome, but I think it just looked opportunistic and sloppy. Good learning experience for me as we face higher-stakes decisions in the future.

The government confirmed that OpenAI services will not be used by Department of War intelligence agencies, including the NSA, and that any such use would require a new agreement be drawn up.

Here is a re-post of an internal post:

We have been working with the DoW to make some additions in our agreement to make our principles very clear.

1. We are going to amend our deal to add this language, in addition to everything else:

“• Consistent with applicable laws,…

— Sam Altman (@sama) March 3, 2026

OpenAI stresses that it retains control over its safety stack and will keep cleared engineers and alignment researchers involved in deployments. It also said it would rather terminate the contract than accept uses that violate its principles.

The Department said it plans to convene a working group with frontier AI labs, cloud providers, and policy officials to discuss emerging AI capabilities and privacy concerns, and OpenAI will participate in it.
