Supporters of Chromium-Based Browsers sounds like a very niche local meetup, one with hats and T-shirts that barely fit the […]

Category: Open Source

Microsoft AI Just Released Phi-4: A Small Language Model Available on Hugging Face Under the MIT License

Microsoft has released Phi-4, a compact and efficient small language model, on Hugging Face under the MIT license. This decision […]

Security platform adopts OpenAPI standards

Exabeam’s cloud-native New-Scale Security Operations Platform has become the first security operations platform compatible with the OpenAPI Specification (OAS). This […]

Researchers from USC and Prime Intellect Released METAGENE-1: A 7B Parameter Autoregressive Transformer Model Trained on Over 1.5T DNA and RNA Base Pairs

In a time when global health faces persistent threats from emerging pandemics, the need for advanced biosurveillance and pathogen detection […]

Dolphin 3.0 Released (Llama 3.1 + 3.2 + Qwen 2.5): A Local-First, Steerable AI Model that Puts You in Control of Your AI Stack and Alignment

Artificial intelligence has come a long way, transforming the way we work, live, and interact. Yet, challenges remain. Many AI […]

PRIME: An Open-Source Solution for Online Reinforcement Learning with Process Rewards to Advance Reasoning Abilities of Language Models Beyond Imitation or Distillation

Large Language Models (LLMs) face significant scalability limitations in improving their reasoning capabilities through data-driven imitation, as better performance demands […]

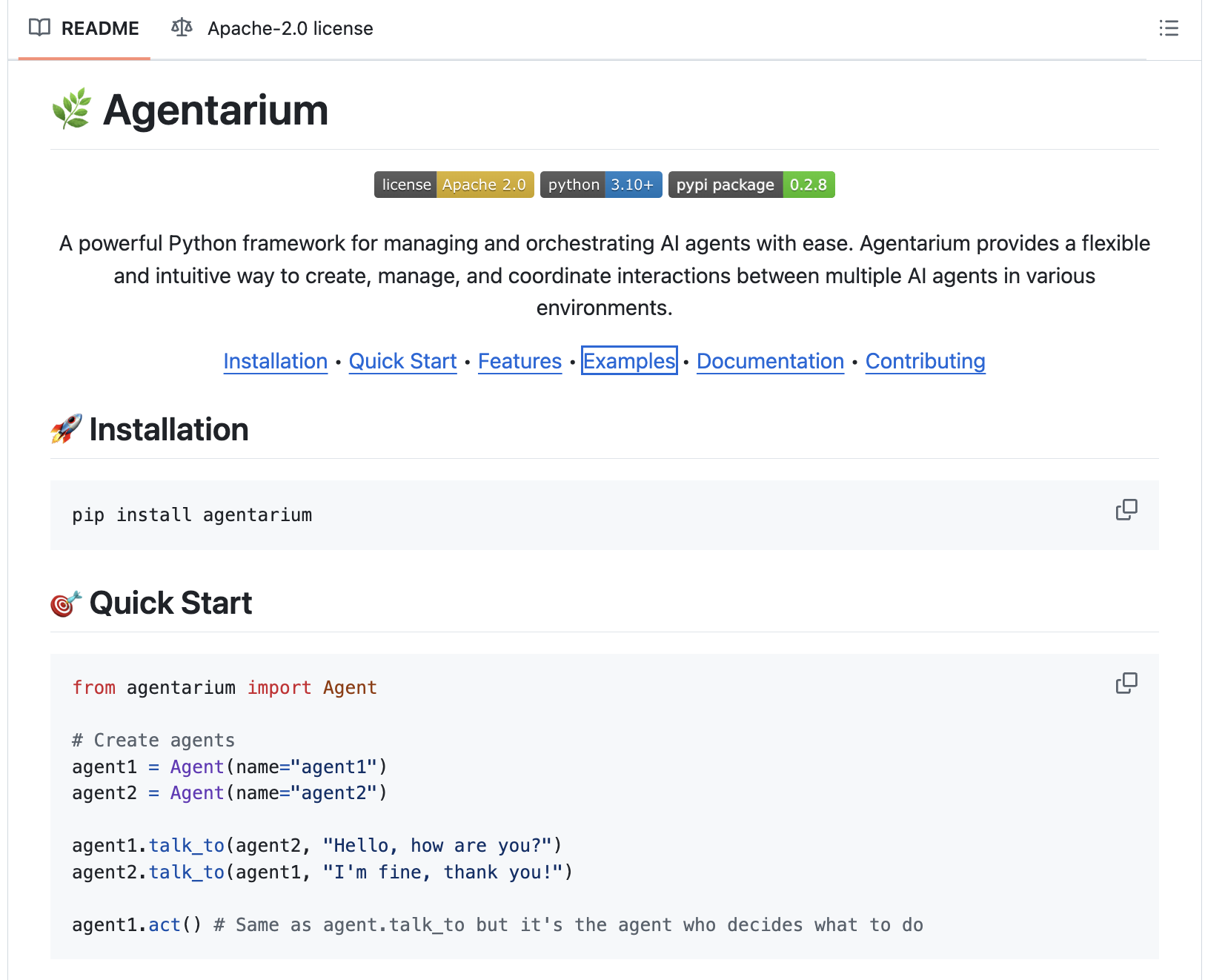

Meet Agentarium: A Powerful Python Framework for Managing and Orchestrating AI Agents

AI agents have become an integral part of modern industries, automating tasks and simulating complex systems. Despite their potential, managing […]

Hugging Face Just Released SmolAgents: A Smol Library that Enables You to Run Powerful AI Agents in a Few Lines of Code

Creating intelligent agents has traditionally been a complex task, often requiring significant technical expertise and time. Developers encounter challenges like […]

Meet SemiKong: The World’s First Open-Source Semiconductor-Focused LLM

The semiconductor industry enables advancements in consumer electronics, automotive systems, and cutting-edge computing technologies. The production of semiconductors involves sophisticated […]

DeepSeek-AI Just Released DeepSeek-V3: A Strong Mixture-of-Experts (MoE) Language Model with 671B Total Parameters and 37B Activated for Each Token

The field of Natural Language Processing (NLP) has made significant strides with the development of large-scale language models (LLMs). However, […]