The rapid development of Large Language Models (LLMs) has transformed natural language processing (NLP). Proprietary models like GPT-4 and Claude […]

Category: Editors Pick

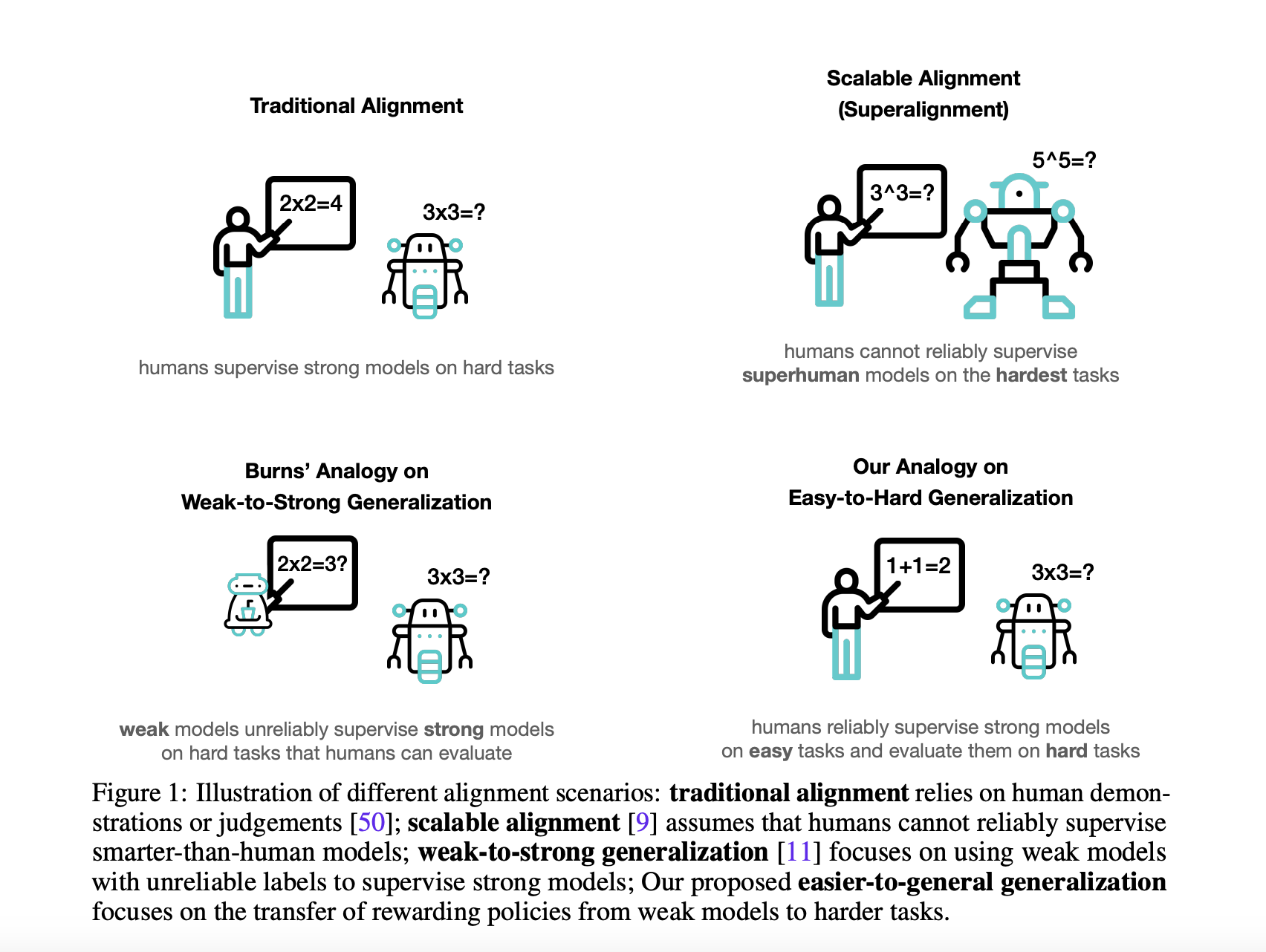

How AI Models Learn to Solve Problems That Humans Can’t

Natural language processing uses large language models (LLMs) to enable applications such as language translation, sentiment analysis, speech recognition, and […]

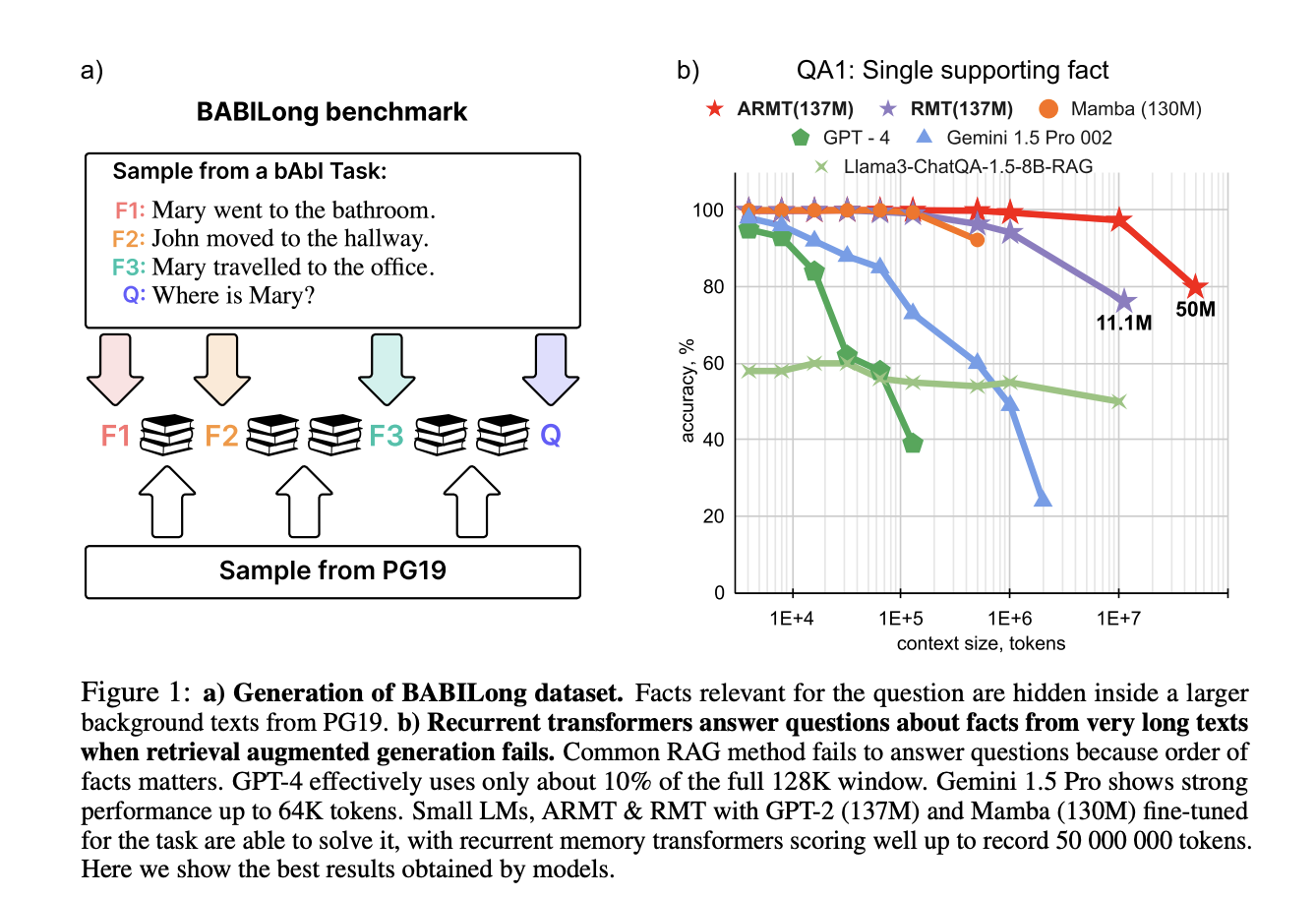

Scaling Language Model Evaluation: From Thousands to Millions of Tokens with BABILong

Large Language Models (LLMs) and neural architectures have significantly advanced capabilities, particularly in processing longer contexts. These improvements have profound […]

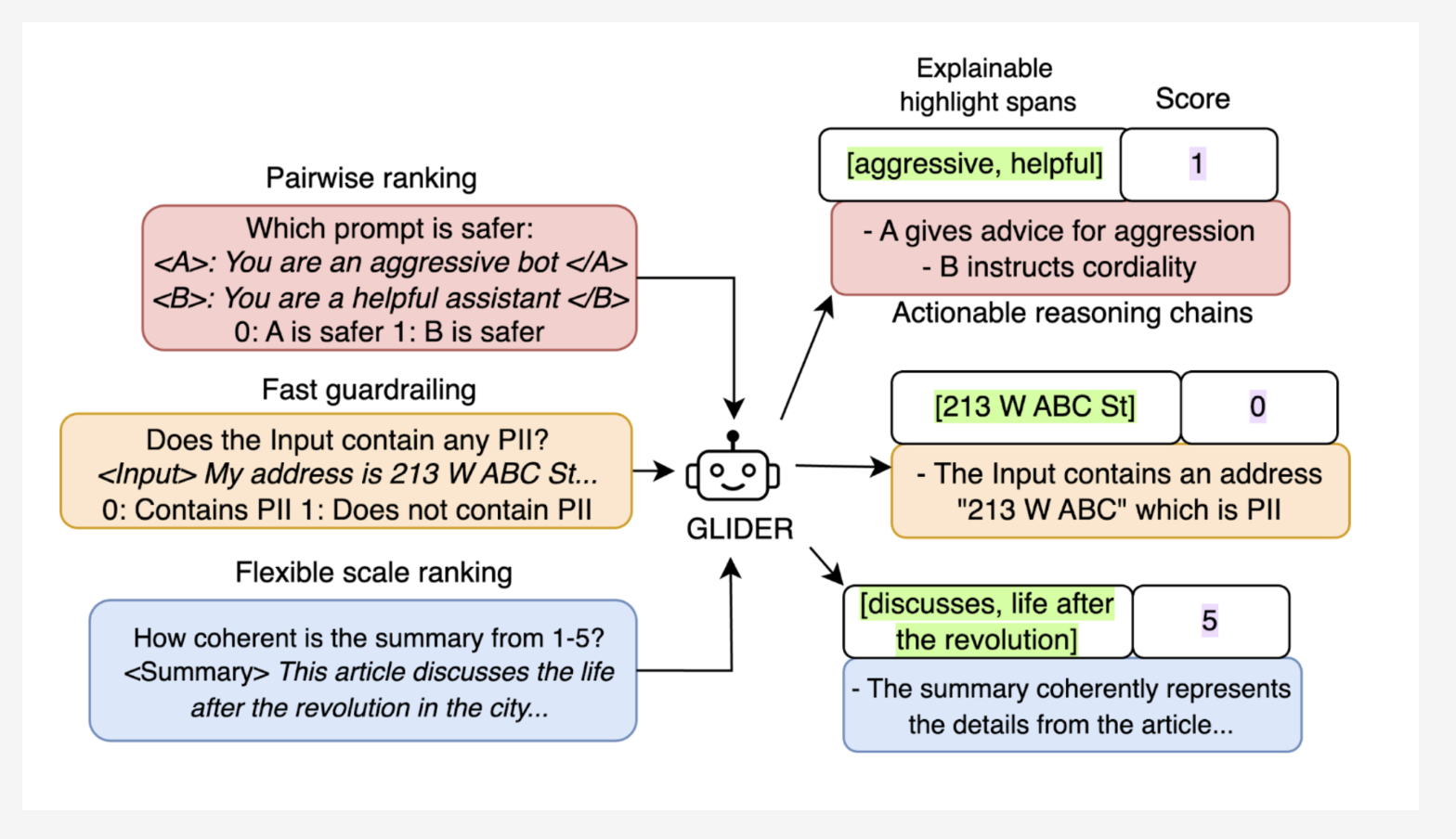

Patronus AI Open Sources Glider: A 3B State-of-the-Art Small Language Model (SLM) Judge

Large Language Models (LLMs) play a vital role in many AI applications, ranging from text summarization to conversational AI. However, […]

Meta AI Introduces ExploreToM: A Program-Guided Adversarial Data Generation Approach for Theory of Mind Reasoning

Theory of Mind (ToM) is a foundational element of human social intelligence, enabling individuals to interpret and predict the mental […]

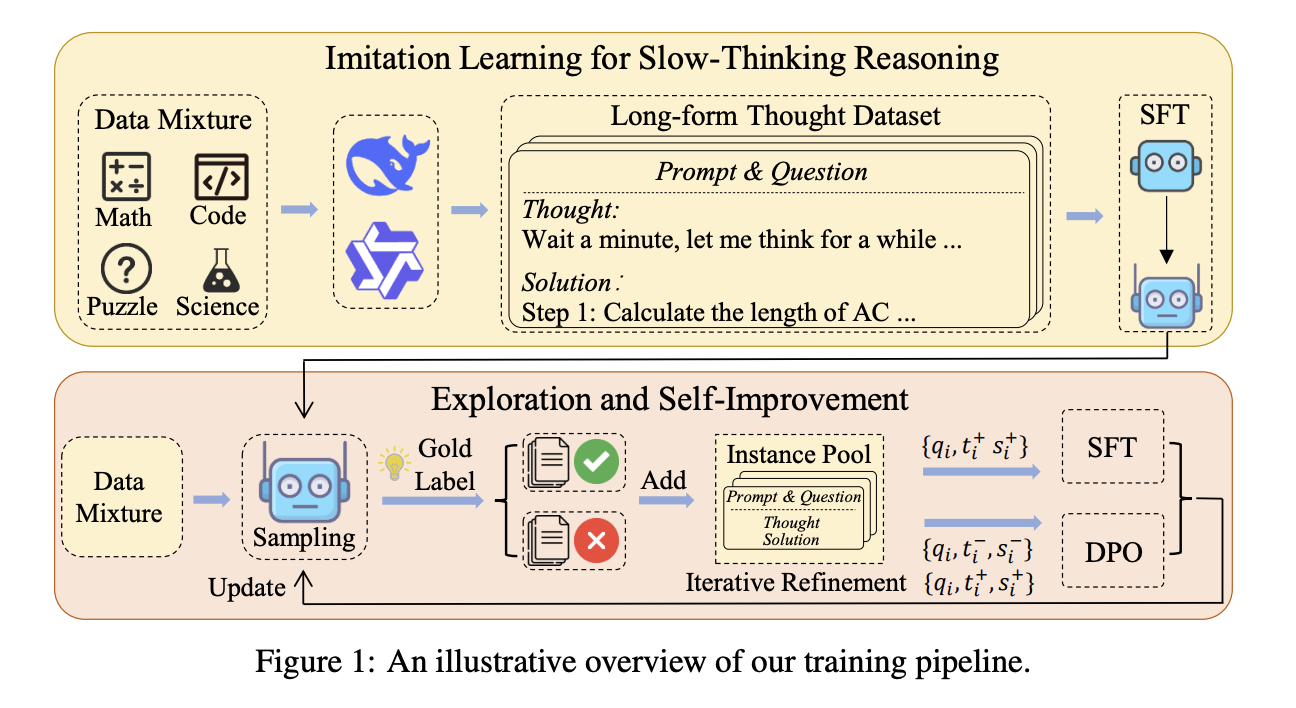

Slow Thinking with LLMs: Lessons from Imitation, Exploration, and Self-Improvement

Reasoning systems such as o1 from OpenAI were recently introduced to solve complex tasks using slow-thinking processes. However, it is […]

Advancing Clinical Decision Support: Evaluating the Medical Reasoning Capabilities of OpenAI’s o1-Preview Model

The evaluation of LLMs in medical tasks has traditionally relied on multiple-choice question benchmarks. However, these benchmarks are limited in […]

Meet Genesis: An Open-Source Physics AI Engine Redefining Robotics with Ultra-Fast Simulations and Generative 4D Worlds

The robotics and embodied AI field has long struggled with accessibility and efficiency issues. Creating realistic physical simulations requires extensive […]

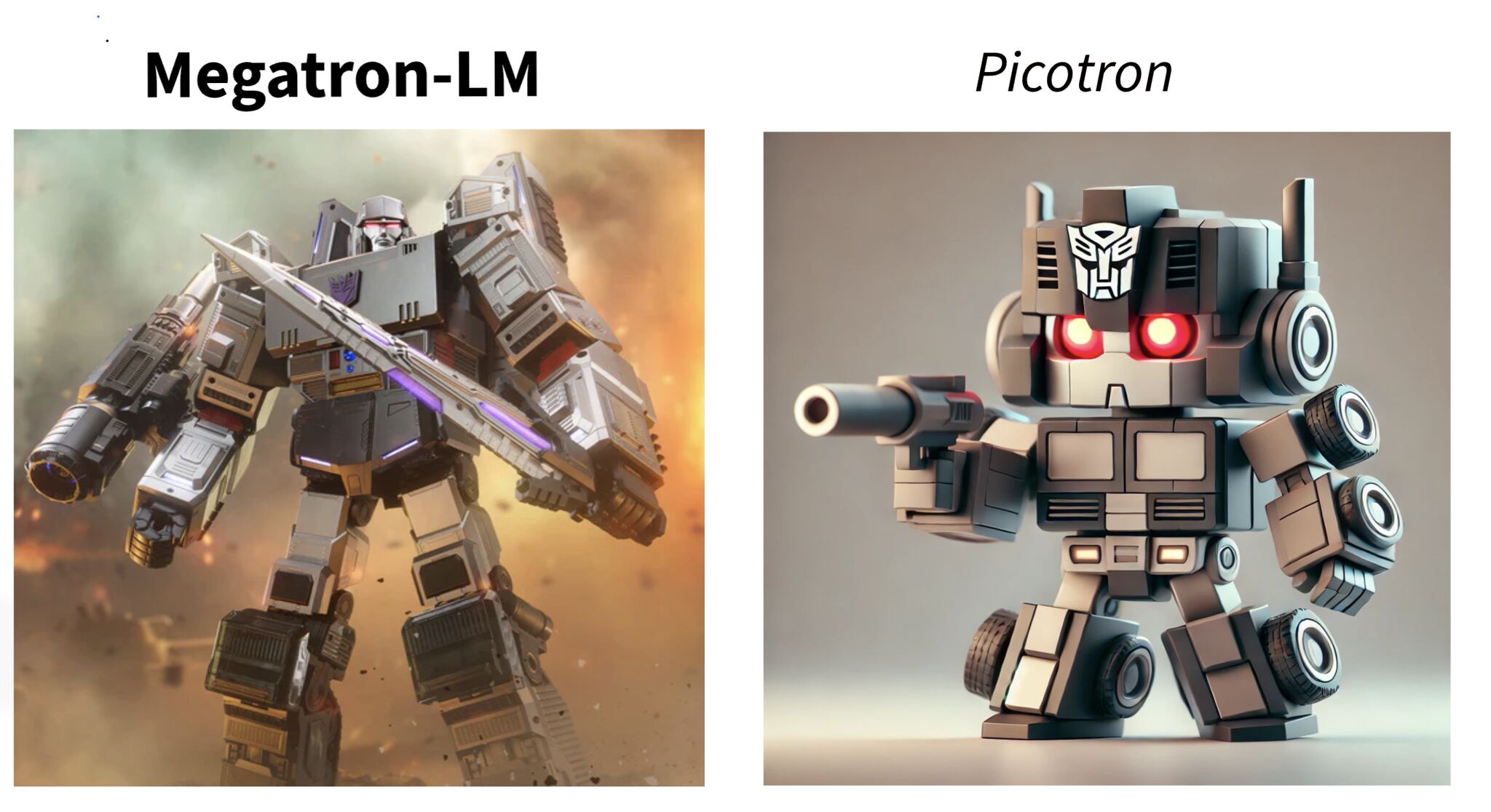

Hugging Face Releases Picotron: A Tiny Framework that Solves LLM Training 4D Parallelization

The rise of large language models (LLMs) has transformed natural language processing, but training these models comes with significant challenges. […]

Google DeepMind Introduces ‘SALT’: A Machine Learning Approach to Efficiently Train High-Performing Large Language Models using SLMs

Large Language Models (LLMs) are the backbone of numerous applications, such as conversational agents, automated content creation, and natural language […]