In this tutorial, we explore the full capabilities of Z.AI’s GLM-5 model and build a complete understanding of how to use it for real-world, agentic applications. We start from the fundamentals by setting up the environment using the Z.AI SDK and its OpenAI-compatible interface, and then progressively move on to advanced features such as streaming responses, thinking mode for deeper reasoning, and multi-turn conversations. As we continue, we integrate function calling and structured outputs, and eventually construct a fully functional multi-tool agent powered by GLM-5. Along the way, we examine each capability in isolation and see how Z.AI’s ecosystem enables us to build scalable, production-ready AI systems.

```python
# Notebook cell: install the Z.AI SDK, the OpenAI SDK, and rich
!pip install -q zai-sdk openai rich

import os
import json
import time
from datetime import datetime
from typing import Optional
import getpass

# Read the key from the environment, falling back to a hidden prompt
API_KEY = os.environ.get("ZAI_API_KEY")

if not API_KEY:
    API_KEY = getpass.getpass("🔑 Enter your Z.AI API key (hidden input): ").strip()

if not API_KEY:
    raise ValueError(
        "❌ No API key provided! Get one free at: https://z.ai/manage-apikey/apikey-list"
    )

os.environ["ZAI_API_KEY"] = API_KEY
print(f"✅ API key configured (ends with ...{API_KEY[-4:]})")

from zai import ZaiClient

client = ZaiClient(api_key=API_KEY)
print("✅ ZaiClient initialized, ready to use GLM-5!")

print("\n" + "=" * 70)
print("📝 SECTION 2: Basic Chat Completion")
print("=" * 70)

response = client.chat.completions.create(
    model="glm-5",
    messages=[
        {"role": "system", "content": "You are a concise, expert software architect."},
        {"role": "user", "content": "Explain the Mixture-of-Experts architecture in 3 sentences."},
    ],
    max_tokens=256,
    temperature=0.7,
)

print("\n🤖 GLM-5 Response:")
print(response.choices[0].message.content)
print(f"\n📊 Usage: {response.usage.prompt_tokens} prompt + {response.usage.completion_tokens} completion tokens")

print("\n" + "=" * 70)
print("🌊 SECTION 3: Streaming Responses")
print("=" * 70)

print("\n🤖 GLM-5 (streaming): ", end="", flush=True)

stream = client.chat.completions.create(
    model="glm-5",
    messages=[
        {"role": "user", "content": "Write a Python one-liner that checks if a number is prime."},
    ],
    stream=True,
    max_tokens=512,
    temperature=0.6,
)

# Print each text delta as it arrives and keep a copy of the full reply
full_response = ""
for chunk in stream:
    delta = chunk.choices[0].delta
    if delta.content:
        print(delta.content, end="", flush=True)
        full_response += delta.content

print(f"\n\n📊 Streamed {len(full_response)} characters")
```

We begin by installing the Z.AI and OpenAI SDKs, then securely capture our API key through hidden terminal input using getpass. We initialize the ZaiClient and fire off our first basic chat completion to GLM-5, asking it to explain the Mixture-of-Experts architecture. We then explore streaming responses, watching tokens arrive in real time as GLM-5 generates a Python one-liner for prime checking.
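The streaming loop reads `chunk.choices[0].delta.content` for every chunk and concatenates the pieces. As a minimal sketch, that accumulation can be factored into a helper and exercised offline. The names `collect_stream` and `fake_chunk` are illustrative assumptions rather than part of the Z.AI SDK, and the guard for chunks without `choices` is a defensive assumption, not documented behavior.

```python
from types import SimpleNamespace
from typing import Iterable


def collect_stream(chunks: Iterable, echo: bool = False) -> str:
    """Concatenate the text deltas of a chat-completion stream."""
    parts = []
    for chunk in chunks:
        # Defensive assumption: skip chunks that carry no choices.
        if not getattr(chunk, "choices", None):
            continue
        text = getattr(chunk.choices[0].delta, "content", None)
        if text:
            if echo:
                print(text, end="", flush=True)
            parts.append(text)
    return "".join(parts)


def fake_chunk(text):
    """Hypothetical stand-in that mimics the shape of a streamed chunk."""
    delta = SimpleNamespace(content=text)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])


# Offline demo: the middle chunk has no text and is skipped.
demo = [fake_chunk("is_prime = "), fake_chunk(None), fake_chunk("lambda n: ...")]
streamed = collect_stream(demo)
```

With a live client, the same helper could be called as `collect_stream(stream, echo=True)` on the object returned by `client.chat.completions.create(..., stream=True)`.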

```python
print("\n" + "=" * 70)
print("🧠 SECTION 4: Thinking Mode (Chain-of-Thought)")
print("=" * 70)
print("GLM-5 can expose its internal reasoning before giving a final answer.")
print("This is especially powerful for math, logic, and complex coding tasks.\n")

print("─── Thinking Mode + Streaming ───\n")

stream = client.chat.completions.create(
    model="glm-5",
    messages=[
        {
            "role": "user",
            "content": (
                "A farmer has 17 sheep. All but 9 run away. "
                "How many sheep does the farmer have left? "
                "Think carefully before answering."
            ),
        },
    ],
    thinking={"type": "enabled"},
    stream=True,
    max_tokens=2048,
    temperature=0.6,
)

reasoning_text = ""
answer_text = ""

for chunk in stream:
    delta = chunk.choices[0].delta
    # Reasoning tokens arrive in a separate field from the final answer
    if hasattr(delta, "reasoning_content") and delta.reasoning_content:
        if not reasoning_text:
            print("💭 Reasoning:")
        print(delta.reasoning_content, end="", flush=True)
        reasoning_text += delta.reasoning_content
    if delta.content:
        if not answer_text and reasoning_text:
            print("\n\n✅ Final Answer:")
        print(delta.content, end="", flush=True)
        answer_text += delta.content

print(f"\n\n📊 Reasoning: {len(reasoning_text)} chars | Answer: {len(answer_text)} chars")

print("\n" + "=" * 70)
print("💬 SECTION 5: Multi-Turn Conversation")
print("=" * 70)

messages = [
    {"role": "system", "content": "You are a senior Python developer. Be concise."},
    {"role": "user", "content": "What's the difference between a list and a tuple in Python?"},
]

# Turn 1
r1 = client.chat.completions.create(model="glm-5", messages=messages, max_tokens=512, temperature=0.7)
assistant_reply_1 = r1.choices[0].message.content
messages.append({"role": "assistant", "content": assistant_reply_1})
print(f"\n🧑 User: {messages[1]['content']}")
print(f"🤖 GLM-5: {assistant_reply_1[:200]}...")

# Turn 2: the full history is resent so the model keeps context
messages.append({"role": "user", "content": "When should I use a NamedTuple instead?"})
r2 = client.chat.completions.create(model="glm-5", messages=messages, max_tokens=512, temperature=0.7)
assistant_reply_2 = r2.choices[0].message.content
print(f"\n🧑 User: {messages[-1]['content']}")
print(f"🤖 GLM-5: {assistant_reply_2[:200]}...")

# Turn 3
messages.append({"role": "assistant", "content": assistant_reply_2})
messages.append({"role": "user", "content": "Show me a practical example with type hints."})
r3 = client.chat.completions.create(model="glm-5", messages=messages, max_tokens=1024, temperature=0.7)
assistant_reply_3 = r3.choices[0].message.content
print(f"\n🧑 User: {messages[-1]['content']}")
print(f"🤖 GLM-5: {assistant_reply_3[:300]}...")

# +1 counts the final assistant reply, which is not appended to `messages`
print(f"\n📊 Conversation: {len(messages)+1} messages, {r3.usage.total_tokens} total tokens in last call")
```

We activate GLM-5’s thinking mode to observe its internal chain-of-thought reasoning streamed live through the reasoning_content field before the final answer appears. We then build a multi-turn conversation where we ask about Python lists vs tuples, follow up on NamedTuples, and request a practical example with type hints, all while GLM-5 maintains full context across turns because we resend the accumulated message history on every call. We close by reporting the total message count and the token usage of the final call.
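Because every call resends the whole `messages` list, prompt size grows with each turn. One way to bound it, sketched here under stated assumptions, is to keep the system prompt plus only the most recent messages. The `trim_history` helper and its `max_messages` parameter are hypothetical names introduced for illustration; nothing in the tutorial or the SDK prescribes this policy.

```python
def trim_history(messages: list, max_messages: int = 6) -> list:
    """Keep system messages plus the most recent `max_messages` other messages."""
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    if max_messages <= 0:
        return system
    return system + rest[-max_messages:]


# Offline demo: one system prompt followed by four user/assistant exchanges.
history = [{"role": "system", "content": "You are a senior Python developer. Be concise."}]
for i in range(1, 5):
    history.append({"role": "user", "content": f"question {i}"})
    history.append({"role": "assistant", "content": f"answer {i}"})

trimmed = trim_history(history, max_messages=4)
```

The original list is left untouched, so the full transcript stays available for logging. A conversation that contains tool calls would need extra care, since dropping an assistant message while keeping its `tool` reply could leave the history inconsistent.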

```python
print("\n" + "=" * 70)
print("🔧 SECTION 6: Function Calling (Tool Use)")
print("=" * 70)
print("GLM-5 can decide WHEN and HOW to call external functions you define.\n")

tools = [
    {
        "type": "function",
        "function": {
            "name": "get_weather",
            "description": "Get the current weather for a city",
            "parameters": {
                "type": "object",
                "properties": {
                    "city": {
                        "type": "string",
                        "description": "City name, e.g. 'San Francisco', 'Tokyo'",
                    },
                    "unit": {
                        "type": "string",
                        "enum": ["celsius", "fahrenheit"],
                        "description": "Temperature unit (default: celsius)",
                    },
                },
                "required": ["city"],
            },
        },
    },
    {
        "type": "function",
        "function": {
            "name": "calculate",
            "description": "Evaluate a mathematical expression safely",
            "parameters": {
                "type": "object",
                "properties": {
                    "expression": {
                        "type": "string",
                        "description": "Math expression, e.g. '2**10 + 3*7'",
                    }
                },
                "required": ["expression"],
            },
        },
    },
]


def get_weather(city: str, unit: str = "celsius") -> dict:
    # Mock data standing in for a real weather API
    weather_db = {
        "san francisco": {"temp": 18, "condition": "Foggy", "humidity": 78},
        "tokyo": {"temp": 28, "condition": "Sunny", "humidity": 55},
        "london": {"temp": 14, "condition": "Rainy", "humidity": 85},
        "new york": {"temp": 22, "condition": "Partly Cloudy", "humidity": 60},
    }
    data = weather_db.get(city.lower(), {"temp": 20, "condition": "Clear", "humidity": 50})
    if unit == "fahrenheit":
        data["temp"] = round(data["temp"] * 9 / 5 + 32)
    return {"city": city, "unit": unit or "celsius", **data}


def calculate(expression: str) -> dict:
    # Only digits, arithmetic operators, and parentheses reach eval()
    allowed = set("0123456789+-*/.()% ")
    if not all(c in allowed for c in expression):
        return {"error": "Invalid characters in expression"}
    try:
        result = eval(expression)
        return {"expression": expression, "result": result}
    except Exception as e:
        return {"error": str(e)}


TOOL_REGISTRY = {"get_weather": get_weather, "calculate": calculate}


def run_tool_call(user_message: str):
    print(f"\n🧑 User: {user_message}")
    messages = [{"role": "user", "content": user_message}]

    # First call: the model decides whether to call a tool
    response = client.chat.completions.create(
        model="glm-5",
        messages=messages,
        tools=tools,
        tool_choice="auto",
        max_tokens=1024,
    )

    assistant_msg = response.choices[0].message
    messages.append(assistant_msg.model_dump())

    if assistant_msg.tool_calls:
        for tc in assistant_msg.tool_calls:
            fn_name = tc.function.name
            fn_args = json.loads(tc.function.arguments)
            print(f"  🔧 Tool call: {fn_name}({fn_args})")
            result = TOOL_REGISTRY[fn_name](**fn_args)
            print(f"  📦 Result: {result}")
            messages.append({
                "role": "tool",
                "content": json.dumps(result, ensure_ascii=False),
                "tool_call_id": tc.id,
            })

        # Second call: the model turns the tool results into an answer
        final = client.chat.completions.create(
            model="glm-5",
            messages=messages,
            tools=tools,
            max_tokens=1024,
        )
        print(f"🤖 GLM-5: {final.choices[0].message.content}")
    else:
        print(f"🤖 GLM-5: {assistant_msg.content}")


run_tool_call("What's the weather like in Tokyo right now?")
run_tool_call("What is 2^20 + 3^10 - 1024?")
run_tool_call("Compare the weather in San Francisco and London, and calculate the temperature difference.")

print("\n" + "=" * 70)
print("📋 SECTION 7: Structured JSON Output")
print("=" * 70)
print("Force GLM-5 to return well-structured JSON for downstream processing.\n")

response = client.chat.completions.create(
    model="glm-5",
    messages=[
        {
            "role": "system",
            "content": (
                "You are a data extraction assistant. "
                "Always respond with valid JSON only: no markdown, no explanation."
            ),
        },
        {
            "role": "user",
            "content": (
                "Extract structured data from this text:\n\n"
                '"Acme Corp reported Q3 2025 revenue of $4.2B, up 18% YoY. '
                "Net income was $890M. The company announced 3 new products "
                "and plans to expand into 5 new markets by 2026. CEO Jane Smith "
                'said she expects 25% growth next year."\n\n'
                "Return JSON with keys: company, quarter, revenue, revenue_growth, "
                "net_income, new_products, new_markets, ceo, growth_forecast"
            ),
        },
    ],
    max_tokens=512,
    temperature=0.1,
)

raw_output = response.choices[0].message.content
print("📄 Raw output:")
print(raw_output)

try:
    clean = raw_output.strip()
    # Strip a Markdown code fence if the model added one anyway
    if clean.startswith("```"):
        clean = clean.split("\n", 1)[1].rsplit("```", 1)[0]
    parsed = json.loads(clean)
    print("\n✅ Parsed JSON:")
    print(json.dumps(parsed, indent=2))
except json.JSONDecodeError as e:
    print(f"\n⚠️ JSON parsing failed: {e}")
    print("Tip: You can add response_format={'type': 'json_object'} for stricter enforcement")
```

We define two tools, a weather lookup and a math calculator, then let GLM-5 autonomously decide when to invoke them based on the user’s natural language query. We run a complete tool-calling round-trip: the model selects the function, we execute it locally, feed the result back, and GLM-5 synthesizes a final human-readable answer. We then switch to structured output, prompting GLM-5 to extract financial data from raw text into clean, parseable JSON.
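The `calculate` tool guards `eval` with a character whitelist. As an alternative sketch (a swapped-in technique, not the tutorial's method), the expression can be parsed with Python's `ast` module and evaluated by walking only arithmetic nodes, so nothing is ever handed to `eval`. The names `safe_calculate` and `_eval_node` and the exponent cap of 1000 are assumptions chosen for illustration.

```python
import ast
import operator

# Arithmetic operators this sketch is willing to evaluate.
_BIN_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.Mod: operator.mod,
    ast.Pow: operator.pow,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}


def _eval_node(node):
    """Recursively evaluate a parsed expression, rejecting anything non-arithmetic."""
    if isinstance(node, ast.Constant) and type(node.value) in (int, float):
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
        left, right = _eval_node(node.left), _eval_node(node.right)
        # Assumed cap to avoid huge results from inputs like 9**9**9.
        if isinstance(node.op, ast.Pow) and abs(right) > 1000:
            raise ValueError("Exponent too large")
        return _BIN_OPS[type(node.op)](left, right)
    if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
        return _UNARY_OPS[type(node.op)](_eval_node(node.operand))
    raise ValueError("Unsupported expression")


def safe_calculate(expression: str) -> dict:
    """Return the same result/error dict shape as the tutorial's calculate tool."""
    try:
        tree = ast.parse(expression.strip(), mode="eval")
        return {"expression": expression, "result": _eval_node(tree.body)}
    except (SyntaxError, ValueError, ZeroDivisionError, OverflowError) as e:
        return {"error": str(e)}


demo_ok = safe_calculate("2**20 + 3**10 - 1024")
demo_call = safe_calculate("__import__('os')")
demo_xor = safe_calculate("2^20")
```

Function calls, names, and attribute access all fall through to the "Unsupported expression" error. Note that `^` is bitwise XOR in Python rather than exponentiation, so this sketch rejects it instead of silently computing something else; the tool description's `2**10` example nudges the model toward `**`.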

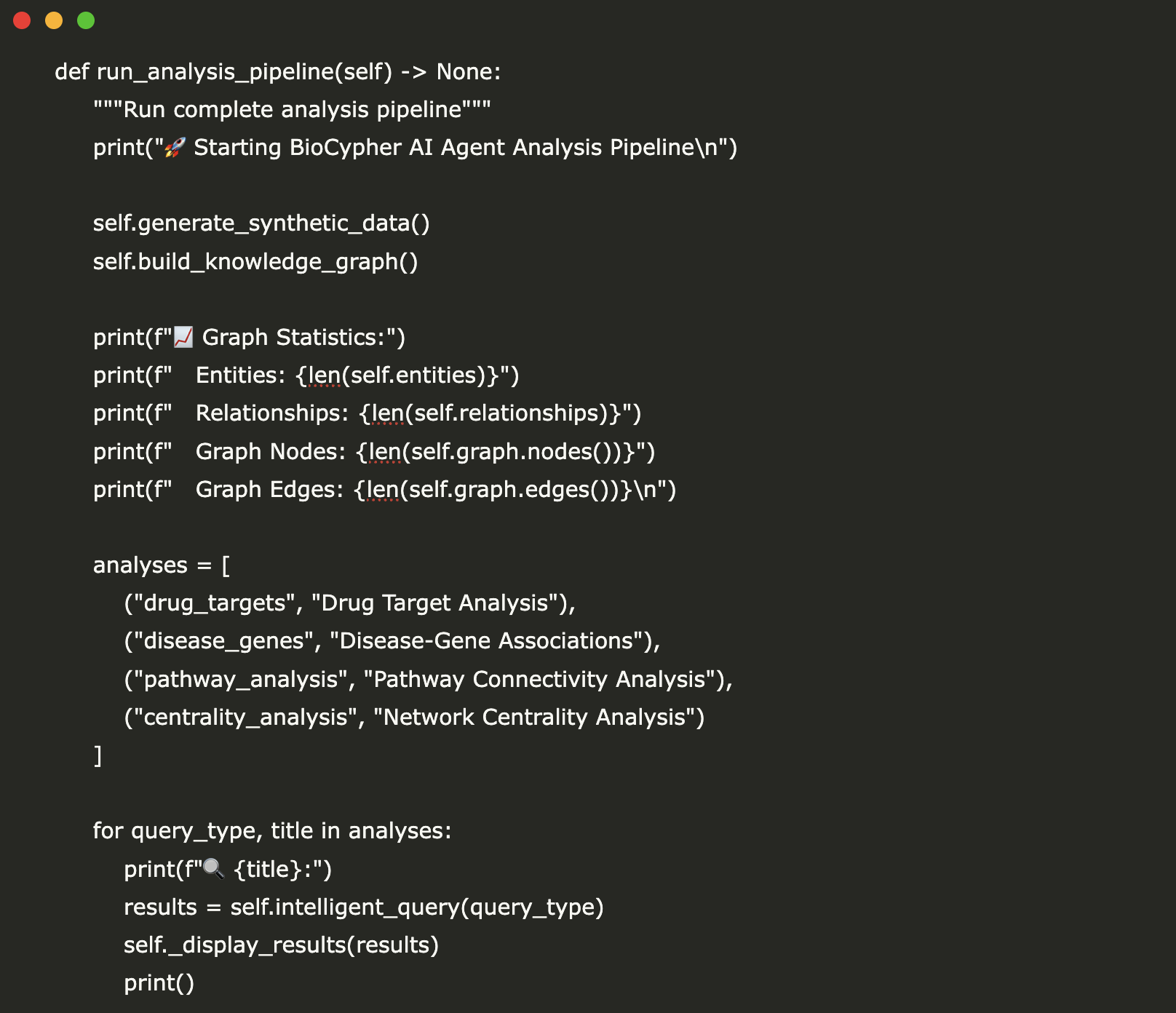

```python
from datetime import datetime, timezone

print("\n" + "=" * 70)
print("🤖 SECTION 8: Multi-Tool Agentic Loop")
print("=" * 70)
print("Build a complete agent that can use multiple tools across turns.\n")


class GLM5Agent:
    def __init__(self, system_prompt: str, tools: list, tool_registry: dict):
        self.client = ZaiClient(api_key=API_KEY)
        self.messages = [{"role": "system", "content": system_prompt}]
        self.tools = tools
        self.registry = tool_registry
        self.max_iterations = 5

    def chat(self, user_input: str) -> str:
        self.messages.append({"role": "user", "content": user_input})

        # Loop until the model answers without requesting another tool
        for iteration in range(self.max_iterations):
            response = self.client.chat.completions.create(
                model="glm-5",
                messages=self.messages,
                tools=self.tools,
                tool_choice="auto",
                max_tokens=2048,
                temperature=0.6,
            )

            msg = response.choices[0].message
            self.messages.append(msg.model_dump())

            if not msg.tool_calls:
                return msg.content

            for tc in msg.tool_calls:
                fn_name = tc.function.name
                fn_args = json.loads(tc.function.arguments)
                print(f"  🔧 [{iteration+1}] {fn_name}({fn_args})")

                if fn_name in self.registry:
                    result = self.registry[fn_name](**fn_args)
                else:
                    result = {"error": f"Unknown function: {fn_name}"}

                self.messages.append({
                    "role": "tool",
                    "content": json.dumps(result, ensure_ascii=False),
                    "tool_call_id": tc.id,
                })

        return "⚠️ Agent reached maximum iterations without a final answer."


extended_tools = tools + [
    {
        "type": "function",
        "function": {
            "name": "get_current_time",
            "description": "Get the current date and time in ISO format",
            "parameters": {
                "type": "object",
                "properties": {},
                "required": [],
            },
        },
    },
    {
        "type": "function",
        "function": {
            "name": "unit_converter",
            "description": "Convert between units (length, weight, temperature)",
            "parameters": {
                "type": "object",
                "properties": {
                    "value": {"type": "number", "description": "Numeric value to convert"},
                    "from_unit": {"type": "string", "description": "Source unit (e.g., 'km', 'miles', 'kg', 'lbs', 'celsius', 'fahrenheit')"},
                    "to_unit": {"type": "string", "description": "Target unit"},
                },
                "required": ["value", "from_unit", "to_unit"],
            },
        },
    },
]


def get_current_time() -> dict:
    # Use an explicit UTC clock so the value matches the "UTC" label
    return {"datetime": datetime.now(timezone.utc).isoformat(), "timezone": "UTC"}


def unit_converter(value: float, from_unit: str, to_unit: str) -> dict:
    conversions = {
        ("km", "miles"): lambda v: v * 0.621371,
        ("miles", "km"): lambda v: v * 1.60934,
        ("kg", "lbs"): lambda v: v * 2.20462,
        ("lbs", "kg"): lambda v: v * 0.453592,
        ("celsius", "fahrenheit"): lambda v: v * 9 / 5 + 32,
        ("fahrenheit", "celsius"): lambda v: (v - 32) * 5 / 9,
        ("meters", "feet"): lambda v: v * 3.28084,
        ("feet", "meters"): lambda v: v * 0.3048,
    }
    key = (from_unit.lower(), to_unit.lower())
    if key in conversions:
        result = round(conversions[key](value), 4)
        return {"value": value, "from": from_unit, "to": to_unit, "result": result}
    return {"error": f"Conversion {from_unit} → {to_unit} not supported"}


extended_registry = {
    **TOOL_REGISTRY,
    "get_current_time": get_current_time,
    "unit_converter": unit_converter,
}

agent = GLM5Agent(
    system_prompt=(
        "You are a helpful assistant with access to weather, math, time, and "
        "unit conversion tools. Use them whenever they can help answer the user's "
        "question accurately. Always show your work."
    ),
    tools=extended_tools,
    tool_registry=extended_registry,
)

print("🧑 User: What time is it? Also, if it's 28°C in Tokyo, what's that in Fahrenheit?")
print("         And what's 2^16?")
result = agent.chat(
    "What time is it? Also, if it's 28°C in Tokyo, what's that in Fahrenheit? "
    "And what's 2^16?"
)
print(f"\n🤖 Agent: {result}")

print("\n" + "=" * 70)
print("⚖️ SECTION 9: Thinking Mode ON vs OFF Comparison")
print("=" * 70)
print("See how thinking mode improves accuracy on a tricky logic problem.\n")

tricky_question = (
    "I have 12 coins. One of them is counterfeit and weighs differently than the rest. "
)

print("─── WITHOUT Thinking Mode ───")
t0 = time.time()
r_no_think = client.chat.completions.create(
    model="glm-5",
    messages=[{"role": "user", "content": tricky_question}],
    thinking={"type": "disabled"},
    max_tokens=2048,
    temperature=0.6,
)
t1 = time.time()
print(f"⏱️ Time: {t1-t0:.1f}s | Tokens: {r_no_think.usage.completion_tokens}")
print(f"📝 Answer (first 300 chars): {r_no_think.choices[0].message.content[:300]}...")

print("\n─── WITH Thinking Mode ───")
t0 = time.time()
r_think = client.chat.completions.create(
    model="glm-5",
    messages=[{"role": "user", "content": tricky_question}],
    thinking={"type": "enabled"},
    max_tokens=4096,
    temperature=0.6,
)
t1 = time.time()
print(f"⏱️ Time: {t1-t0:.1f}s | Tokens: {r_think.usage.completion_tokens}")
print(f"📝 Answer (first 300 chars): {r_think.choices[0].message.content[:300]}...")
```

We build a reusable GLM5Agent class that runs a full agentic loop, automatically dispatching to weather, math, time, and unit conversion tools for up to five iterations until it reaches a final answer. We test it with a complex multi-part query that requires calling three different tools in a single turn. We then run a side-by-side comparison of the same tricky 12-coin logic puzzle with thinking mode disabled versus enabled, measuring response time and completion tokens and previewing each answer.
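The agent loop calls `json.loads` and the registered function directly, so malformed arguments from the model would raise inside `chat`. A hedged sketch of a more forgiving dispatch step follows: it converts every failure into an error dict that can be sent back as the `tool` message. The `dispatch_tool` helper and the `add` demo tool are hypothetical and not part of the tutorial's agent.

```python
import json


def dispatch_tool(registry: dict, name: str, raw_args: str) -> dict:
    """Run one tool call and always return a JSON-serializable dict."""
    if name not in registry:
        return {"error": f"Unknown function: {name}"}
    try:
        args = json.loads(raw_args or "{}")
    except json.JSONDecodeError as e:
        return {"error": f"Malformed arguments: {e}"}
    if not isinstance(args, dict):
        return {"error": "Arguments must be a JSON object"}
    try:
        return registry[name](**args)
    except TypeError as e:
        # Typically raised for missing or unexpected keyword arguments.
        return {"error": f"Bad arguments for {name}: {e}"}
    except Exception as e:
        return {"error": f"{name} failed: {e}"}


def add(a: float, b: float) -> dict:
    """Hypothetical demo tool."""
    return {"result": a + b}


demo_registry = {"add": add}
ok = dispatch_tool(demo_registry, "add", '{"a": 2, "b": 3}')
missing = dispatch_tool(demo_registry, "add", '{"a": 2}')
broken = dispatch_tool(demo_registry, "add", '{"a": 2,')
unknown = dispatch_tool(demo_registry, "subtract", "{}")
```

Returning the error to the model, rather than raising, gives it a chance to retry with corrected arguments on the next iteration of the loop.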

```python
print("\n" + "=" * 70)
print("🔄 SECTION 10: OpenAI SDK Compatibility")
print("=" * 70)
print("GLM-5 is fully compatible with the OpenAI Python SDK.")
print("Just change the base_url and your existing OpenAI code works as-is!\n")

from openai import OpenAI

# Point the standard OpenAI client at Z.AI's endpoint
openai_client = OpenAI(
    api_key=API_KEY,
    base_url="https://api.z.ai/api/paas/v4/",
)

completion = openai_client.chat.completions.create(
    model="glm-5",
    messages=[
        {"role": "system", "content": "You are a writing assistant."},
        {
            "role": "user",
            "content": "Write a 4-line poem about artificial intelligence discovering nature.",
        },
    ],
    max_tokens=256,
    temperature=0.9,
)

print("🤖 GLM-5 (via OpenAI SDK):")
print(completion.choices[0].message.content)

print("\n🌊 Streaming (via OpenAI SDK):")
stream = openai_client.chat.completions.create(
    model="glm-5",
    messages=[
        {
            "role": "user",
            "content": "List 3 creative use cases for a 744B parameter MoE model. Be brief.",
        }
    ],
    stream=True,
    max_tokens=512,
)

for chunk in stream:
    if chunk.choices[0].delta.content:
        print(chunk.choices[0].delta.content, end="", flush=True)
print()

print("\n" + "=" * 70)
print("🎉 Tutorial Complete!")
print("=" * 70)
print("""
You've learned how to use GLM-5 for:

  ✅ Basic chat completions
  ✅ Real-time streaming responses
  ✅ Thinking mode (chain-of-thought reasoning)
  ✅ Multi-turn conversations with context
  ✅ Function calling / tool use
  ✅ Structured JSON output extraction
  ✅ Building a multi-tool agentic loop
  ✅ Comparing thinking mode ON vs OFF
  ✅ Drop-in OpenAI SDK compatibility

📚 Next steps:
  • GLM-5 Docs: https://docs.z.ai/guides/llm/glm-5
  • Function Calling: https://docs.z.ai/guides/capabilities/function-calling
  • Structured Output: https://docs.z.ai/guides/capabilities/struct-output
  • Context Caching: https://docs.z.ai/guides/capabilities/cache
  • Web Search Tool: https://docs.z.ai/guides/tools/web-search
  • GitHub: https://github.com/zai-org/GLM-5
  • API Keys: https://z.ai/manage-apikey/apikey-list

💡 Pro tip: GLM-5 also supports web search and context caching via
   the API for even more powerful applications!
""")
```

We demonstrate that GLM-5 works with the standard OpenAI Python SDK: we simply point base_url at Z.AI’s endpoint, and the same chat-completion calls run unchanged. We test both a standard completion for creative writing and a streaming call that lists use cases for a 744B MoE model. We wrap up with a summary of the nine capabilities covered and links to the official docs for deeper exploration.
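Since only the constructor arguments differ between backends, the choice of endpoint can live in one small function. The sketch below is an assumption-laden illustration: `openai_client_kwargs` and the `provider` values are hypothetical names, the Z.AI base URL is the one used in the tutorial, and `OPENAI_API_KEY` is assumed to hold the key for the default OpenAI endpoint.

```python
import os

# Base URL taken from the tutorial's OpenAI-compatible example.
ZAI_BASE_URL = "https://api.z.ai/api/paas/v4/"


def openai_client_kwargs(provider: str = "zai") -> dict:
    """Return keyword arguments for openai.OpenAI(...) for the chosen provider."""
    if provider == "zai":
        return {"api_key": os.environ.get("ZAI_API_KEY", ""), "base_url": ZAI_BASE_URL}
    if provider == "openai":
        # No base_url: the SDK falls back to its default endpoint.
        return {"api_key": os.environ.get("OPENAI_API_KEY", "")}
    raise ValueError(f"Unknown provider: {provider}")


zai_kwargs = openai_client_kwargs("zai")
```

With the SDK installed, the client would then be built as `OpenAI(**openai_client_kwargs("zai"))`, and the rest of the calling code stays the same apart from the `model` name.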
