“Lowering the threshold for scrutiny”

Overall, across 1,372 participants and more than 9,500 individual trials, the researchers found subjects were willing to accept faulty AI reasoning a whopping 73.2 percent of the time, while overruling it only 19.7 percent of the time. The researchers say this “demonstrate[s] that people readily incorporate AI-generated outputs into their decision-making processes, often with minimal friction or skepticism.” In general, “fluent, confident outputs [are treated] as epistemically authoritative, lowering the threshold for scrutiny and attenuating the meta-cognitive signals that would ordinarily route a response to deliberation,” they write.

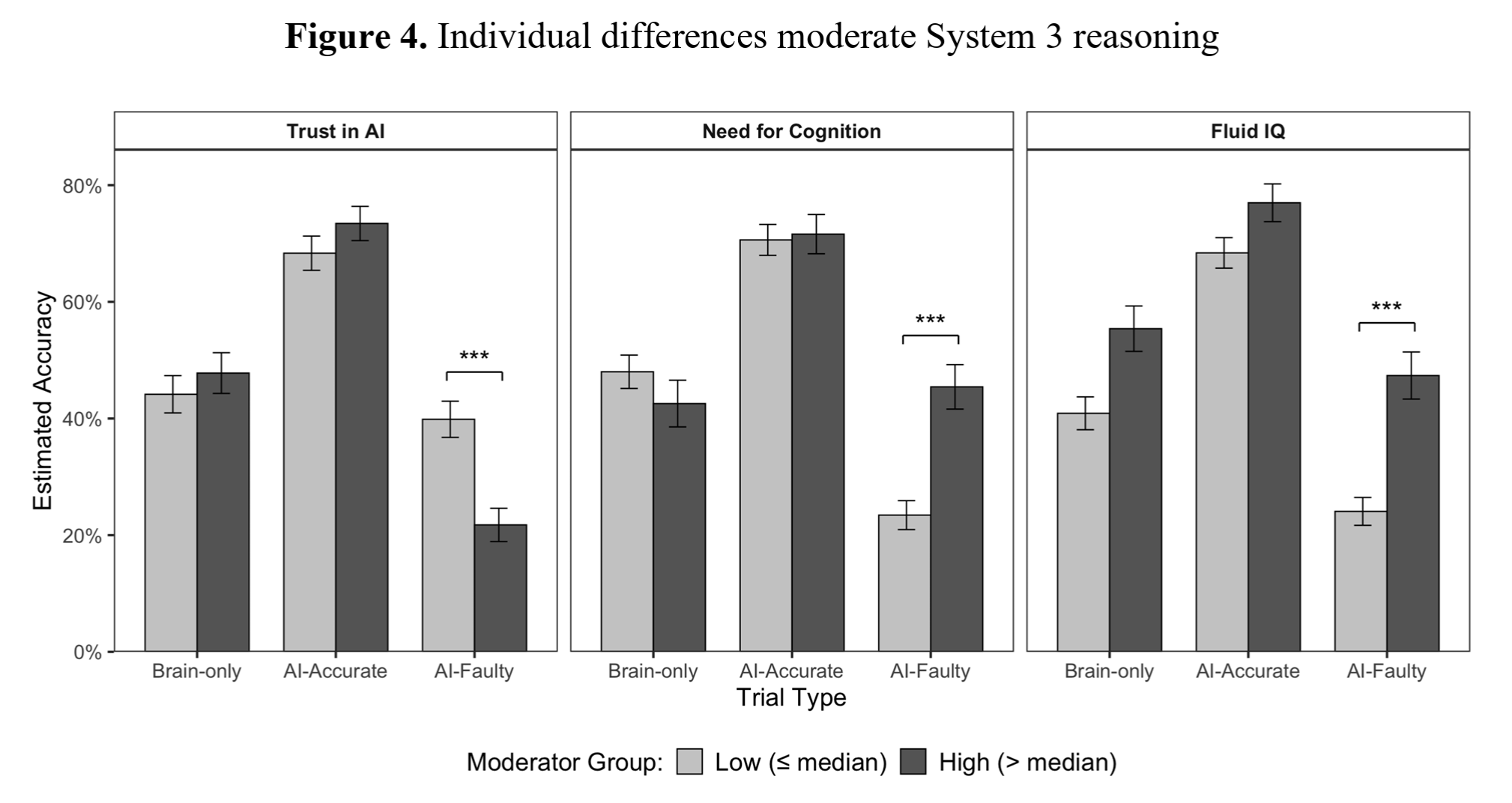

Subjects with high trust in AI were more likely to be misled by faulty responses, while those with high “Fluid IQ” were less likely to be misled by the AI. Credit: Shaw and Nave

These kinds of effects weren’t uniform across all test subjects, though. Those who scored highly on separate measures of so-called fluid IQ were less likely to rely on the AI for help and were more likely to overrule a faulty AI when it was consulted. Those whose survey responses showed a predisposition to see AI as authoritative, on the other hand, were much more likely to be led astray by faulty AI-provided answers.

Despite the results, the researchers point out that “cognitive surrender is not inherently irrational.” While relying on an LLM that’s wrong half the time (as in these experiments) has obvious downsides, a “statistically superior system” could plausibly give better-than-human results in domains such as “probabilistic settings, risk assessment, or extensive data,” the researchers suggest.

“As reliance increases, performance tracks AI quality,” the researchers write, “rising when accurate and falling when faulty, illustrating the promises of superintelligence and exposing a structural vulnerability of cognitive surrender.”
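To make that trade-off concrete, here is a minimal back-of-the-envelope sketch in Python. It is not the researchers’ model: it assumes a simple linear mix in which a person adopts the AI’s answer on some fraction of questions (`reliance`) and answers unaided on the rest. The function name, the 70 percent unaided accuracy, and the 95 percent “superior system” are hypothetical values chosen for illustration; only the 50 percent figure echoes the faulty-half-the-time setup described above.

```python
def expected_accuracy(reliance: float, ai_accuracy: float, human_accuracy: float) -> float:
    """Expected share of correct answers under an assumed linear mixture.

    reliance       -- fraction of questions where the AI's answer is adopted (0 to 1)
    ai_accuracy    -- probability that the AI's answer is correct
    human_accuracy -- probability that the person's unaided answer is correct
    """
    for name, value in (("reliance", reliance),
                        ("ai_accuracy", ai_accuracy),
                        ("human_accuracy", human_accuracy)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
    return reliance * ai_accuracy + (1.0 - reliance) * human_accuracy


if __name__ == "__main__":
    HUMAN_ACCURACY = 0.70  # hypothetical unaided accuracy, not a figure from the study

    scenarios = [
        ("AI wrong half the time", 0.50),          # mirrors the experimental setup
        ("hypothetical superior system", 0.95),    # illustrative value only
    ]
    for label, ai_accuracy in scenarios:
        for reliance in (0.0, 0.5, 1.0):
            score = expected_accuracy(reliance, ai_accuracy, HUMAN_ACCURACY)
            print(f"{label:30s} reliance={reliance:.1f} accuracy={score:.3f}")
```

Under these assumptions, leaning harder on the coin-flip AI drags expected accuracy down toward 50 percent, while leaning on the stronger system pulls it up toward that system’s accuracy. At full reliance, the person’s own ability drops out of the calculation entirely.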

In other words, letting an AI do your reasoning means your reasoning is only ever going to be as good as that AI system. As always, let the prompter beware.