CRYPTOGRAPHICALLY RELEVANT QUANTUM COMPUTING

No, the sky isn’t falling, but Q Day is coming, and it won’t be as expensive as thought.

Building a utility-scale quantum computer that can crack one of the most vital cryptosystems, elliptic curves, doesn’t require nearly the resources anticipated just a year or two ago, two independently written whitepapers have concluded. In one, researchers demonstrated the use of neutral atoms as reconfigurable qubits that have free access to each other. They went on to show this approach could allow a quantum computer to break 256-bit elliptic-curve cryptography (ECC) in 10 days while using 100 times less overhead than previously estimated. In a second paper, Google researchers demonstrated how to break the ECC that secures blockchains for bitcoin and other cryptocurrencies in less than nine minutes while achieving a 20-fold resource reduction.

Taken together, the papers are the latest sign that cryptographically relevant quantum computing (CRQC) at utility scale is making meaningful progress. The advances are largely being driven by new quantum architectures developed by physicists and computer scientists in a push to create quantum computers that operate correctly even in the presence of errors that occur whenever qubits, the quantum analog to classical computing bits, interact with their environment. The other key drivers are ever-more efficient implementations of Shor’s algorithm, the 1994 algorithm proving that quantum computing could break the ECC and RSA cryptosystems in polynomial time, specifically cubic time, far faster than the exponential time required by today’s classical computers.
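
To give a rough sense of the gap between cubic and exponential scaling, the following Python sketch compares n^3 with 2^(n/2) for a few key sizes. The 2^(n/2) figure is an assumption standing in for the cost of the best known generic classical attack on elliptic curves (Pollard's rho). The function names are illustrative, and constant factors, error correction, and hardware speed are all ignored, so the output is an order-of-magnitude comparison only, not a runtime estimate.

```python
def quantum_cost(bits):
    """Idealized operation count for cubic scaling: n^3."""
    return bits ** 3


def classical_cost(bits):
    """Idealized operation count for a square-root attack: 2^(n/2)."""
    return 2 ** (bits // 2)


for bits in (64, 128, 256):
    q = quantum_cost(bits)
    c = classical_cost(bits)
    # Print both counts in scientific notation for easy comparison.
    print(f"{bits:>3}-bit key: cubic ~ {q:.2e} steps, exponential ~ {c:.2e} steps")
```

At 256 bits the cubic count is in the tens of millions while the exponential count is 2^128, which is the gap that makes the attack impractical classically.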

Neither paper has been peer-reviewed.

“The research community continues to make steady progress on both the physical qubits and the quantum algorithms necessary to realize an efficient and practical CRQC,” said Brian LaMacchia, a cryptography engineer who oversaw Microsoft’s post-quantum transition from 2015 to 2022 and now works at Farcaster Consulting Group. “I don’t think either paper gives us a new, hard date for when we’re going to have a practical CRQC (which of course we’ve never had), but they both provide evidence that we are continuing to march down the road to a realizable CRQC and progress toward that goal is not slowing down.”

Trapping atoms in “optical tweezers”

The paper that is getting the most attention takes a relatively new approach to creating fault-tolerant quantum computing (FTQC) that can reduce the number of physical qubits required to break ECC by a factor of 100. Unlike more common approaches based on superconducting circuits, the researchers built physical qubits out of neutral atoms. Using lasers to cool atoms, the process traps individual atoms in tightly focused beams of light known as “optical tweezers.” Each tweezer snags a single atom. Using optical multiplexing, the researchers can make large arrays of these trapped atoms.

The benefit of this approach is that all physical qubits can interact with all other physical qubits. These “non-local” communications are a major departure from qubit interaction in superconducting approaches, where qubits are laid out on a 2D grid and can interact with only their four immediately adjacent qubit neighbors. The ability for qubits to interact with very far-away qubits makes error correction significantly more efficient, since non-local communication allows for drastically increasing the number and thoroughness of fault checks.
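
The difference in connectivity can be put in numbers with a small Python sketch. It counts the distinct qubit pairs that can interact directly in a square nearest-neighbor grid versus an arrangement where any qubit can reach any other. The function names and the 100 x 100 example size are assumptions chosen for illustration; the sketch says nothing about how many interactions can happen at once or how long it takes to move atoms.

```python
def grid_pairs(side):
    """Adjacent pairs in a side x side grid with four-neighbor coupling."""
    # Each row has (side - 1) horizontal links, each column (side - 1) vertical ones.
    return 2 * side * (side - 1)


def all_to_all_pairs(n):
    """Distinct pairs when every qubit can interact with every other qubit."""
    return n * (n - 1) // 2


side = 100                 # hypothetical 100 x 100 layout
n_qubits = side * side     # 10,000 qubits

print("grid pairs:      ", grid_pairs(side))
print("all-to-all pairs:", all_to_all_pairs(n_qubits))
```

For 10,000 qubits, the grid offers 19,800 directly coupled pairs, while unrestricted connectivity offers 49,995,000, which is the extra freedom that error-correcting codes with non-local checks can draw on.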

As a result, the researchers’ paper, titled “Shor’s algorithm is possible with as few as 10,000 reconfigurable atomic qubits,” says a quantum computer needs fewer than 30,000 physical qubits to break ECC-256 in 10 days, orders of magnitude more efficient than previous estimates. A separate research team last year showed that they could build neutral atom trapping arrays exceeding 6,000 qubits. Combined with advances in large-scale quantum operations with high fidelity, neutral atoms have the potential to run fault-tolerant quantum computing.

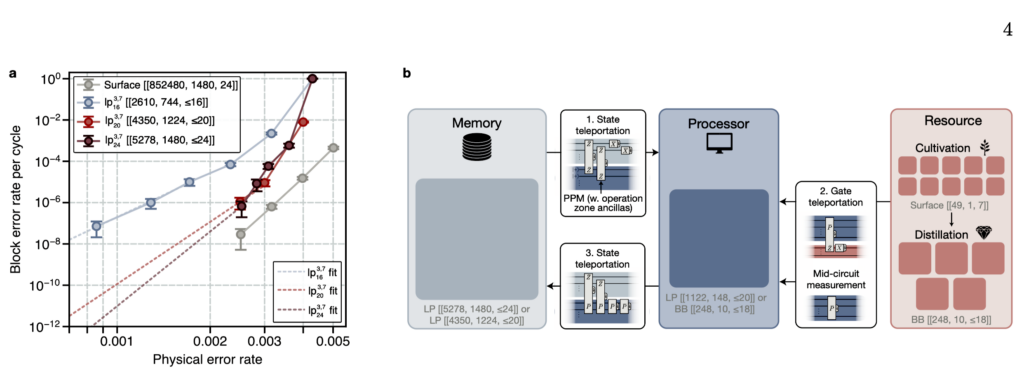

Logical code performance and architecture. a, Block error rates per cycle for several lifted product codes and surface codes. Least-squares power law fits (dashed lines) are used to extrapolate to lower physical error rates $p$ which could not be numerically simulated. The blue fit is of the form $y = a x^{b}$, where $a = 14.6 \pm 0.7$ and $b = 7.1 \pm 0.4$ are fitted parameters using data from the three smallest physical error rates. Using this same procedure, the fitted values of $b$ for lp3,720 and lp3,724 are larger than $d/2$, the theoretical maximum value as $p \to 0$. To be conservative, we therefore fit the form $y = a x^{d/2}$ from the smallest physical error rate (red and purple). b, Layout and compilation procedure for the logical architecture. The memory block stores quantum information, which is then teleported to the processor for computation. Sequential PPMs execute mid-circuit measurements and gate teleportation of magic states. Finally, the logical information is teleported back into the memory. Here, $P$ denotes an arbitrary logical Pauli operator on the processor code.

Credit: Cain et al.
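
The power-law extrapolation described in the caption can be illustrated with a short Python sketch. It fits y = a * x^b by ordinary least squares on the logarithms of the data, which is one common way to do such a fit and not necessarily the procedure the authors used. The three sample x values are invented, the y values are generated from the caption's a and b only to check that the fit recovers them, and treating x as the raw physical error rate is an assumption.

```python
import math


def fit_power_law(xs, ys):
    """Least-squares fit of y = a * x**b as a straight line in log-log space."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(lx)
    mean_x = sum(lx) / n
    mean_y = sum(ly) / n
    sxx = sum((u - mean_x) ** 2 for u in lx)
    sxy = sum((u - mean_x) * (v - mean_y) for u, v in zip(lx, ly))
    b = sxy / sxx                        # slope of the log-log line
    a = math.exp(mean_y - b * mean_x)    # intercept, mapped back from log space
    return a, b


# Synthetic data: invented error rates, y values from a known power law.
TRUE_A, TRUE_B = 14.6, 7.1
xs = [0.002, 0.003, 0.004]
ys = [TRUE_A * x ** TRUE_B for x in xs]

a, b = fit_power_law(xs, ys)
# Extrapolate to an error rate below the sampled range.
extrapolated = a * 0.001 ** b
print(f"a = {a:.3f}, b = {b:.3f}, extrapolated y at x = 0.001: {extrapolated:.3e}")
```

Because the exponent is large, small changes in the fitted b move the extrapolated value by orders of magnitude, which is why the caption's choice to cap the exponent at d/2 is the conservative one.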


“While substantial work is needed to integrate these advances into a complete apparatus and scale system sizes to the required levels, our analysis indicates that appropriately designed neutral-atom architectures could support cryptographically relevant implementations of Shor’s algorithm,” the researchers wrote. “This finding underscores the importance of ongoing efforts to transition widely deployed cryptographic systems to post-quantum standards designed to be secure against quantum attacks.”

Google is looking out for the crypto bros

A separate paper released by Google researchers also shows progress in using Shor’s algorithm to break ECC-256, specifically over secp256k1, the elliptic curve that forms the backbone of bitcoin and other blockchain cryptography. The researchers said they have devised improvements to Shor’s algorithm that make it possible to crack the public key in a bitcoin address in under 10 minutes with resources that are 20 times smaller than those achieved in 2003 research.
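
Cracking a public key in this setting means recovering the secret number k from a public point Q = kG, which is the elliptic-curve discrete logarithm problem. The Python sketch below shows the problem on a toy curve with the same shape as secp256k1 (y^2 = x^3 + 7) but over the tiny field of integers mod 17, where brute force finishes instantly. The field size, the base point (15, 13), the secret value, and all function names are assumptions for illustration. Nothing here resembles the quantum attack itself.

```python
P_MOD = 17      # toy field prime; secp256k1 uses a 256-bit prime
A, B = 0, 7     # curve y^2 = x^3 + A*x + B, same shape as secp256k1
G = (15, 13)    # illustrative base point on the toy curve


def on_curve(pt):
    """Check the curve equation; None stands for the point at infinity."""
    if pt is None:
        return True
    x, y = pt
    return (y * y - (x * x * x + A * x + B)) % P_MOD == 0


def add(p1, p2):
    """Add two curve points using the standard chord-and-tangent rules."""
    if p1 is None:
        return p2
    if p2 is None:
        return p1
    x1, y1 = p1
    x2, y2 = p2
    if x1 == x2 and (y1 + y2) % P_MOD == 0:
        return None  # a point plus its negative gives infinity
    if p1 == p2:
        slope = (3 * x1 * x1 + A) * pow((2 * y1) % P_MOD, -1, P_MOD)
    else:
        slope = (y2 - y1) * pow((x2 - x1) % P_MOD, -1, P_MOD)
    slope %= P_MOD
    x3 = (slope * slope - x1 - x2) % P_MOD
    y3 = (slope * (x1 - x3) - y1) % P_MOD
    return (x3, y3)


def scalar_mult(k, pt):
    """Compute k * pt with double-and-add."""
    result, addend = None, pt
    while k > 0:
        if k & 1:
            result = add(result, addend)
        addend = add(addend, addend)
        k >>= 1
    return result


def brute_force_dlog(target, base, max_steps=2 * P_MOD + 2):
    """Try k = 1, 2, 3, ... until k * base equals target."""
    acc = None
    for k in range(1, max_steps + 1):
        acc = add(acc, base)
        if acc == target:
            return k
    return None


secret = 11                       # hypothetical private key
public = scalar_mult(secret, G)   # the matching public point
recovered = brute_force_dlog(public, G)
print("public point:", public, "recovered scalar:", recovered)
```

The loop takes a handful of steps here. On a 256-bit curve the same search space is astronomically large, which is why the classical problem is considered hard and why an efficient quantum circuit for it matters.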

Specifically, Google said it has compiled two quantum circuits that solve the elliptic-curve discrete logarithm problem. One requires fewer than 1,200 logical qubits and 90 million Toffoli gates, and the other needs fewer than 1,450 logical qubits and 70 million Toffoli gates. A logical qubit is a fault-tolerant qubit that’s encoded using hundreds (or thousands) of physical qubits. The researchers estimate their machine needs roughly 500,000 physical qubits, half of what the same team estimated last June was needed to break 2048-bit RSA, which has a much larger key size. A Toffoli gate is a resource-intensive operation that’s a key driver in the amount of time required to complete an algorithm.
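
The figures above lend themselves to some back-of-the-envelope arithmetic, sketched here in Python. The circuit labels are made up for readability, and two simplifications are assumptions: that all of the roughly 500,000 physical qubits are spread evenly across the logical qubits (in practice some would go to other tasks), and that either circuit runs within the nine-minute window cited earlier in this article.

```python
PHYSICAL_QUBITS = 500_000    # rough estimate reported by the researchers
RUNTIME_SECONDS = 9 * 60     # assumption: the "less than nine minutes" bound

# Hypothetical labels for the two compiled circuits described above.
CIRCUITS = {
    "fewer-qubits": {"logical_qubits": 1_200, "toffoli_gates": 90_000_000},
    "fewer-gates": {"logical_qubits": 1_450, "toffoli_gates": 70_000_000},
}


def summarize(circuit):
    """Return (physical qubits per logical qubit, Toffoli gates per second)."""
    per_logical = PHYSICAL_QUBITS / circuit["logical_qubits"]
    toffoli_rate = circuit["toffoli_gates"] / RUNTIME_SECONDS
    return per_logical, toffoli_rate


for name, circuit in CIRCUITS.items():
    per_logical, toffoli_rate = summarize(circuit)
    print(f"{name}: ~{per_logical:.0f} physical per logical qubit, "
          f"~{toffoli_rate:,.0f} Toffoli gates per second")
```

Under these assumptions each logical qubit is backed by a few hundred physical qubits, in line with the "hundreds (or thousands)" range, and the machine would need to sustain more than 100,000 Toffoli gates per second.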

In a move that’s turning heads in security circles, Google isn’t releasing the algorithmic improvements that make this achievement possible. Instead, the researchers released a zero-knowledge proof that mathematically proves the existence of the algorithmic enhancement without disclosing it.

“The escalating risk that detailed cryptanalytic blueprints could be weaponized by adversarial actors necessitates a shift in disclosure practices,” the authors explained. “Accordingly, we believe it is now a matter of public responsibility to share refined resource estimates while withholding the precise mechanics of the underlying attacks.” The researchers, who said they consulted with the US government in forging the new policy, went on to say that “progress in quantum computing has reached the stage where it is prudent to stop publishing details of improved quantum cryptanalysis to avoid misuse.”

The move, recently proposed by influential researcher Scott Aaronson, is a complete turnaround from the strict 90-day disclosure policies Google’s Project Zero pioneered two decades ago and an accepted norm that has driven security research for even longer. Other researchers are already criticizing the lack of details.

“I think it’s alarmist to claim an immediate security risk from an algorithm that requires a computer that doesn’t exist,” Matt Green, a professor at Johns Hopkins University who studies cryptography, said. “Given that the stakes here are so low (for the same reason) I’d classify it as less harmful, and more on the hype side. I think it’s more of a PR trick than a serious concern anyone has.”

Google is also facing scrutiny for focusing on the harm CRQC poses to cryptocurrencies—an obsession of vocal influencers and the current White House—rather than on TLS implementations, DocuSign signatures, digital certificates, or any other number of more general applications that affect larger populations of people.

“While CRQCs certainly do pose a threat to blockchain-based technologies based on classical ECC algorithms, they are just one of many systems in our modern world that need to transition quickly to PQC,” LaMacchia said, referring to post-quantum cryptography. “Especially when reading some of the policy proposals at the end of the white paper, I am just dumbfounded that Google is focused on policy frameworks for solving problems that seem unique to the cryptocurrency space (e.g., salvaged digital assets) and not the general threat that CRQC pose to all our systems that use public-key cryptography.”

Dan Goodin is Senior Security Editor at Ars Technica, where he oversees coverage of malware, computer espionage, botnets, hardware hacking, encryption, and passwords. In his spare time, he enjoys gardening, cooking, and following the independent music scene. Dan is based in San Francisco. Follow him on Mastodon and on Bluesky. Contact him on Signal at DanArs.82.