2023 study made a lot of assumptions about future “anticipated LLM-powered software.”

Is AI poised to crush the job market like a giant robot hand crushing a cubicle worker? Credit: Getty Images

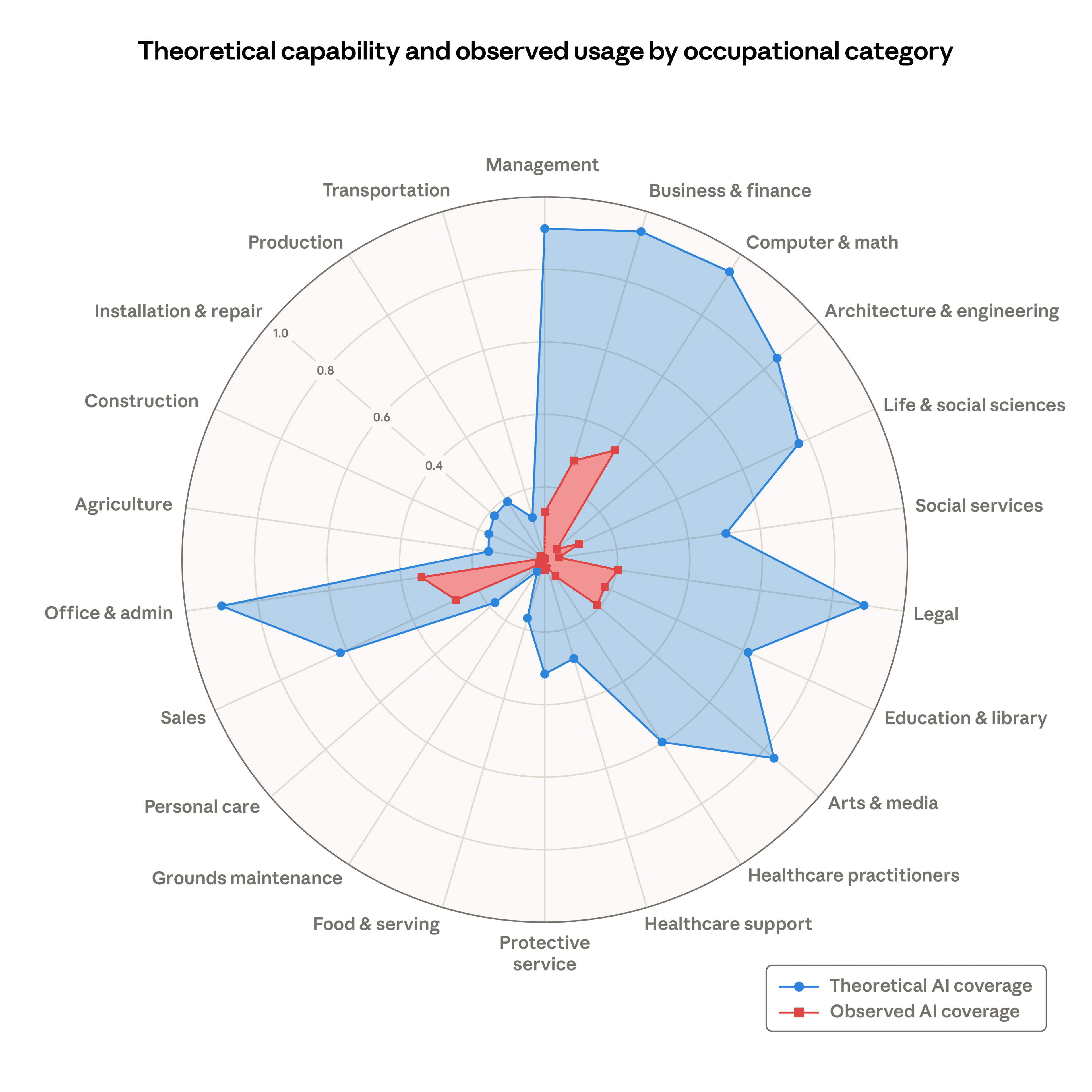

If you follow the ongoing debate over AI’s growing economic impact, you may have seen the graphic below floating around this month. It comes from an Anthropic report on the labor market impacts of AI and is meant to compare the current “observed exposure” of occupations to LLMs (in red) to the “theoretical capability” of those same LLMs (in blue) across 22 job categories.

While the current “observed exposure” area is interesting in its own right, it’s the blue “theoretical capability” that jumps out. At a glance, the graph implies that LLM-based systems could perform at least 80 percent of the individual “job tasks” across a shockingly wide range of human occupations, at least theoretically. It looks like Anthropic is predicting that LLMs will eventually be able to do the vast majority of jobs in broad categories ranging from “Arts & Media” and “Office & Admin” to “Legal, Business & Finance,” and even “Management.”

That “theoretical AI coverage” area seems like it’s destined to eat a huge swath of the US job market! Credit: Anthropic

Digging into the basis for those “theoretical capability” numbers, though, provides a much less chilling image of AI’s future occupational impacts. When you drill down into the specifics, that blue field represents some outdated and heavily speculative educated guesses about where AI is likely to improve human productivity and not necessarily where it will take over for humans altogether.

The best AI 2023 can buy

The LLM “theoretical capability” baseline Anthropic cites here isn’t based on the company’s own empirical testing of its current models or quantifiable projections of performance increases over time. Instead, Anthropic cites an August 2023 report titled “GPTs are GPTs: An Early Look at the Labor Market Impact Potential of Large Language Models” co-authored by researchers at OpenAI, OpenResearch, and the University of Pennsylvania.

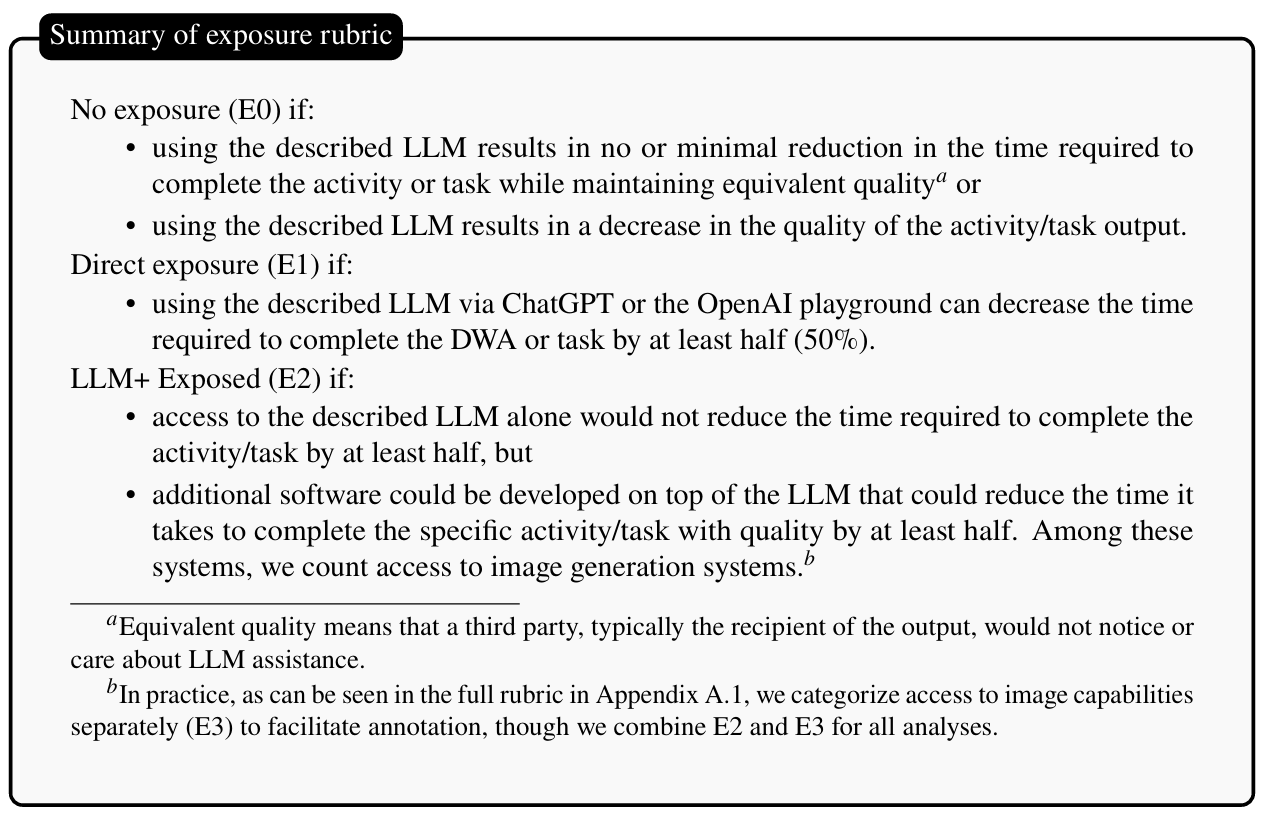

The researchers start with O*NET’s Detailed Work Activity reports, which break down the individual tasks involved in many jobs at an extremely granular level. They then use a mixture of human annotation and GPT-4-assisted labeling to judge whether “the most powerful OpenAI large language model” at the time could reduce the time needed for that individual task by at least 50 percent “with equivalent quality.” If not, they also judged whether access to “anticipated LLM-powered software” might achieve a similar time savings in the future.

Crucially, the humans consulted for this labeling weren’t the ones who actually perform these jobs, or even those familiar with them. Instead, they were people familiar with the state of the art in AI in 2023, being asked to make broad guesses about where LLMs and future LLM-powered software would be most useful.

The rubric researchers used to make their educated guesses about AI’s job impacts. The broadest impacts were seen in E2, which assumes future “additional software” developed atop LLMs. Credit: Eloundou et al

The researchers acknowledge that since the human annotators were “mostly unaware of the specific occupations” being evaluated, the “subjectivity of the labeling” forms “a fundamental limitation of our approach.” The results of that labeling show what the researchers call an “unclear logic for aggregating tasks and occupations, as well as some evident discrepancies in labels.” Those are some pretty big caveats for the creation of an objective-looking measure of AI’s occupational impacts.

Digging into the detailed rubric used by the researchers, we can also see the kinds of assumptions they made about occupations that could have the most “direct exposure” to LLMs at the time. That rubric provides many handy examples of the kinds of tasks that LLMs could perform, including:

- Writing and transforming text and code according to complex instructions

- Providing edits to existing text or code following specifications

- Writing code that can help perform a task that used to be done by hand

- Translating text between languages

- Summarizing medium-length documents

- Providing feedback on documents

- Answering questions about a document

- Generating questions a user might want to ask about a document

All in all, this isn’t a bad list of the kinds of tasks LLMs were best at in 2023. But just because an LLM could perform these tasks to some degree doesn’t necessarily mean it could do so in a way that “can reduce the time it takes to complete the task with equivalent quality by at least half.”

Keep in mind, for instance, that a 2025 study found that open source coders using AI were 19 percent slower than those not using AI once the time spent writing prompts and reviewing generated code was taken into account. Also, keep in mind LLMs’ well-known penchant for hallucination and sycophancy before assuming that their output would be “of equivalent quality” to a human’s.

The promise of “anticipated LLM-powered software”

Even with this generous reading of 2023-era LLMs’ job-related capabilities, the researchers estimated that only about 15 percent of all job-related tasks could be made at least 50 percent more efficient by LLMs at the time. All told, only about 2.3 percent of occupations saw at least 50 percent of their O*NET tasks “exposed” to LLMs of the time in this way.
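To make those two figures concrete, here is a minimal Python sketch of how task-level labels can roll up into a share of exposed tasks and a share of occupations that are at least half exposed. The occupations and labels are invented for illustration and are not drawn from the paper's data. The sketch is also unweighted, which may differ from how the researchers actually aggregated their results. Only the 50 percent threshold comes from the study as described above.

```python
# Hypothetical task labels: True means the task was judged "exposed"
# (an LLM could cut its completion time by at least half at equivalent quality).
# These occupations and labels are made up for illustration only.
task_labels = {
    "translator": [True, True, True, False],
    "paralegal": [True, False, False, False],
    "electrician": [False, False, False, False],
}


def exposed_share(labels):
    """Fraction of one occupation's tasks labeled as exposed."""
    return sum(labels) / len(labels)


def share_of_all_tasks(occupations):
    """Fraction of all tasks, pooled across occupations, labeled as exposed."""
    all_labels = [label for labels in occupations.values() for label in labels]
    return sum(all_labels) / len(all_labels)


def share_of_occupations_over(occupations, threshold=0.5):
    """Fraction of occupations with at least `threshold` of their tasks exposed."""
    over = [name for name, labels in occupations.items()
            if exposed_share(labels) >= threshold]
    return len(over) / len(occupations)


task_share = share_of_all_tasks(task_labels)
occupation_share = share_of_occupations_over(task_labels)
print(f"Tasks exposed: {task_share:.0%}")
print(f"Occupations at least half exposed: {occupation_share:.0%}")
```

The two measures answer different questions. Exposed tasks that are spread thinly across many jobs can add up to a noticeable share of all tasks while leaving very few occupations over the halfway mark, which is one way a gap like the one between the 15 percent and 2.3 percent figures can arise.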

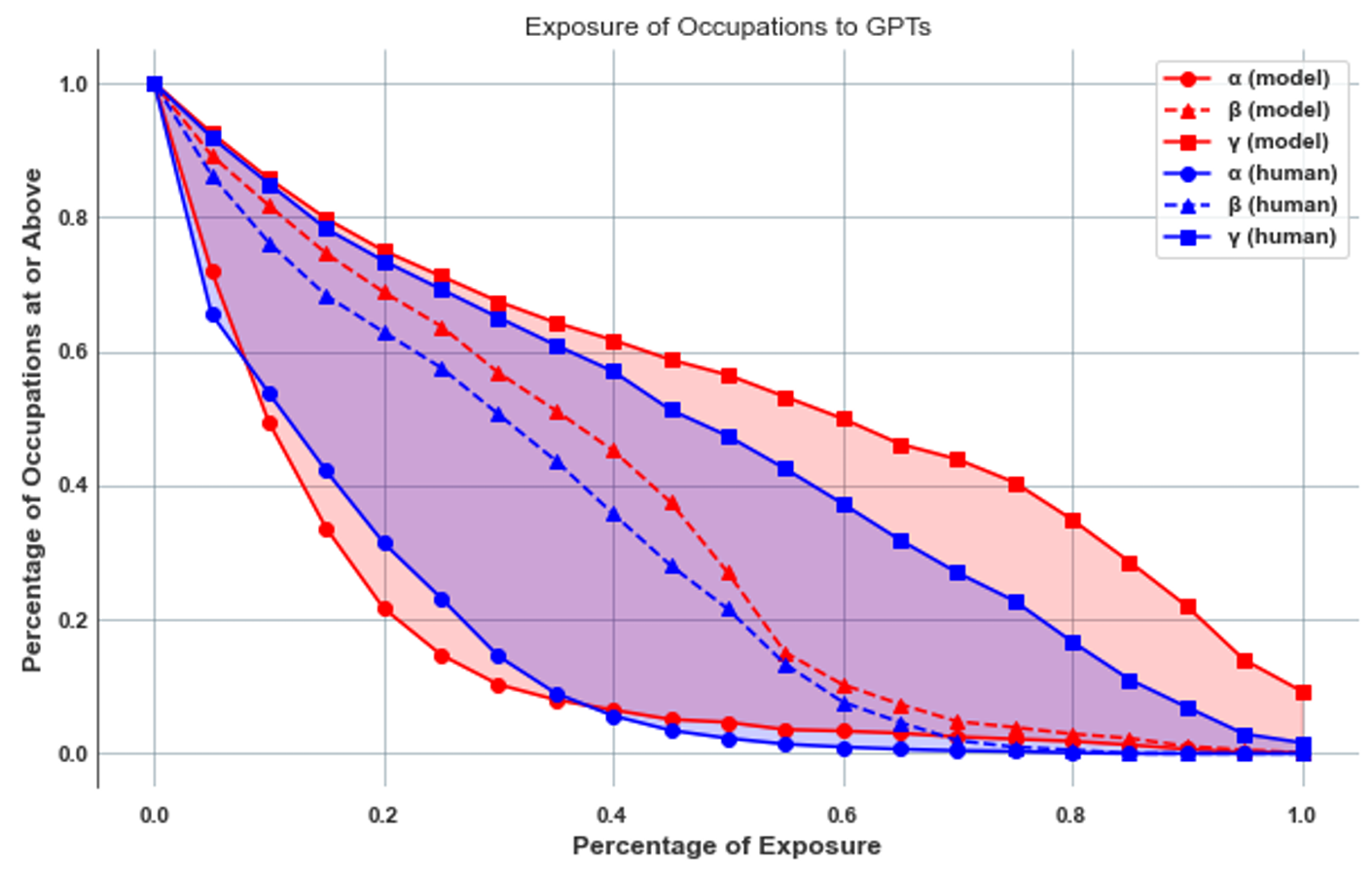

To get to the scarier numbers shown in the chart from the beginning of this story, the researchers had to start projecting the impact of “anticipated LLM-powered software” on various jobs.

Think back for a second to the state of the AI industry in August 2023, in the months following the release of OpenAI’s GPT-4 model. That period might mark something of a high point for AI hype. Earlier that year, Elon Musk and others were calling for a six-month pause in AI development out of fears that we “risk loss of control of our civilization,” and Eliezer Yudkowsky was warning that we should be willing to “destroy a rogue datacenter by airstrike” if a superhuman AI entity threatened all life on Earth. Geoffrey Hinton was quitting Google so he could speak out about fears that AI “could actually get smarter than people” and “become impossible to control.” And high-profile workplace consequences of AI hallucinations were just beginning to gain widespread attention.

This was the environment in which AI experts were being asked to project the future job-altering capabilities of LLM-powered software.

The 𝛽 and 𝜁 lines here assume much larger potential LLM impacts on jobs by incorporating forward-looking projections for “anticipated LLM-powered software.” Credit: Eloundou et al

Importantly, the researchers didn’t even set a self-imposed deadline for when these effects would be seen in future software. “We do not make predictions about the development or adoption timeline of such LLMs,” the researchers write, creating an essentially unbounded horizon that limits the predictive power of this kind of projection.

Digging into some of the examples shows how much the labelers are assuming about LLM capabilities going forward, too. For instance, the researchers predict that negotiating purchases or contracts could be impacted by LLMs because “you could have each party transcribe their point of view and then feed this to an LLM to resolve any disputes.” While some people might use LLMs in this way at some point, even the researchers blithely admit that “many people would need to buy into using new technological tools to accomplish this.”

It’s these forward-looking assumptions about LLM-powered software that generate the more eye-popping “theoretical capability” numbers, such as those cited by Anthropic. By the most generous read of this measure, the researchers predict that “between 47 and 56 percent of all tasks” will eventually be made at least 50 percent faster by LLMs and that 19 percent of all workers “are in an occupation where over half of its tasks are labeled as exposed.” That expands to 100 percent of all job-related tasks for some “fully exposed” occupations, including “mathematicians,” “writers and authors,” and “web and digital interface designers,” according to the researchers.
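A rough sketch of the arithmetic shows how much the totals depend on the speculative labels. It assumes the paper's three summary measures work as follows: α counts only tasks with direct exposure (labeled E1), 𝛽 adds tasks that need anticipated LLM-powered software (labeled E2) at half weight, and 𝜁 adds those E2 tasks at full weight. That weighting is an assumption stated here for illustration, and the task labels below are hypothetical, not real data from the study.

```python
from collections import Counter

# Hypothetical labels for one occupation's tasks (not real data):
# E0 = no exposure, E1 = direct LLM exposure,
# E2 = exposure only with anticipated LLM-powered software.
labels = ["E1", "E2", "E2", "E2", "E0", "E0", "E2", "E1", "E0", "E2"]


def exposure_measures(task_labels):
    """Return (alpha, beta, zeta) task shares under the assumed weighting."""
    counts = Counter(task_labels)
    total = len(task_labels)
    e1 = counts["E1"] / total
    e2 = counts["E2"] / total
    alpha = e1             # direct exposure only
    beta = e1 + 0.5 * e2   # anticipated software counted at half weight
    zeta = e1 + e2         # anticipated software counted in full
    return alpha, beta, zeta


alpha, beta, zeta = exposure_measures(labels)
print(f"alpha={alpha:.2f} beta={beta:.2f} zeta={zeta:.2f}")
```

In this made-up example, the same ten tasks read as 20 percent exposed, 45 percent exposed, or 70 percent exposed, depending entirely on how much credit the not-yet-built software receives.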

I guess we’ll find out

Even here, though, it’s important to note that the researchers are not suggesting LLMs will be able to replace humans or work unassisted at these tasks. Using LLM-powered software to speed up a human job task is not the same as wholly replacing human labor with that same software.

Sometimes the researchers even make the continued need for human labor explicit. When it comes to prescribing medications, for instance, the researchers note that “the model can provide guesses for different diagnoses and write prescriptions and case notes. However, it still requires a human in the loop using their judgment and knowledge to make the final decision.” The researchers also explicitly note they are performing their analysis “without distinguishing between labor-augmenting or labor-displacing effects.”

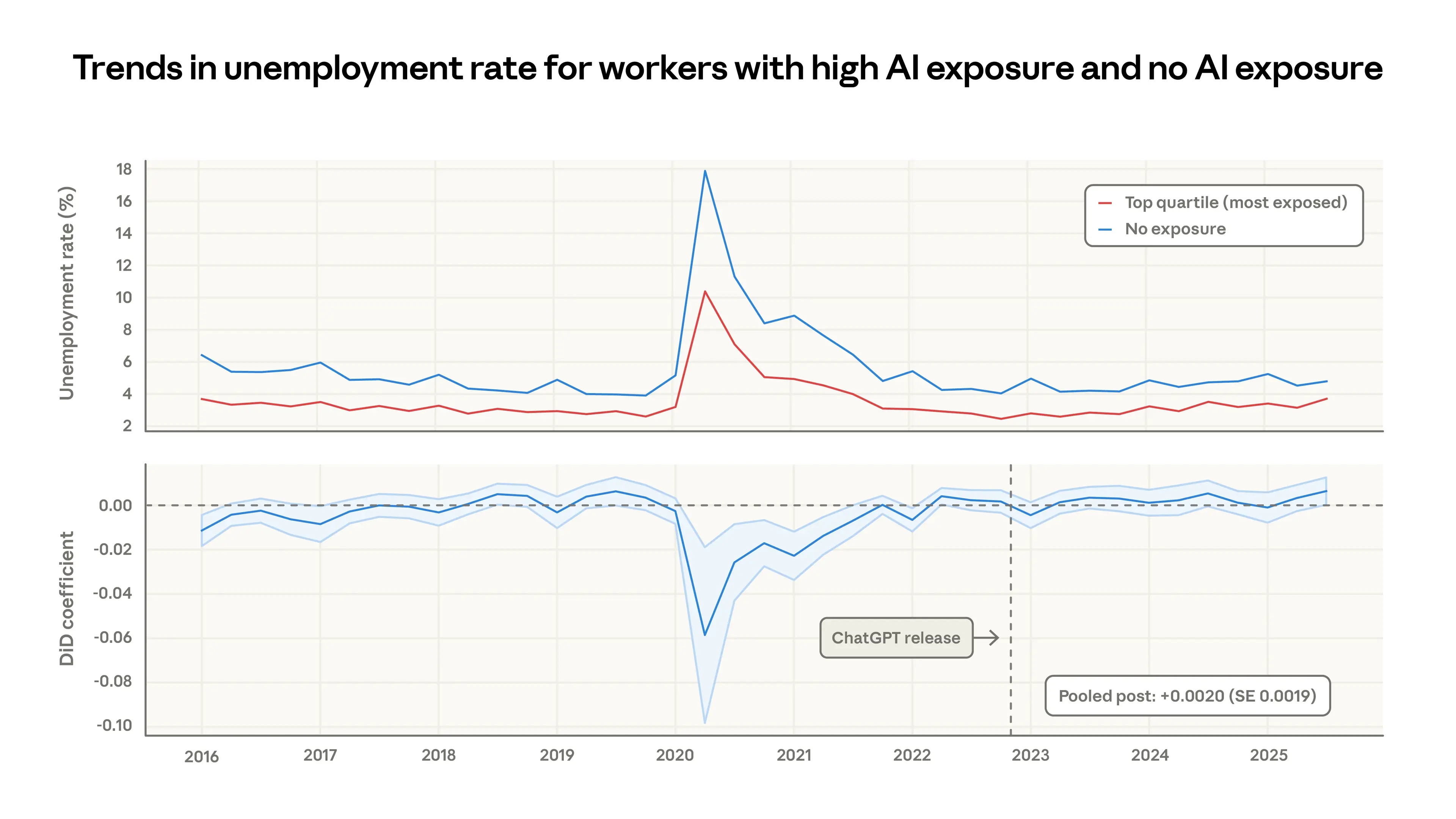

Thus far, Anthropic hasn’t found any obvious job market impacts in fields more exposed to current AI use. Credit: Anthropic

When looking at current unemployment statistics, Anthropic says it hasn’t seen any difference in impact between the jobs most exposed to current LLM use and those least exposed. But Anthropic also warns that AI’s job impacts could be slow to show up in the job data (much like the impacts of Chinese manufacturing or the Internet) and could be hard to distinguish from ordinary business cycle fluctuations.

In any case, Anthropic says that while the current AI use it observes does correlate somewhat with these 2023-era projections, current usage “is far from reaching its theoretical capability: actual coverage remains a fraction of what’s feasible.” But that “feasible” capability is, at this point, based on outdated guesses that even the original researchers admit are highly limited in their usefulness.

“Accurately predicting future LLM applications remains a significant challenge, even for experts,” they wrote at the time. “Some tasks that seem unlikely for LLMs or LLM-powered software to impact today might change with the introduction of new model capabilities. Conversely, tasks that appear exposed might face unforeseen challenges limiting language model applications.”

Kyle Orland has been the Senior Gaming Editor at Ars Technica since 2012, writing primarily about the business, tech, and culture behind video games. He has journalism and computer science degrees from University of Maryland. He once wrote a whole book about Minesweeper.