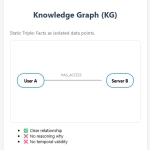

Knowledge Graphs and their limitations: With the rapid growth of AI applications, Knowledge Graphs (KGs) have emerged as a foundational […]

Category: AI Career

AI Interview Series #5: Prompt Caching

Question: Imagine your company’s LLM API costs suddenly doubled last month. A deeper analysis shows that while user inputs look […]

AI Interview Series #4: Explain KV Caching

Question: You’re deploying an LLM in production. Generating the first few tokens is fast, but as the sequence grows, each […]

AI Interview Series #4: Transformers vs Mixture of Experts (MoE)

Question: MoE models contain far more parameters than Transformers, yet they can run faster at inference. How is that possible? […]

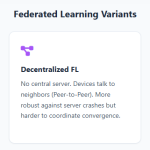

AI Interview Series #3: Explain Federated Learning

Question: “You’re an ML engineer at a fitness company like Fitbit or Apple Health. Millions of users generate sensitive sensor […]

AI Interview Series #1: Explain Some LLM Text Generation Strategies Used in LLMs

Every time you prompt an LLM, it doesn’t generate a complete answer all at once — it builds the response […]