Google’s very own walled garden

Questions remain as Google prepares to lock down Android app distribution in the name of security.

Google plans to begin policing all Android apps later this year. Credit: Aurich Lawson | Getty Images

It’s been nearly 20 years since Google revealed Android, which the company described as the first “truly open” mobile operating system, setting Google-powered phones apart from the iPhone’s aggressively managed experience. Over time, though, Android has become more aligned with Apple’s approach. For the moment, users still have the final say in what software runs on their increasingly locked-down smartphones. Later this year, though, Google plans to seriously curtail that freedom in the name of security.

In the coming weeks, Google will officially debut Android developer verification, which will require app makers outside the Play Store to register with their real names and pay a fee to Google. Failure to do so will block their apps from installation (sometimes called sideloading) on virtually all Android devices. Google says this is a necessary evolution of the platform’s security model, but upending the status quo could push developers away from Android and risk the privacy of those that remain.

This might make your phone a little safer, sure, but it won’t stop people from getting scammed. At the same time, it could rob the Android ecosystem of what made it special in the first place.

Tilting at windmills

Google’s Play Store (once the Android Market) has undergone much more than a name change over the years. There were virtually no rules in the early days, allowing developers to publish apps that tinkered with undocumented system features, infringed on copyrights, and leveraged exploits to gain root access. Today, Google has numerous security layers that detect and remove malware, and that has undeniably made Android safer. Developer verification could continue to make your phone safer, too, according to Christoph Hebeisen, director of security intelligence research at Lookout.

While there are still malware scares in the Play Store, Google is doing something right. Hebeisen says there’s far less malware in Google Play than outside of it, and the protections are so good that threat actors often don’t bother trying to distribute malware through Google’s platform. It’s just not worth the time when most of their apps will get instantly flagged.

As Google barrels toward mandatory registration for all Android developers, it is talking up how effective these other measures have been. Google says Play Protect, the anti-malware feature built into all Google-certified Android devices, scans 350 billion Android apps every day as of early 2026—both those downloaded from the Play Store and sideloaded apps.

Hebeisen explained to Ars that Play Protect targets a different part of the process than developer verification. Play Protect can flag and remove individual apps, which is less effective than blocking an entire developer profile, and it can be disabled. Google has suggested that people are often “coached” by threat actors to disable features like Play Protect, pointing to the need for stricter controls. Not everyone buys that, though.

“These scenarios seem really implausible to me, but [Google has] not revealed any specific numbers about how many people are affected by this,” said Marc Prud’hommeaux, a board member of the popular F-Droid free and open source software storefront. “They only quote very vague statistics that say there’s 50 times as much malware outside the Play Store than there is inside the Play Store.”

But why is Google suddenly so interested in forcing these particular reforms on non-Google developers? Hebeisen suggests there’s a bit of Apple envy happening.

“I think Google probably looked at Apple and wondered ‘why has it worked for them?’” said Hebeisen. “Because from a technical perspective, there isn’t a fundamental security difference. Why has there been more malware reporting around Android than for iOS? And I think they have come around to the conclusion that the developer ecosystem and the ability to actually get an app distributed and installed makes a big difference.”

Over time, Google has made numerous technical changes to the Android system aimed at reducing the spread of malware. It has implemented granular runtime permissions, made incremental security patches mandatory for new devices, added malware scanning to all certified devices, and yes, made it harder to sideload apps from unknown sources. Android is vastly more secure than it once was, but there are limits.

“You can only do so many things at a technical level, so you have to clamp down on the developers,” said Hebeisen, who admits there are some negative consequences to such actions. “Android has always been the friendly and open system where you could do anything you wanted to, and that is somewhat limited [with developer verification], obviously, because you now have a mandatory registration if you want to distribute an app.”

The supposed upshot of verification is that when Google detects an app involved in malicious activity, it can take out the trash faster. According to Google, it’s only interested in removing apps that cause “a high degree of harm,” which is generally described as malware in Google documentation, and this is “the same bar.” The company has declined to offer more details on its definition of harmful apps to Ars. Still, the definition of malware is fuzzy, and even Google’s own partners can disagree on what counts.

“We are part of the App Defense Alliance ecosystem, where Google sends us every app that goes into Google Play before it gets published,” said Hebeisen. “We run our analysis on it and send it back to them. They don’t always take our word for it. There are a good number of apps that will get published, although we consider them risky.”

The opposite is also true, though. “There are apps that we consider benign and Google doesn’t,” explained Hebeisen. “That’s mostly like terms of service violations and stuff like that won’t directly affect the users. So there isn’t 100 percent agreement.”

The end result is that developer verification gives Google the tools to banish apps, and Google gets to decide what will count as a high degree of harm in the future. The F-Droid team has already seen shifting standards around the world, and that worries them.

“They say, ‘Oh, we want to stop malware,’ and that sounds all well and good, but show me your definition and demonstrate that this definition is going to be agreed upon by an independent consensus of security experts and the community,” said F-Droid’s Marc Prud’hommeaux. “They don’t do that. They just say malware’s whatever we say it is, and when tomorrow they say, ‘VPNs are malware,’ then say goodbye to VPNs.”

Even if Google’s claims are true, developer verification won’t stop people from being scammed. An attacker targeting Android doesn’t even necessarily need to get the user to install malware—whatever that is—to scam them. The same false sense of urgency that can convince people to sideload a shady app (e.g., “your Facebook account is about to be locked forever!”) can be used to get them to install a perfectly legitimate remote support app from the Play Store and give the fraudster full access.

There is a difference between solving a problem for users and solving it for Google. In this case, developer verification could help shift the blame for mobile security woes away from Google.

“The threat actors are not going to go away,” said Hebeisen. “They are going to go somewhere else, and they are going to come up with new innovative methods to scam people, and they’ll be successful there as well.”

Google calling the shots

With the Play Store’s glut of AI slop and in-app purchase factories, it’s easy to forget that non-commercial software is still an important part of the Android ecosystem. Open source projects provide vital tools for a lot of people, and many of them are distributed outside of Google’s platform for a variety of reasons—not least of which is that some people just don’t trust Google.

Online anonymity used to be the default, but simply accessing resources and services is becoming increasingly officious. Platforms are demanding face scans and IDs in the name of protecting the young and vulnerable, but these tactics also force people to attach their real-world existence to what they do and produce online. In the case of developer verification, this situation could stymie the open source innovation that has made Android what it is today.

The Guardian Project, founded in 2009 to support the development of open source apps around the world, has been around almost as long as Android. According to founder Nathan Freitas, there are plenty of developers doing important work who don’t want to get in bed with Google. The organization aims to empower the wider Android ecosystem by helping those developers reach users.

“Our goal with Guardian Project is to support regular people because everyone is potentially an activist. Everyone is potentially a citizen journalist. Everyone is an eyewitness,” said Freitas. “We really want to move away from you having to be sophisticated, technically, to have privacy.”

Reliance on Google’s cloud will be a core element of developer verification. Google is creating a database of developers, but only some of that data will be cached on devices. For many app installs, your phone will need to reach out to Google’s servers to verify an APK, which effectively prevents installing apps while offline. That’s a real problem for alternative app distribution models like, for example, the Guardian Project’s ButterBox, a solar-powered microserver that can provide off-grid access to encrypted chat, maps, file sharing, and other important tools. This project, built in collaboration with the F-Droid team, is essentially incompatible with developer verification.
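To see why offline installs break under this design, consider a minimal Python sketch of a cache-then-server check. This is an illustration only: Google has not published how the check is implemented, and every name here (`Verifier`, `remote_lookup`, the fingerprint strings) is hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Callable, Dict, Optional


class Verdict(Enum):
    ALLOW = "allow"
    BLOCK = "block"


@dataclass
class Verifier:
    # Hypothetical on-device cache: developer key fingerprint -> verified or not.
    cache: Dict[str, bool] = field(default_factory=dict)
    # Hypothetical server lookup; None (or a None result) stands in for "offline".
    remote_lookup: Optional[Callable[[str], Optional[bool]]] = None

    def check(self, fingerprint: str) -> Verdict:
        # 1. Answer from the local cache when the developer is already known.
        if fingerprint in self.cache:
            return Verdict.ALLOW if self.cache[fingerprint] else Verdict.BLOCK
        # 2. Otherwise ask the server, if one is reachable.
        result = self.remote_lookup(fingerprint) if self.remote_lookup else None
        if result is None:
            # 3. Not cached and no connectivity: the install cannot be verified.
            return Verdict.BLOCK
        self.cache[fingerprint] = result
        return Verdict.ALLOW if result else Verdict.BLOCK


# An offline device can only install apps from developers it has cached.
offline = Verifier(cache={"known-dev": True})
print(offline.check("known-dev"))    # served from the cache
print(offline.check("butterbox-dev"))  # uncached and offline
```

In this model, a device served by something like ButterBox has no path to step 2, so any developer missing from the cache is blocked regardless of whether they registered.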

“In their quest to make everything better, they’ve made the process more onerous,” Freitas said. “This is such a common issue of the mental model of these big tech companies… like you’re driving your Tesla down the 101 in Silicon Valley. That’s the user, you know, someone with 5G and great connectivity all the time.”

Some people may find themselves locked out of Android, even if they have perfect 5G. By requiring developers to register and pay a fee, Google is essentially forming a business relationship with people who might otherwise want nothing to do with the company. Simply due to who a developer is or where they live, their applications could end up blocked on most Android devices.

“There are going to be certain developers who are developing applications that are perfectly legitimate, but because they live in a sanctioned country or they belong to a sanctioned organization, Google is by law not going to be able to let these people register,” noted F-Droid’s Marc Prud’hommeaux. “So that is immediately closing the door to someone who is developing an app, and they happen to be a judge on the International Criminal Court or a resident of Cuba. But that’s it. They cannot convey their work to the world.”

No one knows what Google’s internal deliberations around this change look like—we can only guess at the company’s motivations. That said, even the team at F-Droid, which is publicly opposed to Google’s plans, believes that verifying developer identities will probably slow down traditional malware campaigns. But it also gives Google a lot more power.

“This measure can reduce the amount of malware, but at the same time, it reduces a lot of other legitimate activity,” said F-Droid technical lead Hans-Christoph Steiner. He pointed to ad-blockers and alternative YouTube clients as examples. Early on, Google allowed system-level ad-blockers in its store, but tightening restrictions eventually led to most of those tools being banned. Someone could possibly make an argument that these tools are harmful—to a high degree, even, he said.

“People want those apps for legitimate reasons, and we ship apps like that in a safe way,” said Steiner. “These are all legal things that people want that Google doesn’t want. So when we consistently ship ad-blockers, alternative clients, root kits—all these things that are against [Google’s] terms of service, it seems inevitable that they’re just going to block us.”

Ars Technica has reached out to Google to inquire about developers who are in sanctioned countries and their ability to participate in verification, as well as whether policy-violating apps like alternative YouTube clients will be verifiable. The company has not provided comment as of this publication.

Privacy is a global issue for a global company

Assuming developers comply with Google’s new verification rules, they’ll have to give up personal information, including government IDs and business details. Google’s verification system would see the company retain those details on a global scale, expanding beyond the group of Play Store developers already known to the company. That will expose devs to new legal threats, explained Corynne McSherry, legal director at the Electronic Frontier Foundation.

“The problem with creating this kind of verification program is that it necessarily creates a database,” she said. “That is then going to be vulnerable to subpoenas, warrants, government demand, and sometimes private demands. Sometimes people build apps that are privacy-protecting, that are important for human rights defenders, journalists, and so on. And there are governments who might very much like to know the names of the developers of those applications so that they can go after them.”

Google undeniably has massive global reach—it’s the top search engine by a wide margin in every country other than China and Russia, and Android devices are much more popular than iPhones in most places. That means Google’s policies and privacy protections have to adapt from market to market.

You may think that your nation’s legal protections will keep your data safe, but that’s the problem with being a global brand: There are plenty of places where courts don’t value individual rights.

“We have a tradition in the United States of protections for things like anonymous speech,” said McSherry, while noting that those protections have been weakened recently. “But nonetheless, we have default protections for anonymity that many, many countries do not, and so that’s one assumption that you’re just throwing out the window right away.”

Google’s records would be a very tempting target for governments and corporations that want to track down the developer of an app, even if that person is on the other side of the world. According to McSherry, governments can seek information about developers of apps they find disruptive, even if they reside in another country. Corporate entities could also engage in “forum shopping” by finding a compliant court where they can claim some harm.

“Private parties might just go to the country with the easiest, most hair-trigger judicial process,” McSherry said. “Go there and get a subpoena or some equivalent and also seek your information.”

With that information exposed, developers could be vulnerable to legal repercussions, lawsuits, or increased government surveillance. This is already happening, too. Many developers have chosen to remain anonymous online due to the constantly shifting state of legal requirements. Something that was perfectly fine and legal one day may be heavily restricted the next—take, for example, the laws surrounding messaging encryption in Europe and VPNs in India.

“We work with developers on our team who use pseudonyms 100 percent of the time, even if they are a European citizen or US citizen,” said Nathan Freitas of the Guardian Project. “That’s because of family, because of where they travel, or where they have lived. I made the choice years ago to use my real name and identity. Sometimes I regret it because I can’t travel to India because our work on VPNs is illegal in India.”

Google has said on numerous occasions that it complies with valid legal orders for user information—it has no choice in the matter if it wants to operate in a given country. And it gets a lot of those orders. After remaining relatively stagnant in the 2010s, legal requests for user data have exploded in recent years. In the first half of 2025, the most recently reported period, Google received 664,843 requests targeting 287,027 users. That’s an almost 10-fold increase in the last decade and about 18 percent up (for the number of users) in the first half of 2025 alone.

Expanding the number of developers in Google’s database will only make it a more frequent target of legal demands.

Can the “advanced flow” save us?

The backlash from developers when Google announced its plan last year was swift. The company eventually announced that it would build an “advanced flow” for power users who want to bypass developer verification. For many users, this move was all they needed to stop worrying and trust Google. The nature of that added friction is still unclear, though, and Google doesn’t have much room to maneuver if it still wants to accomplish its stated goal of saving people from malware.

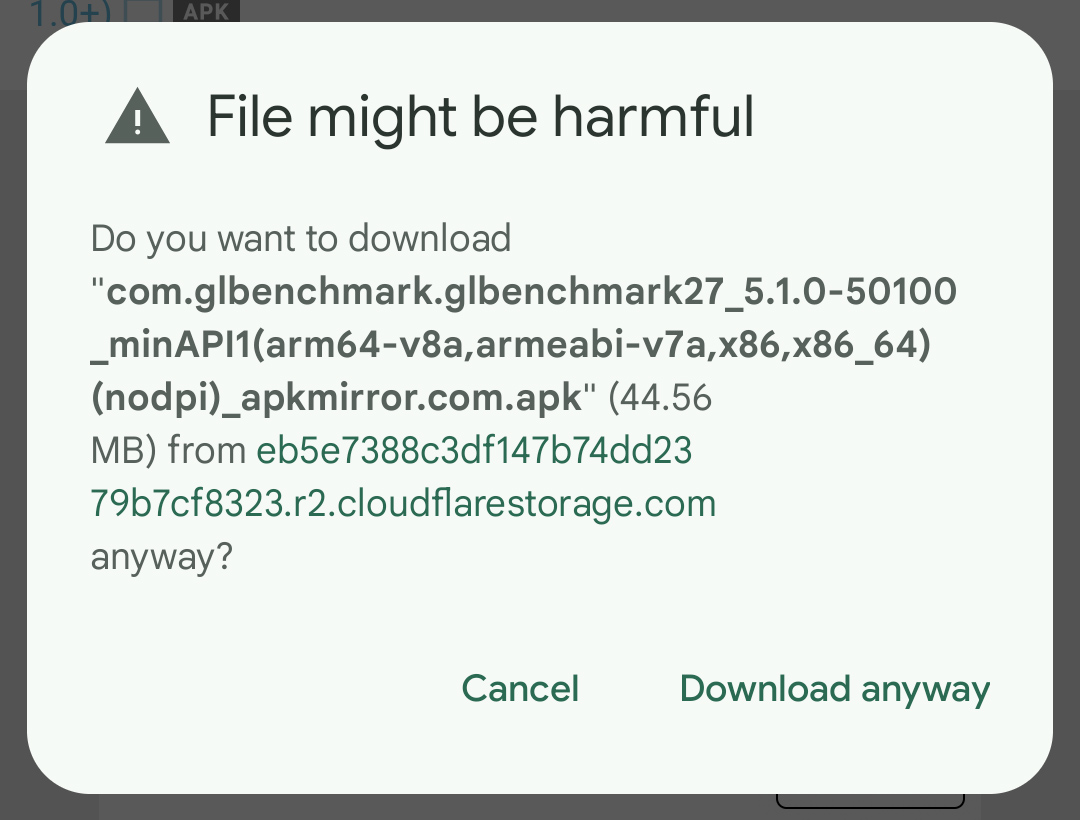

Sideloading apps already involves substantial friction. There’s a warning when you try to download an APK, and a second one appears when you try to open that file. Both the warnings state in no uncertain terms that installing apps downloaded from an unknown source can harm your device. To actually go through with the installation, you have to make a trip to the settings to toggle that option on for the source app (like Chrome). Only then will the installation proceed.

The current security model doesn’t mince words when you try to download an APK.

But will adding more clicks or warnings do anything? For Hebeisen, this seems like a weak point in the plan if Google’s intention is to reduce the spread of malware.

“Obviously, the threat actors have figured out how to social engineer users into allowing apps from third-party sources,” said Hebeisen. “So how much harder does it become to social engineer them through this?”

The team behind the open source F-Droid app store sees many problems with the opacity of Google’s plans. F-Droid’s Prud’hommeaux believes Google’s use of words like “advanced” and “experienced” is a red flag. “F-Droid is not for advanced users, it’s not for experienced users,” he said. “It’s just for users who care about privacy.”

In a recent blog post, F-Droid claimed that Google isn’t even working on this system yet. Google said in November it was seeking “early feedback” on how the advanced flow could resist coercion and properly warn users of the risks. According to Lookout’s Christoph Hebeisen, as of late February 2026, Google had not asked his team about the design of the advanced flow. Meanwhile, Prud’hommeaux told Ars that his conversations with Google representatives suggest the advanced flow won’t be available until 2027, long after verification enforcement begins in September of this year.

It’s starting to look like Google’s pitch for the advanced flow was less a solution and more a “concept of a plan.” We’ve sought comment from Google, but the company has declined to elaborate on its timeline or the nature of the advanced flow at this time.

If Google begins blocking unverified apps before making this option available, it doesn’t have much incentive to make it genuinely useful—Google will already have everything it wants, and it will be too late for developers and mobile enthusiasts to do anything about it.

Hope for the best, prepare for the worst

While the timeline could still change, Google has said it plans to offer global access to the new developer console and associated identity verification this month. The company will begin enforcing developer verification in September, but only in Brazil, Singapore, Indonesia, and Thailand—markets that have higher rates of malware and high-pressure social engineering scams. In 2027, Google will continue expanding verification requirements until all regions are covered.

Some organizations are still hoping they can change Google’s mind. F-Droid has a website directing users to contact regulatory agencies to oppose developer verification. It also urges independent developers not to comply with Google’s verification requirements.

The F-Droid team recently published an open letter to Google, signed by 35 organizations, that expresses grave concern about what this change will mean for Android as a platform, but Google seems locked into its course of action. Even if a big chunk of independent developers boycott verification, Google may still plow ahead.

On an individual basis, there’s not much you as an Android user can do. You might be stuck letting Google police your apps, and you may not even think about it most days. That is, until you get stuck trying to install an app from someone else’s store without a reliable Internet connection or discover an open-source app you want to use is not verified.

Some developers may simply decide to abandon Android development, too. Nathan Freitas of the Guardian Project notes that the mobile web has gotten much better for developers in recent years. “We have moved a lot of our projects to progressive web apps because they can do more now,” said Freitas. “It’s like, ‘Can we do this in a browser?’ If so, then yes.”

Using more web apps could help, but the only way to truly opt out of Google’s verification system is to get off of Google’s version of Android. While there are some non-certified Android phones out there, such devices are usually rife with security vulnerabilities, so that doesn’t solve the problem. Installing a privacy-protecting alternative Android-based OS (sometimes called a ROM) like LineageOS or GrapheneOS could work. This gives you full control over the software running on your phone, but it’s getting harder to customize phones this way.

F-Droid’s Marc Prud’hommeaux sees Android ROMs as a very implausible solution to keeping open-source projects alive. Installing these software packages is beyond the abilities of most people, and device makers don’t exactly make it easy with locked-down products. “Every phone that you get is Android-certified, and many of those phones have locked bootloaders,” said Prud’hommeaux.

To a certain degree, these restrictions are inevitable for devices that connect to mobile networks. “The harm goes back to the telecoms and the mobile operators,” said Freitas, who explained that carriers have certain expectations and requirements for any baseband radio on their networks. “This thing has to work like a phone, and so we can’t just let it be a Wild West as a computer.”

If you can’t unlock the bootloader on your phone, you’re stuck with the stock software and any security changes implemented by Google and the device maker. And increasingly, it looks like they’re going to decide you need protection from yourself.

Ryan Whitwam is a senior technology reporter at Ars Technica, covering the ways Google, AI, and mobile technology continue to change the world. Over his 20-year career, he’s written for Android Police, ExtremeTech, Wirecutter, NY Times, and more. He has reviewed more phones than most people will ever own. You can follow him on Bluesky, where you will see photos of his dozens of mechanical keyboards.