Research proceeds on alternatives, but some doubt whether true lie detection is possible.

When George W. Maschke applied to work for the FBI in 1994, he had already held a security clearance for over 11 years. The government had deemed him trustworthy through his career in the Army. But soon, a machine and a man would not come to the same conclusion.

His application to be a special agent had passed initial muster. And so, in the spring of 1995, according to his account, he found himself sitting across from an FBI polygraph examiner, answering questions about his life and loyalties.

He told the truth, he said in an interview with Undark. But in a blog post on his website, he recalled the examiner told him that the polygraph machine—which measured some of Maschke’s physiological responses—indicated that he was being deceptive about keeping classified information secret, and about his contacts with foreign intelligence agencies.

“My entire career prospects were basically shattered,” said Maschke. “How could I have told the truth and failed the polygraph?”

He wanted an answer. And so soon after his failed exam, he said he went to the research library to try to learn more about what had transpired between his body, that machine, and the measuring man.

Further spurred by another negative polygraph experience, the resulting deep dive on polygraphs and examination methods eventually led him to co-found the advocacy website AntiPolygraph.org. “When I had my polygraph experience, I had no one to talk to,” said Maschke, who went on to work as a legal translator in the Netherlands. He hoped his public-facing website meant others wouldn’t have that experience.

Today, website visitors can find an e-book summarizing polygraph testing and policies, personal statements from those harmed by the results, official documents about the government’s use of polygraphy, and information about polygraph litigation, among other things.

Maschke isn’t alone in his criticism. Scientists, the press, and the justice system have also derided polygraphs as inaccurate. Research has suggested that the physiological signals they pick up produce too many false positives and too few true positives. Questions about their scientific validity are, in fact, why they’re not admissible in most US courts.

Polygraphs also generally can’t be used as part of private employers’ hiring decisions. But, despite these doubts, they’re still employed in law enforcement investigations, and in security clearance applications. That entrenched usage may make the US more vulnerable to security threats, play a part in false confessions, and lead to wrongful imprisonments.

Given those doubts, researchers and corporations are trying to find more reliable and modern ways to detect deception. Their methods—which span everything from monitoring involuntary eye behaviors to brain activity—also aren’t perfect. And some researchers question whether such an endeavor is even possible.

“This is sort of unscientific,” said Kyriakos Kotsoglou, a legal scholar at Northumbria University in England, “the idea that there’s sort of some parallel behavior in the way we think, in the way we behave, the way our body behaves.”

Still, some people hope there’s a scientific way to find out whether others are telling the truth. The real question, some experts say, is whether humans may be too complex for such quantification.

The invention of the polygraph doesn’t necessarily have a strict date, but it’s usually credited to John Augustus Larson, a police officer with a doctorate in physiology. In 1921, he measured a person’s pulse, blood pressure, and respiration, inspired to improve the techniques that William Moulton Marston, who would become a psychologist, had recently come up with. (Marston also happened to create the comic Wonder Woman and her lasso of truth, which compels those captured to be honest.)

To get at the truth, a subject is asked innocuous questions like their name, followed by charged ones like “Did you murder Sally?” An examiner would then look at the difference in bodily response between the innocuous questions and the charged ones, to pick up on potential deception.

The basic concept of a polygraph hasn’t changed much in the ensuing decades. “And it’s this kind of zombie thing that’s kept on for 100 or so years and keeps being used to this day,” said Ben Denkinger, a professor of psychology at Augsburg University in Minneapolis.

John Augustus Larson (right), a police officer with a doctorate in physiology, demonstrates the operation of a polygraph in the 1930s. Larson improved upon techniques established by William Moulton Marston and is usually credited with inventing the polygraph.

Credit: Pictorial Parade/Getty Images

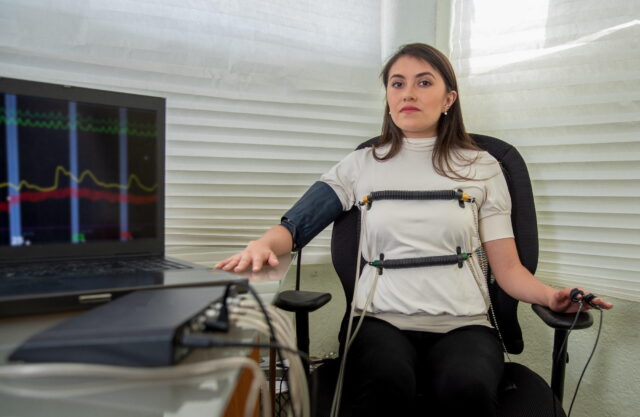

An example of a modern polygraph test. The basic concept hasn’t changed much over the last century.

Credit: Amelia Fuentes Marin/iStock via Getty Images

Today, polygraphs measure the same changes Larson did, in addition to measuring how well the skin conducts electricity, a proxy for sweating. Typically, examiners interview their subjects ahead of time and gather baseline numbers on their physiological ticking. The measured exam, meanwhile, includes neutral control questions as well as questions relevant to whatever the investigators are seeking the truth about. The idea is that if someone is lying, their physiology will show stress compared to their truthful baseline. Their heart rate will elevate; they will sweat more; their blood pressure will increase; they will breathe faster. An examiner would see those spikes in the graphs of each metric, then analyze whether a spike—especially in all the different measurements at once—indicates a lie.

But, according to numerous studies, polygraphs cannot reliably detect lying, or truth-telling, and their use in the justice and employment systems is regulated due to those problems with scientific reliability. A landmark 2003 report from the National Academies of Sciences, Engineering, and Medicine found the quality of research about polygraphy to be low, the theoretical explanation of how it functions (and why it detects lying, and not, say, nervousness) to be inadequate, the rate of false positives to be unacceptable, and the rate of false negatives to be a risk. Researchers still cite this study.

Despite questions about the tool’s robustness, though, it continues to be used in some employment screenings, law enforcement investigations, and security clearances. It’s a significant part of the culture in those latter two arenas, said Denkinger, and entrenched in pop culture too. Interest in police procedurals and true crime podcasts has made polygraphs even harder to push back against because “TV writers, movie writers—they love this stuff. It’s just a fun plot device, and it’s not treated critically at all,” he said.

But if media audiences find themselves in a polygraph exam room, they should probably feel twinges of doubt, perhaps especially if they are innocent. Citing evidence from William G. Iacono, a professor emeritus of psychology at the University of Minnesota, Denkinger said that polygraphs can identify just 75 percent of guilty people. But critically, they only accurately judge truth-tellers around 57 percent of the time. “The research generally shows that the people who are innocent are at a disadvantage,” he said.
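To see why those two figures put truthful examinees at a disadvantage, the arithmetic can be worked through for a hypothetical pool of people. The sketch below uses the 75 percent and 57 percent rates cited above; the pool size of 1,000 and the 10 percent share of guilty examinees are illustrative assumptions, not numbers from the research, and the function name is made up for this example.

```python
def polygraph_outcomes(n_people, guilty_share,
                       guilty_detected=0.75, truthful_cleared=0.57):
    """Split a hypothetical pool of examinees into outcome counts.

    guilty_detected and truthful_cleared are the rates cited in the article;
    n_people and guilty_share are illustrative assumptions.
    """
    guilty = n_people * guilty_share
    innocent = n_people - guilty
    guilty_flagged = guilty * guilty_detected              # guilty and judged deceptive
    innocent_flagged = innocent * (1 - truthful_cleared)   # truthful but judged deceptive
    flagged = guilty_flagged + innocent_flagged
    return {
        "guilty_flagged": guilty_flagged,
        "innocent_flagged": innocent_flagged,
        "share_of_flagged_who_are_innocent": innocent_flagged / flagged,
    }


# Assumed scenario: 1,000 examinees, 10 percent of whom are actually lying.
result = polygraph_outcomes(n_people=1000, guilty_share=0.10)
for name, value in result.items():
    print(f"{name}: {value:.2f}")
```

Under these assumed numbers, 75 guilty people and 387 truthful people would be judged deceptive, so roughly 84 percent of those flagged would be innocent. The share shifts with the assumed proportion of guilty examinees, but the low rate for truth-tellers keeps it high whenever most examinees are honest.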

Denkinger and Iacono have served as ongoing consultants for the Innocence Project, a nonprofit legal group that attempts to exonerate wrongfully convicted people, on cases involving polygraphs and false confessions. In support of a case the organization was working on in Texas, they contributed to a 2024 amicus brief on how polygraphs have been inappropriately used to elicit false confessions. While polygraph results haven’t generally been allowed in court since 1998, they are still used in criminal investigations, and confessions that result from the polygraph examination can appear in a trial as evidence.

Federal courts are governed by the Federal Rules of Evidence, including Rule 702. That rule means expert witness testimony has to be “based on sufficient facts or data” and be “the product of reliable principles and methods”; strict numerical accuracy isn’t specified, but the validity of an expert’s methodology is assessed using something called the “Daubert Standard,” which includes the criterion that it “has attracted widespread acceptance within a relevant scientific community.”

In 2023, when Denkinger and Iacono pulled cases from the National Registry of Exonerations, they found 56 cases where exonerees were administered a polygraph during the interrogation process and subsequently provided a false confession. Of the 36 cases with a definitive polygraph examiner judgment, a correct exculpatory outcome only occurred in eight cases. But Denkinger’s issues with the dataset went further: “Every single person who took a polygraph in the set was done a disservice by the polygraph,” he said. “Either they were told they failed because the examiner thought that the result was a deceptive response, which was a false interpretation, or they were truthful, and the interrogators or the examiner misrepresented the result and told them that they were lying.”

That latter part is the focus of Denkinger’s most recent work: how the polygraph is used coercively. For example, law enforcement is permitted to tell subjects they’re failing the polygraph even if they’re not—a practice that can induce false confessions. And it is confessions that law enforcement is after.

Charles R. Honts, professor emeritus of psychological science at Boise State University, has a similar professional focus. He spent years administering polygraphs himself and even worked at the Department of Defense Polygraph Institute, now known as the National Center for Credibility Assessment, the government agency responsible for training federal polygraph examiners and doing countermeasures research. The center is aware of the scientific skepticism around polygraphs, and does its own research on alternative technologies. “The National Center for Credibility Assessment (NCCA) acknowledges the scientific community’s concerns regarding polygraph technology,” the Defense Counterintelligence and Security Agency Office of Communications and Congressional Affairs said in a statement. “The reconciliation between these limitations and its practical utility lies in its application. Polygraph examinations are an aid that helps focus security and investigative resources. They deter applicants from withholding critical information and often elicit admissions vital to managing risk to national security missions.”

Later in Honts’ career, he became interested in that latter goal: how polygraphs are used not to seek truth but to exert pressure. “I’ve qualified as an expert to talk about false confessions, and in particular, my niche is the misuse of polygraph as a coercive tool that might produce false confessions,” said Honts.

At the University of Utah, where he got his doctorate, Honts developed a method of polygraph examination that used standardized questions and relied less on the expertise of the examiner. He says that unlike some other countries, examiners in the US haven’t adopted the methods he sees as best-practice at a large scale, in large part because they see polygraphs more as interrogation tools than lassos of truth.

That practice can let guilty people go free, send innocent people to court, and make the most sensitive parts of our government—the defense and nuclear establishments, which both use polygraphy to vet employees—less secure. For instance, infamous spy Aldrich Ames, a three-decade CIA employee who passed secrets to the Soviets and later the Russians for close to a decade prior to his arrest, passed a polygraph twice while actively committing espionage. Ames later said his polygraph savvy was aided by advice from the KGB, who told him to be cooperative and stay calm to pass the examination. The government is aware that people can use “countermeasures” to fool the device and the examiner, said Maschke. His website has published documents about both the countermeasures themselves and the government’s knowledge of them and their effectiveness.

“I think it exposes the US government to penetration by spies and saboteurs, terrorists,” said Maschke, “because the polygraph is really the cornerstone of American counterintelligence policy.”

Given these drawbacks, scientists are investigating whether better options for lie detection exist, more grounded in evidence and technologies that weren’t available when the polygraph was invented. Some of those options aren’t more accurate than the traditional method, but others are showing more promise.

One avenue doesn’t require throwing out polygraphs as a tool, but simply relying less on humans to be the sole arbiters of their results. A 2023 paper in Nature’s Scientific Reports, for instance, described machine-learning models created by the study authors to give a second opinion on human examiners’ conclusions. The models were able to detect human errors in samples of real-life polygraph screening data, reducing the subjectivity of polygraph screenings. The authors concluded their models were fit for a one-year pilot.

But Kotsoglou, the Northumbria legal scholar, co-authored a response paper. It detailed how the machine learning option doesn’t meet appropriate standards given the legal and ethical stakes of polygraph results, in large part because reliable training data for such models doesn’t exist. “The problem is that there’s no way to establish ground truth for polygraph interviews,” he said, “because you cannot show that case where you have truthfulness and where you do not have truthfulness.”

Machine learning can only suss out patterns humans have missed if the physiological measurements polygraph machines take actually do relate to lying—or if there even is a verifiable, reliable connection between physiology and truth. “Unless they show what is the underlying sort of scientific paradigm that is valid, they cannot sort of meaningfully make these claims,” he said.

But maybe examiners don’t need to sort through polygraph results, according to another group of researchers. Maybe they just need the human eye.

In 2002, two University of Utah scientists, John Kircher and Douglas J. Hacker, took a road trip to climb Mount Rainier. Kircher had long worked in the lie-detection field.

On their trip, they discussed whether the eyes (rather than things like the sweat glands and heart) were the window to the proverbial and truthful soul. The basic idea was that the brain works harder during deception, which might cause involuntary ocular behavior, like pupil dilation.

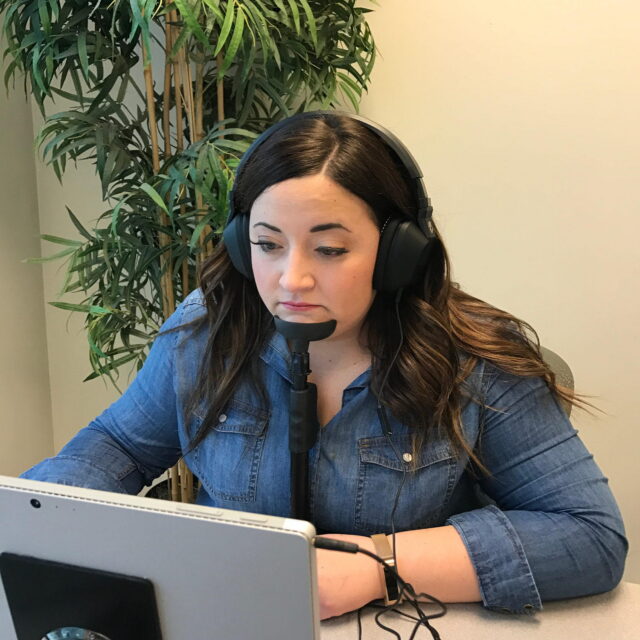

By chance, a colleague had recently gotten a grant to buy an eye tracker, and after they descended Rainier, they asked her to join their team and put her machine to detective use, said Todd Mickelsen. Mickelsen is president and CEO of Converus, the company that commercialized the eye-based technology in 2014. Converus now operates in 60 countries and has more than 1,000 customers. Today, there are around a dozen peer-reviewed studies on the technology, which is called EyeDetect. In lab situations, researchers typically rated its accuracy, under different experimental conditions and with different measurements, to be around 85 percent; independent replications are limited and so doubts about its true accuracy rates exist. “I always get asked, ‘You know, well, does it get it wrong?’ And the answer is, ‘Yes,’” said Mickelsen. “You should use it as a tool to help make a decision, not an exclusive standalone reason for which you would not hire someone, for example.”

And when asked in a follow-up email whether any research had taken neurodivergence (which may affect an individual’s eye movements) into account, Mickelsen noted that “no study has been done to look specifically at autism and groups of people who have similar characteristics; however we do have customers that have tested people with these conditions and through ground truth cases have found good success.” He also noted that their studies did control for other characteristics, such as language, gender, and educational level.

The EyeDetect system commercialized by Converus tracks the eyes to assess truthfulness. Users place their chin in a stand to stabilize the eyes during the test.

Credit: Converus

The user then looks at a computer screen while the test is administered. In lab situations, researchers typically rate the technology’s accuracy at about 85 percent.

Credit: Converus

Another set of researchers has taken a different tack: What if examiners looked inside the head instead? That’s the idea behind a method that uses EEG technology, which measures the brain’s electrical activity, and zooms in on a specific signal researchers have termed the P300. The signal is so called because it peaks approximately 300 milliseconds after a person sees a given stimulus; it is triggered during decision-making and is tied to mental processes like attention and perception. The stronger the electrical signal, researchers have found, the more significant the stimulus is to the subject.

While the P300 has been used in other fields, examiners in deception research typically look to it when using something called an “oddball” test. For example, they may show a murder suspect a mostly random list of oddball things: say, a chair, a table, a knife, a bicycle, a rope, and a dog, each dozens of times in a random order. When the weapon—the rope—is shown, a guilty person’s brain should show a strong P300 response, the thinking goes.
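The logic of that comparison can be sketched in a few lines. The example below is a toy illustration, not the pipeline any research group uses: the EEG traces are synthetic, and the sampling rate, the 250 to 400 millisecond window, the noise level, and the function names are all assumptions made for the demonstration. It averages the trials recorded for each item and compares the mean amplitude in the window around 300 milliseconds.

```python
import math
import random

SAMPLE_RATE_HZ = 100      # assumed: one sample every 10 ms
WINDOW_MS = (250, 400)    # assumed window around the P300 peak
rng = random.Random(0)    # seeded so the synthetic noise is reproducible


def synthetic_trial(p300_amplitude, n_samples=80):
    """Fake single-trial EEG: a bump centered 300 ms after the stimulus, plus noise."""
    trace = []
    for i in range(n_samples):
        t_ms = i * 1000 / SAMPLE_RATE_HZ
        bump = p300_amplitude * math.exp(-((t_ms - 300) ** 2) / (2 * 50 ** 2))
        trace.append(bump + rng.gauss(0, 1.0))
    return trace


def average_trials(trials):
    """Average across trials, sample by sample, so the noise partly cancels out."""
    return [sum(samples) / len(trials) for samples in zip(*trials)]


def window_mean(averaged_trace):
    """Mean amplitude of the averaged trace inside the assumed P300 window."""
    start = WINDOW_MS[0] * SAMPLE_RATE_HZ // 1000
    stop = WINDOW_MS[1] * SAMPLE_RATE_HZ // 1000
    segment = averaged_trace[start:stop]
    return sum(segment) / len(segment)


# Hypothetical bump sizes: the rope (the weapon) evokes a larger response.
items = {"chair": 1.0, "table": 1.2, "knife": 0.9,
         "bicycle": 1.0, "rope": 5.0, "dog": 1.1}

scores = {}
for name, amplitude in items.items():
    trials = [synthetic_trial(amplitude) for _ in range(30)]  # each item shown 30 times
    scores[name] = window_mean(average_trials(trials))

strongest = max(scores, key=scores.get)
print(strongest)
```

Because the rope's response is built into the synthetic data, the script singles it out. Real recordings are far noisier, and the amplitudes here say nothing about how large the effect is in actual subjects.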

A recent systematic review found P300 measurement improved on traditional polygraph methods, ranging from 81 percent to nearly perfect accuracy, under lab conditions, with the variation likely due to different methods of processing, extracting, and classifying the brain activity’s features. A 2025 preprint (which has been submitted to a journal and is currently undergoing revision) combined a deep learning model with the P300 and found nearly 87 percent accuracy under simulated challenging field conditions.

Another method, meanwhile, relies on fMRI, a brain-imaging technique that uses powerful magnets to measure blood flow in the brain, indicating which areas are active. That’s something Boston University’s Arthur Sangil Lee, a cognitive neuroscientist, has done. As part of his research into how different mental states look in fMRI data, Lee turned to deception. He intended to find out whether brain activity that seemed to indicate lying could, in fact, be mixed up with that of other mental states. If that was the case, Lee wanted to determine whether those signals could be separated.

To start, he built a neural predictor to tell whether someone was lying. It seemed to work. But in a second experiment, he and his research team used that neural lie detector to look at people who were telling the truth, but truths that were selfish. The result threw a wrench in the works: “And then we show that brain decoder, that lie detector that we thought we had, can also predict when somebody’s just being selfish,” he said.

In the final stage of the experiment, though, the researchers wanted to see if they could subtract out the brain activity that represented selfishness and separate it from the lying part. They could. In the future, Lee said, they might find out that the remaining signal they thought was simply “lying” is still entangled with another mental state, like arousal. After finding and excising all entanglements, he said, what’s left must be straight lying. Theoretically, at least. “It could also be an empirical result that if we take enough of these compounded processes away, deception disintegrates,” he said. There might not be a straight-lying state, in other words; maybe lying is just the sum of many parts.
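One generic way to "subtract out" a shared signal is orthogonal projection: remove from one pattern whatever part of it points in the same direction as another. The article does not say which technique Lee's team used, so the sketch below is only an illustration of the idea. The four-number "patterns" and the function names are made up.

```python
def dot(u, v):
    """Dot product of two equal-length lists of numbers."""
    return sum(a * b for a, b in zip(u, v))


def remove_component(pattern, confound):
    """Return the part of `pattern` that is orthogonal to `confound`."""
    scale = dot(pattern, confound) / dot(confound, confound)
    return [p - scale * c for p, c in zip(pattern, confound)]


# Hypothetical decoder weights over four brain regions (made-up numbers).
lie_pattern = [2.0, 1.0, 0.0, 3.0]
selfish_pattern = [1.0, 1.0, 0.0, 0.0]

residual = remove_component(lie_pattern, selfish_pattern)
print(residual)                        # [0.5, -0.5, 0.0, 3.0]
print(dot(residual, selfish_pattern))  # 0.0, so no overlap with selfishness remains
```

In this toy case something is left over after the subtraction. If the lie pattern were built entirely out of confounds like this one, repeating the step for each of them would leave a residual of all zeros, which is the "deception disintegrates" possibility Lee describes.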

Scientists like Lee may be getting closer to an accurate lie detector, and improving on the traditional polygraph. But there’s currently no superhero solution. And the problem, as Lee’s research hints, may be ontological, not technological.

That’s definitely Maschke’s view. “It’s all pseudoscience,” he said. “There is no lie detector. So my thinking is that it’s better not to pretend that you can detect lies, because it’s a way of deceiving yourself.”

Maybe it’s true no one can know, for sure, if another person is lying. After all, humans are, famously, individuals. “Everybody’s so different in how they tell their lie,” said Denkinger. And, apparently, in how they tell their truths.

This article was originally published on Undark.