San Francisco, March 25, 2026 (GLOBE NEWSWIRE) — GoodVision AI, an AI infrastructure company led by former AWS and IBM executives, has introduced an intelligent compute scheduling solution combined with distributed edge inference infrastructure, aimed at addressing rising token consumption, latency, and cost challenges driven by the rapid adoption of AI agents.

At GTC 2026, NVIDIA CEO Jensen Huang noted that AI infrastructure is evolving from traditional “data centers” into “token factories,” where inference throughput becomes a key metric. He indicated that inference demand could increase by multiple orders of magnitude, potentially reaching a million-fold growth within the next two years.

At the same time, systems such as OpenClaw represent a new class of AI agents capable of understanding user intent, maintaining long-term memory, invoking external tools, and autonomously executing multi-step tasks across workflows. As these systems begin to be deployed in production environments, a new constraint is emerging around token consumption.

A single complex task executed by an AI agent may require hundreds of model calls, significantly increasing token usage compared to traditional prompt-response interactions. Industry practitioners report that agent-based workflows can lead to substantial increases in token expenditure, with some high-usage scenarios reaching extremely large daily consumption volumes.

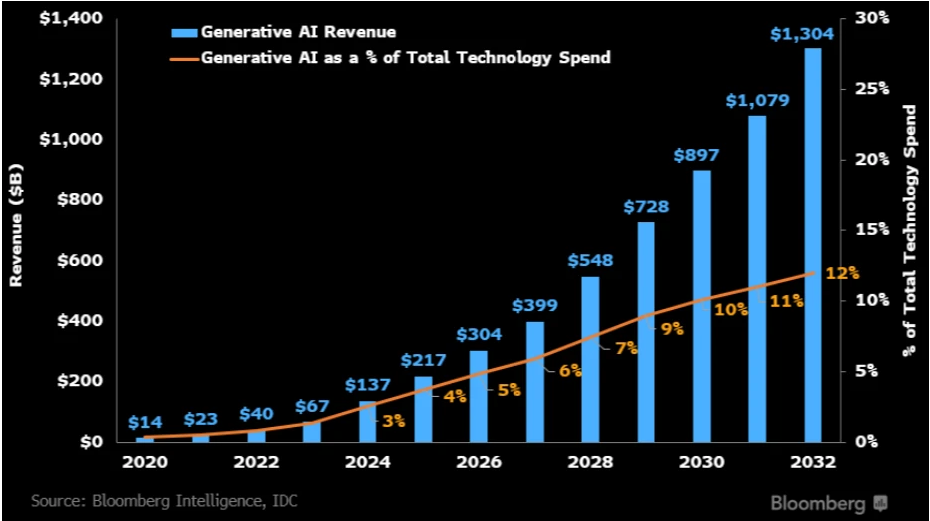

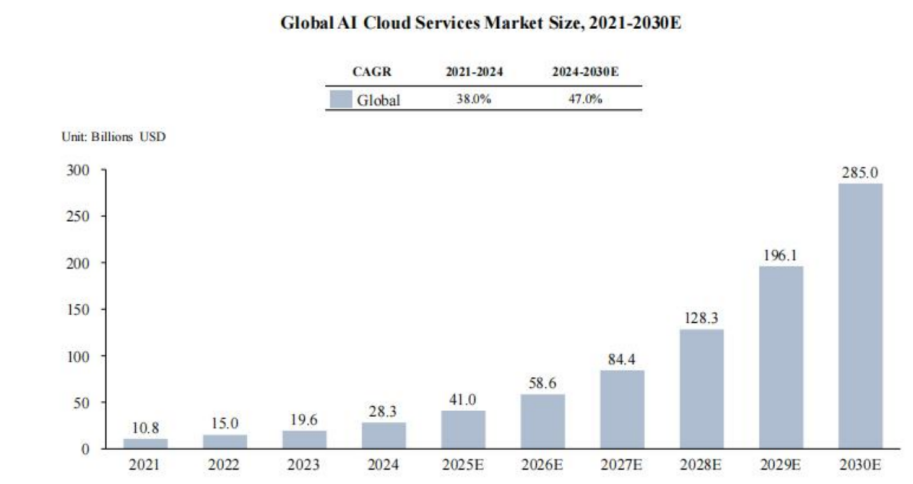

Hyperscalers are increasing capital expenditures to expand AI infrastructure capacity, with combined planned investments exceeding $280 billion in 2026. These investments are focused on securing power resources and compute capacity over the coming years.

However, the rapid increase in demand raises a key industry question: whether scaling centralized compute infrastructure alone is sufficient to address the efficiency, cost, and latency challenges observed in real-world AI deployments.

Compute Congestion: Is More Compute Really the Answer?

GoodVision AI’s CEO, David Wang, has spent decades in the cloud computing industry. He was a Partner at IBM and former Senior Director at AWS, where he helped scale regional cloud operations from zero to hundreds of millions in revenue.

Through years of close involvement in cloud infrastructure, David identified a recurring structural pattern that application demand consistently scales faster than compute infrastructure supply. This persistent mismatch between supply and demand became the foundation of his thesis—and a key motivation behind founding GoodVision AI in 2019.

As large models and AI applications have rapidly proliferated, this view has only been reinforced. Internally, the company has seen its AI-related revenue enter a high-growth phase, reaching nearly $10 million in 2025 with over 100% year-over-year growth. With the rollout of its AI Factory and broader compute infrastructure, GoodVision AI expects total AI revenue to scale to hundreds of millions of dollars by 2027, marking a new phase of expansion.

With the rollout of its AI Factory and broader compute infrastructure, GoodVision AI expects total AI revenue to reach hundreds millions of dollars by 2027, marking a new phase of scale.

In the early wave of AI—when OpenAI brought large language models into the mainstream—industry discourse was almost entirely focused on training compute. David took a different view: “Model training happens once, but inference happens billions of times.”

As AI agents and applications are invoked simultaneously by millions of users, inference workloads become inherently distributed—across geographies, devices, and network conditions. Then the problem happened: today’s cloud architecture was never designed for this demand structure. When inference demand surges faster than supply, the consequences are already visible like rising latency,escalating costs and degraded output reliability in real-world applications.

David argues that AI infrastructure must evolve toward a more distributed and hierarchical architecture:

- Centralized cloud models should handle complex, high-value tasks

- Edge or localized compute should process high-frequency, latency-sensitive inference

The key is not just more compute but better allocation of compute. Through an intelligent scheduling system, tasks of varying complexity can be dynamically routed to the most appropriate compute resources. This prevents all requests from converging on centralized hyperscale data centers — avoiding compute congestion, reducing costs, and improving real-time performance.

In this framework, scaling AI is no longer about brute-force infrastructure expansion, but about matching the right workload to the right compute layer.

Distribution Is the Key to Solving AI Compute Constraints

If we break down today’s AI compute landscape, several distinct models emerge. At the top are the hyperscalers—whose core business is delivering Infrastructure-as-a-Service (IaaS) for general-purpose workloads at massive scale. Alongside them are GPU-native cloud providers, which focus on supplying compute resources tailored for AI training and inference, effectively operating as the next generation of GPU clouds. A third category includes model service platforms which provide unified interfaces that allow developers to route and switch between different models.

Each of these models addresses a specific layer of the stack, but none fully solves the emerging challenges of AI at scale. Traditional hyperscalers rely heavily on centralized data centers, which, while powerful, can become inefficient when handling geographically distributed demand and real-time inference workloads. GPU cloud providers expand compute supply but lack intelligent orchestration, while API routing platforms enable flexibility across models yet have no control over underlying compute resources.

As AI agents gain traction, a new class of demand is emerging. Agent-driven workflows are inherently multi-step, requiring coordination across different models and compute types, while also demanding low latency and cost efficiency. If all inference requests are funneled into remote, centralized data centers, both latency and costs escalate rapidly.

The core challenge, therefore, is not simply scaling more compute capacity, but building a distributed and intelligent compute delivery layer. What the industry increasingly needs is a system capable of dynamically allocating workloads—routing each task to the most appropriate compute resource in real time.

This is precisely where GoodVision AI positions itself: not as another compute provider, but as an intelligent compute distribution network, designed to orchestrate inference at scale.

Compute Distribution Networks: GoodVision AI’s “AI CDN” Approach

In the early days of the internet, website traffic was concentrated on a limited number of centralized servers. As user demand scaled, Content Delivery Networks (CDNs) emerged—distributing cached content across globally dispersed nodes to bring data closer to end users. A similar architectural shift is now unfolding in the AI era. As AI agents scale, compute demand is no longer centralized; inference workloads are increasingly distributed across geographies, cloud environments, data centers, and even edge devices.

If the core tension in today’s AI landscape is the growing imbalance between compute supply and demand; then the solution lies not simply in provisioning more compute, but in rethinking how compute is distributed and delivered. At GTC 2026, Jensen Huang emphasized that the key performance metrics for future AI systems will shift away from raw compute scale toward token output per unit of energy, throughput efficiency, and latency—effectively redefining what makes a “token factory” competitive.

GoodVision AI builds its architecture around this principle. Internally, the system is referred to as the AI Factory—a vertically integrated infrastructure stack that combines GPU compute resources with a globally distributed compute node network, and an intelligent scheduling layer capable of orchestrating workloads across heterogeneous environments.

At the core of this architecture is a proprietary AI agent that functions as a “control plane” for compute orchestration, alongside a token aggregation layer similar to existing API routing platforms. However, unlike pure aggregators, GoodVision AI integrates owned physical compute infrastructure and deployable private model clusters, enabling tighter control over resource allocation and utilization.

One of the key innovations is token-level compute scheduling. Instead of routing requests at the model level, this approach dynamically allocates workloads at a finer granularity—based on task complexity, cost sensitivity, and latency requirements. Workloads can be intelligently routed across public clouds such as Amazon Web Services and Google Cloud, as well as private data centers, ensuring optimal execution paths in real time. Crucially, by owning and controlling underlying compute resources, GoodVision AI is able to stabilize token supply, gain pricing power, and maximize margin capture across the value chain.

At the same time, the company is actively deploying edge compute nodes. As AI agents move into real-world environments and user devices, not all workloads are suited for centralized cloud processing. By placing compute closer to end users, GoodVision AI can significantly reduce latency and improve responsiveness. This architecture—conceptually similar to a CDN—allows compute to be “delivered” to the point of demand, rather than forcing all inference requests through distant hyperscale data centers.

Speed as a Strategic Advantage in AI Compute Expansion

Since 2025, GoodVision AI has been building out its inference compute footprint across Asia and globally, with Japan, South Korea and US emerging as key strategic hubs. The company has already secured more than 400 MW of power capacity across these regions and plans to scale this into large, production-grade inference clusters. At full buildout, its network is designed to support up to 400,000 inference GPUs, representing a multi-billion-dollar compute asset base. These nodes will be tightly integrated with its intelligent scheduling system, forming a globally distributed compute network.

Unlike platforms that focus solely on orchestration, GoodVision AI has built a vertically integrated stack spanning infrastructure development, operations, and demand-side distribution.

For GoodVision AI, these owned and controlled compute assets serve as a critical base layer of supply. In periods of external compute shortages or price volatility, they provide both capacity resilience and greater flexibility in scheduling—ensuring the network can scale efficiently while maintaining cost control.

Future Vision: When Every City Has Its Own AI Factory

As AI agents become embedded in everyday workflows, demand for compute is set to grow exponentially. At its core, this demand is driven by continuous inference workloads—spanning enterprise systems, personal devices, and even urban infrastructure—where real-time responsiveness and reliability are critical.

In parallel, AI infrastructure is evolving toward a globally distributed network of compute nodes, where resources can be dynamically allocated much like data flows across the internet. This is the foundation of GoodVision AI’s AI Factory concept: localized inference hubs designed to serve regional AI applications while remaining interconnected within a global compute network. Each AI Factory functions as a modular production unit for AI inference, supporting local enterprises and developers while participating in cross-network scheduling.

Unlike traditional hyperscale data centers, these AI Factories are deployed closer to end users, enabling a significant portion of real-time inference to be processed at the city level. This proximity translates into measurable performance gains. In existing deployments, clients that migrated to GoodVision AI’s infrastructure have achieved approximately 60% cost reduction, 50% lower latency, and around 50% improvement in platform gross margins.

GoodVision AI is already expanding into compute-intensive verticals such as video generation and biotech. In these sectors, the bottleneck is no longer model capability, but the efficiency of matching rapidly growing inference demand with token consumption and compute supply. Video generation platforms, for instance, require massive volumes of image and video inference requests, while AI-driven drug discovery pipelines—from molecular modeling and protein folding prediction to drug screening and clinical simulation—depend on sustained, large-scale compute. These workloads require infrastructure that is not only powerful, but also low-latency, stable, and horizontally scalable.

As advanced industries—particularly biotech—become increasingly reliant on AI, they are likely to emerge as core customers and long-term growth drivers for GoodVision AI’s compute network.

Looking ahead, as more cities deploy their own AI Factories, compute will no longer remain concentrated in the hands of a few technology giants. Instead, it will evolve into a foundational utility—much like electricity or internet connectivity. Developers, enterprises, and even individual users will be able to access AI agents on demand for creation, automation, and innovation.

The true mass adoption of AI will not be defined solely by better models, but by the emergence of a distributed, globally coordinated compute network that makes intelligence universally accessible.

CONTACT: Joy Chen media@goodvision.ai