Fixstars AIBooster Dramatically Enhances AI Training Efficiency with Proprietary Optimization Algorithms

/PRNewswire/ -- Fixstars Corporation (TSE Prime: 3687, US Headquarters: Irvine, CA), a global leader in performance engineering, today announced a major upgrade to Fixstars AIBooster that significantly enhances its automated hyperparameter optimization.

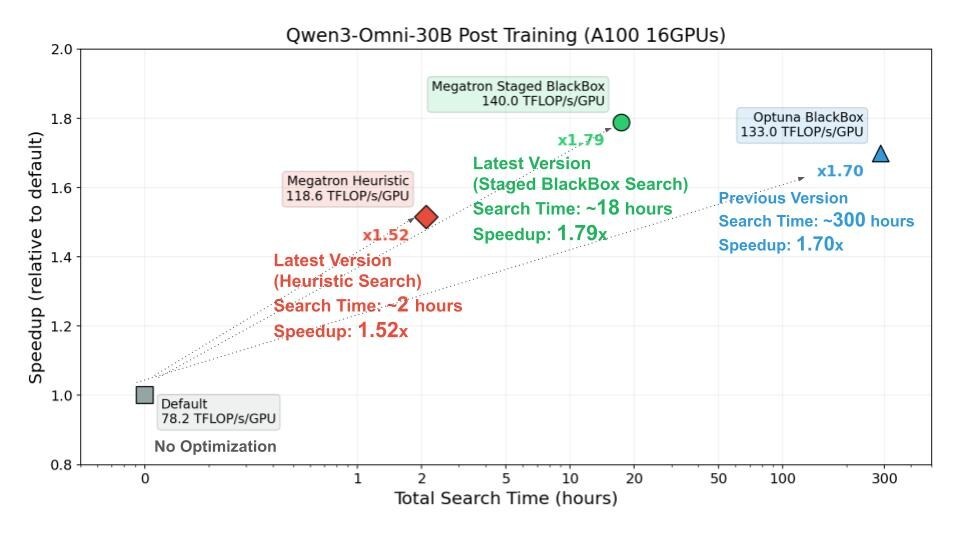

In recent benchmark tests of AI training performance, Fixstars compared three scenarios: unoptimized, optimized using the previous version, and optimized using the latest version. The results showed that the latest AIBooster identifies superior hyperparameters in approximately one-sixteenth of the time required by the previous version, further improving training throughput and operational efficiency.

The Impact of Automated Hyperparameter Optimization on AI Training

In the distributed training of Large Language Models (LLMs), numerous parameters—such as tensor parallelism, pipeline parallelism, and micro-batch size—dictate training efficiency. Setting optimal hyperparameters can significantly increase AI training speeds.
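To give a sense of how quickly this search space grows, the sketch below enumerates candidate combinations for a 16-GPU job. It assumes the commonly used constraints that tensor-parallel size × pipeline-parallel size × data-parallel size equals the GPU count, and that the global batch size is divisible by micro-batch size × data-parallel size. The function name, the global batch size of 128, and the micro-batch candidates are hypothetical values chosen for illustration; they do not come from AIBooster or from the benchmark.

```python
from itertools import product


def enumerate_parallel_configs(num_gpus, global_batch_size,
                               micro_batch_sizes=(1, 2, 4, 8)):
    """List candidate (TP, PP, DP, micro-batch) settings for a fixed GPU count.

    Illustrative only: real frameworks add further constraints (for example,
    layer count divisible by PP, attention heads divisible by TP) that are
    not modeled here.
    """
    divisors = [d for d in range(1, num_gpus + 1) if num_gpus % d == 0]
    configs = []
    for tp, pp in product(divisors, divisors):
        # TP * PP must divide the GPU count; the remainder is data parallelism.
        if num_gpus % (tp * pp) != 0:
            continue
        dp = num_gpus // (tp * pp)
        for mbs in micro_batch_sizes:
            # The global batch must split evenly into micro-batches per replica.
            if global_batch_size % (mbs * dp) != 0:
                continue
            configs.append({
                "tensor_parallel": tp,
                "pipeline_parallel": pp,
                "data_parallel": dp,
                "micro_batch_size": mbs,
                "grad_accum_steps": global_batch_size // (mbs * dp),
            })
    return configs


# Hypothetical setup: 16 GPUs, global batch size of 128.
candidates = enumerate_parallel_configs(num_gpus=16, global_batch_size=128)
print(len(candidates), "candidate configurations")
print(candidates[0])
```

Even with only three tunable parameters, this toy setup yields dozens of candidates, and each one must be benchmarked with a real training run to measure its throughput.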

Traditionally, searching for the ideal combination of hyperparameters required deep expertise and extensive trial and error, placing a heavy burden on engineers. Fixstars AIBooster automates this search process, allowing engineers to focus on higher-value development tasks.

By improving AI training efficiency, organizations can achieve the following:

- Reduced AI Investment Costs: Maximize the utilization of limited GPU assets. Efficient operation reduces hardware procurement costs and power consumption.

- Enhanced Accuracy via Rapid Iteration: Faster training cycles allow for more frequent iterations, resulting in more accurate AI models and improving both the speed and quality of AI development.

Performance Gains Through Proprietary Algorithms

Fixstars has implemented two new proprietary algorithms—Heuristic Search and Staged BlackBox Search—specifically designed for hyperparameter exploration using domain knowledge of Megatron Core parallelization strategies.

Benchmarks of Qwen3-Omni-30B supervised fine-tuning (SFT) on 16 NVIDIA A100 GPUs yielded the following results:

- Heuristic Search: Achieved 1.52x faster training (118.6 TFLOP/s/GPU) in just 2 hours, reaching the 1.5x speedup level 150x faster than previous methods.

- Staged BlackBox Search: Delivered 1.79x speedup (140.0 TFLOP/s/GPU) in 18 hours, finding optimal parameters 16x faster than conventional methods.

Users can now choose the best approach for their needs: Heuristic Search for rapid practical speedups or Staged BlackBox Search for maximum performance.
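As a rough cross-check of the figures above, the following sketch derives quantities the release does not state directly: the unoptimized baseline throughput implied by each result, the relative training time after tuning, and the search time the previous approach would have needed if the quoted ratios apply to the quoted search durations. That last interpretation is an assumption, and all derived values are back-of-the-envelope estimates rather than published measurements.

```python
# Figures quoted in the benchmark results above.
results = {
    "Heuristic Search": {
        "speedup": 1.52, "tflops_per_gpu": 118.6,
        "search_hours": 2, "search_time_ratio": 150,
    },
    "Staged BlackBox Search": {
        "speedup": 1.79, "tflops_per_gpu": 140.0,
        "search_hours": 18, "search_time_ratio": 16,
    },
}


def implied_baseline_tflops(r):
    """Unoptimized throughput implied by the tuned throughput and speedup."""
    return r["tflops_per_gpu"] / r["speedup"]


def relative_training_time(r):
    """Training time after tuning as a fraction of the unoptimized time."""
    return 1.0 / r["speedup"]


def implied_previous_search_hours(r):
    """Assumption: the quoted ratio applies directly to the quoted search time."""
    return r["search_hours"] * r["search_time_ratio"]


for name, r in results.items():
    print(
        f"{name}: baseline ~ {implied_baseline_tflops(r):.1f} TFLOP/s/GPU, "
        f"training time ~ {relative_training_time(r):.0%} of unoptimized, "
        f"previous search ~ {implied_previous_search_hours(r)} hours"
    )
```

Both results imply a baseline of roughly 78 TFLOP/s/GPU, which suggests the two speedups were measured against the same unoptimized run.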

No-Code Tuning Capabilities

The latest version introduces a no-code feature, allowing users to execute tuning via command-line operations without writing Python scripts. This enables engineers without specialized optimization backgrounds to leverage high-precision hyperparameter tuning immediately.

About Fixstars AIBooster

Fixstars AIBooster is a solution designed to optimize the efficiency of computational resources and unlock peak performance for AI workloads, including AI training and inference. It is built on the following three pillars:

- Performance Observability (PO): Continuously visualizes hardware (GPU) and software execution profiles to identify performance fluctuations and bottlenecks.

- Performance Intelligence (PI): Drives continuous improvement through bottleneck analysis, automated acceleration, AI agent-driven suggestions, and expert reviews.

- Optimized AI Infrastructure: Provides tailored infrastructure (public cloud, private cloud, or on-premises) that leverages data-driven insights from PO and PI.

Release note: https://doc.aibooster.fixstars.com/en/

Media Contact:

Aki Asahara

[email protected]

(408) 400-3679

SOURCE Fixstars