Pioneer turns language model development and fine-tuning from a months-long, expert-driven workflow into a single prompt and introduces adaptive inference, a new category in model serving where deployed models continuously improve on live production data, without human intervention.

/PRNewswire/ -- Fastino Labs, the applied AI research lab behind the widely adopted open source GLiNER model family, today announced the launch of Pioneer, a state-of-the-art language model fine-tuning agent and adaptive inference platform for open source small language models. With Pioneer, any developer can fine-tune and deploy production-ready models like Qwen, Gemma, Llama, Nemotron, and GLiNER with a single prompt.

Pioneer is the first platform to bring adaptive inference to production: a new approach to model serving in which deployed models are continuously and autonomously retrained on their own live inference data, with improved checkpoints automatically validated and promoted over time. The era of “deploy and forget” for language models has arrived.

“We believe that the future will not just be a few large models, but billions of small models working together. However, in reality, building language models today is extremely difficult,” said Ash Lewis, CEO and co-founder of Fastino. “Pioneer collapses that into a prompt. And once your model is deployed, it keeps getting better on its own. For the first time, the model you ship on day one is the worst model you’ll ever use.”

“Frontier model token costs haven’t dropped as expected, making accurate, task-specific open source models the most important tools in the AI stack.”

Small models, built agentically

Frontier large language models have pushed the boundaries of AI, but most production workloads only need a fraction of their parameters and compute. Fine-tuned small language models consistently match or exceed frontier model accuracy on specific tasks at a fraction of the latency and cost. Fastino believes specialized small models will be the primary building blocks of agentic AI, and that the tooling to build them should be accessible to every developer.

Pioneer delivers this through two agentic modes:

- Agent Mode lets users fine-tune and deploy a model in minutes through a simple chat interface. The agent handles synthetic data generation, hyperparameter selection, evaluation, and deployment with no code required.

- Deep Research Mode is a fully autonomous fine-tuning agent with web browsing access. Given only a natural-language task description, it discovers training data, runs multiple experiments in parallel, recovers from failed runs, and iteratively improves the model until it reaches an optimal configuration.

Introducing adaptive inference

Pioneer is also the first platform to offer adaptive inference for all deployed models. Pioneer’s agent continuously monitors deployed models through their inference traces, identifies failure patterns, and automatically trains and deploys improved checkpoints.
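The validate-and-promote step described above can be illustrated with a minimal Python sketch. It is not Pioneer's implementation: the `Checkpoint` class, the `accuracy` and `promote_if_better` functions, the `min_gain` parameter, and the toy spam data are all hypothetical names and values chosen for illustration. The sketch assumes a candidate checkpoint replaces the serving one only when it scores higher on a held-out set of labeled examples.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple

Example = Tuple[str, str]  # (input text, expected label)


@dataclass
class Checkpoint:
    """Hypothetical stand-in for a deployed model version."""
    name: str
    predict: Callable[[str], str]


def accuracy(ckpt: Checkpoint, examples: Sequence[Example]) -> float:
    """Fraction of held-out examples the checkpoint labels correctly."""
    correct = sum(1 for text, label in examples if ckpt.predict(text) == label)
    return correct / len(examples)


def promote_if_better(current: Checkpoint, candidate: Checkpoint,
                      holdout: Sequence[Example],
                      min_gain: float = 0.0) -> Checkpoint:
    """Return the candidate only if it beats the current checkpoint
    on the held-out set by more than min_gain; otherwise keep current."""
    if accuracy(candidate, holdout) > accuracy(current, holdout) + min_gain:
        return candidate
    return current


# Toy data and toy "models" (assumptions for illustration only).
holdout = [("win a free prize now", "spam"),
           ("lunch at noon?", "ham"),
           ("free cash", "spam")]
current = Checkpoint("v1", lambda text: "ham")
candidate = Checkpoint("v2", lambda text: "spam" if "free" in text else "ham")

serving = promote_if_better(current, candidate, holdout)
print(serving.name)
```

Under this gating rule, a retrained checkpoint that scores worse on the held-out set is never served, so the serving checkpoint's score on that set cannot go down.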

Across seven benchmark scenarios designed to simulate real-world deployment drift, Pioneer maintained monotonic improvement while naive retraining approaches degraded, with final performance gaps of up to 43 percentage points. To support this research, Fastino is also introducing AdaptFT-Bench, a new benchmark for evaluating autonomous model improvement under realistic production conditions.
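To make the two quantities in that result concrete, the short Python sketch below shows one way to check a score series for monotonic improvement and to compute a final gap in percentage points. The function names and the score values are made up for illustration and are not taken from AdaptFT-Bench or the technical report; the sketch also assumes "monotonic improvement" means that scores never decrease from one round to the next.

```python
from typing import Sequence


def is_monotonic_improvement(scores: Sequence[float]) -> bool:
    """True if each score is at least as high as the one before it."""
    return all(later >= earlier for earlier, later in zip(scores, scores[1:]))


def final_gap_points(a: Sequence[float], b: Sequence[float]) -> float:
    """Difference between the final scores of two runs, in percentage points.
    Both series are assumed to be accuracies expressed in percent."""
    return a[-1] - b[-1]


# Hypothetical accuracy (%) after each retraining round.
gated_retraining = [60.0, 64.0, 71.0]
naive_retraining = [60.0, 66.0, 58.0]

print(is_monotonic_improvement(gated_retraining))
print(is_monotonic_improvement(naive_retraining))
print(final_gap_points(gated_retraining, naive_retraining))
```

A percentage-point gap is an absolute difference between two accuracies, not a relative change, so a move from 58% to 71% is a gap of 13 points.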

“Adaptive inference will soon be a standard feature in model serving,” said George Hurn-Maloney, COO and co-founder of Fastino. “Your production data is the most valuable training signal you have. Pioneer is the first tool actually using it to make your models better, automatically.”

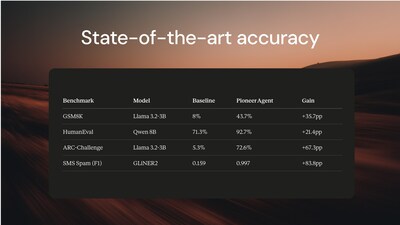

Breakthrough Benchmark Results & Technical Report

Alongside the Pioneer launch, Fastino is publishing a detailed technical report documenting the performance gains achieved by its fine-tuning agent. Across a wide range of academic benchmarks, Pioneer’s Deep Research Mode improved accuracy by as much as 83.8 percentage points over base models, with end-to-end runs completing in a matter of hours at a cost measured in tens of dollars. The report also covers the methodology behind the platform’s fine-tuning and adaptive inference systems, and describes AdaptFT-Bench in detail.

On a wide variety of academic benchmarks, Pioneer delivered substantial accuracy gains across every base model tested, including a 19.0 percentage point improvement on IFEval with NVIDIA-Nemotron-3B, a 21.4 percentage point improvement on HumanEval with Qwen 8B, a 67.3 percentage point improvement on ARC-Challenge with Llama 3.2-3B, and an 83.8 percentage point improvement on SMS Spam with GLiNER2. End-to-end agent runs completed in an average of 6 hours at a cost of around $35, a fraction of what a senior machine learning engineer would spend on the same workflow.

Backed by leading investors

Pioneer is the latest product from Fastino Labs, which has raised $25 million in total funding across its pre-seed and seed rounds, led by Khosla Ventures and Insight Partners, with participation from M12 (Microsoft’s venture fund), NEA, Valor Equity Partners, and angels including GitHub CEO Thomas Dohmke, former Docker CEO Scott Johnston, and Weights & Biases CEO Lukas Biewald.

Fastino’s open source GLiNER model family has been downloaded more than 6 million times and is used in production by teams at leading Fortune 500 companies.

Availability

Pioneer is available to developers starting today. To learn more or start fine-tuning a model from a single prompt, visit pioneer.ai.

About Fastino Labs

Fastino Labs is an applied AI research lab building small open source models and the infrastructure to make them continuously better in production. Founded in 2024 and based in Palo Alto, California, Fastino is the creator of the GLiNER open source model family and Pioneer, the first agentic fine-tuning and adaptive inference platform. The company is backed by Khosla Ventures, Insight Partners, M12, NEA, and others. Learn more at fastino.ai.

SOURCE Fastino Labs